Website Scrape Modernization: The Digital Transformation CTO’s Integration Playbook

Digital transformation is no longer a standalone initiative — it has become the backbone of enterprise competitiveness. In this landscape, website scrape capabilities have evolved from tactical tools into strategic sources of external intelligence.

For a CTO, the challenge is not whether to implement scraping, but how to integrate website scrape operations coherently into the broader digital transformation roadmap. Modernization means redesigning processes, enforcing governance, and ensuring real scalability.

1. Website Scrape as Critical Infrastructure, Not an Isolated Tool

In many organizations, a website scrape initiative starts as an experiment. One team needs competitive pricing data, another needs market signals, and a script is developed. It works. It delivers value. But it doesn’t scale.

The issue arises when that experiment grows without architectural redesign. What began as a tactical solution becomes a mission-critical dependency without enterprise-grade standards.

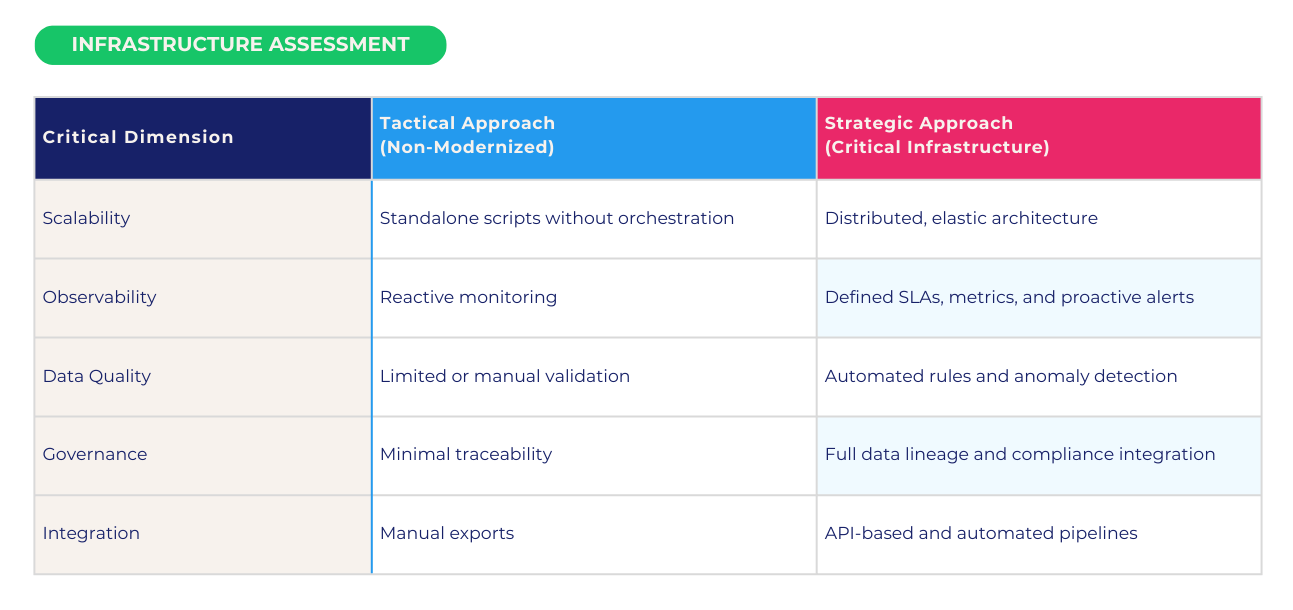

From a CTO’s strategic perspective, website scrape operations must meet the same standards as any core system: availability, resilience, traceability, and business alignment.

Rather than thinking in terms of “bots collecting data,” CTOs should ask: How does our website scrape operation perform against structural infrastructure criteria?

When website scrape capabilities are designed as critical infrastructure, data acquisition is decoupled from transformation and consumption, internal service-level agreements are defined, scraped data integrates directly into the enterprise data lake or warehouse, and multiple business units can reuse structured external intelligence.
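The decoupling described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a reference implementation: the names `RawPage`, `PriceRecord`, `acquire`, and `transform` are hypothetical, the in-memory `Queue` stands in for whatever durable buffer (object storage, a message broker) an enterprise would actually use, and the fetch and parse callables are injected so that each stage can change without touching the other.

```python
from dataclasses import dataclass
from queue import Queue
from typing import Callable, Iterable, List


@dataclass(frozen=True)
class RawPage:
    """Output of the acquisition stage: untouched page content."""
    url: str
    html: str


@dataclass(frozen=True)
class PriceRecord:
    """Output of the transformation stage: structured, reusable data."""
    url: str
    price: float


def acquire(urls: Iterable[str], fetch: Callable[[str], str], buffer: Queue) -> None:
    """Acquisition only fetches and stores raw pages; it knows nothing about parsing."""
    for url in urls:
        buffer.put(RawPage(url=url, html=fetch(url)))


def transform(buffer: Queue, parse: Callable[[str], float]) -> List[PriceRecord]:
    """Transformation drains the buffer and produces records for downstream consumers."""
    records = []
    while not buffer.empty():
        page = buffer.get()
        records.append(PriceRecord(url=page.url, price=parse(page.html)))
    return records


if __name__ == "__main__":
    # Stand-in fetch and parse functions so the sketch runs without network access.
    fake_pages = {"https://example.com/a": "price=19.90"}
    buffer: Queue = Queue()
    acquire(fake_pages, fake_pages.__getitem__, buffer)
    print(transform(buffer, lambda html: float(html.split("=")[1])))
```

Because consumers only ever see `PriceRecord` objects, a pricing engine and a BI dashboard can reuse the same output without knowing how the pages were fetched.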

This conceptual shift is central to any serious approach to website scraping within a digital transformation program. The difference between technological leadership and operational fragility often lies in this architectural decision.

2. Website Scraping Modernization in Legacy Environments

Website scraping modernization rarely happens in greenfield environments. Most large enterprises operate complex legacy systems that cannot simply be replaced. The objective is not disruption, but intelligent integration.

A structured modernization strategy addresses three architectural layers:

A. Acquisition Architecture

The digital ecosystem is dynamic: JavaScript-rendered content, frequent structural changes, and sophisticated anti-automation mechanisms require distributed infrastructure, continuous stability testing, and dynamic adjustment capabilities to maintain reliability across volatile environments. Understanding the full spectrum of data collection methods for scalable scraping architecture is essential before designing this layer.
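Two of the acquisition concerns named above, reliability under transient failures and early detection of structural changes, can be illustrated with a short sketch. Everything here is an assumption made for illustration: `fetch_with_retry` and `missing_markers` are hypothetical helpers, the fetch function is injected rather than tied to a specific HTTP library, and a production system would add proxy rotation, rendering, and narrower error handling.

```python
import time
from typing import Callable, Iterable, List


def fetch_with_retry(
    fetch: Callable[[str], str],
    url: str,
    attempts: int = 3,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Call fetch(url), retrying with exponential backoff on any exception."""
    last_error = None
    for attempt in range(attempts):
        try:
            return fetch(url)
        except Exception as exc:  # a real system would catch narrower error types
            last_error = exc
            if attempt < attempts - 1:
                # Delay doubles on each retry: base_delay, 2x, 4x, ...
                sleep(base_delay * (2 ** attempt))
    raise RuntimeError(f"giving up on {url} after {attempts} attempts") from last_error


def missing_markers(html: str, required: Iterable[str]) -> List[str]:
    """Return the expected page markers that are absent, a cheap signal of layout change."""
    return [marker for marker in required if marker not in html]
```

Running `missing_markers` on every fetched page gives a simple stability test: a non-empty result suggests the target site changed its structure and the parser needs attention before bad data reaches downstream systems.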

B. Standardization and Data Quality

In enterprise environments, the core challenge is not extraction but usability. Modern website scrape frameworks must include semantic normalization, automated validation rules, and early anomaly detection. Without this layer, scraping introduces friction instead of competitive advantage.
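The three elements of this layer (normalization, validation rules, anomaly detection) might look like the following in practice. This is a hedged sketch: the record fields (`sku`, `currency`, `price`), the `ALLOWED_CURRENCIES` set, and the threshold are illustrative assumptions, and the anomaly check uses a median absolute deviation test chosen here for simplicity, not a method prescribed by any particular framework.

```python
from statistics import median
from typing import Dict, List

ALLOWED_CURRENCIES = {"USD", "EUR", "BRL"}  # hypothetical allowlist


def normalize(record: Dict[str, str]) -> Dict[str, object]:
    """Bring raw scraped fields into one canonical shape."""
    return {
        "sku": record["sku"].strip().upper(),
        "currency": record["currency"].strip().upper(),
        "price": round(float(record["price"]), 2),
    }


def validate(record: Dict[str, object]) -> List[str]:
    """Return a list of rule violations; an empty list means the record passes."""
    errors = []
    if not record["sku"]:
        errors.append("empty sku")
    if record["currency"] not in ALLOWED_CURRENCIES:
        errors.append("unknown currency")
    if record["price"] <= 0:
        errors.append("non-positive price")
    return errors


def is_anomalous(price: float, history: List[float], threshold: float = 5.0) -> bool:
    """Flag a price that sits far from the recent median, measured in MAD units."""
    if len(history) < 3:
        return False  # too little history to judge
    med = median(history)
    mad = median([abs(p - med) for p in history])
    if mad == 0:
        return price != med
    return abs(price - med) / mad > threshold
```

Records that fail `validate` or trip `is_anomalous` can be quarantined for review instead of flowing straight into pricing decisions.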

C. Enterprise Integration

This is where strategic impact materializes. Modern website scrape operations must integrate through APIs, event-driven systems, or automated pipelines into pricing engines, BI platforms, financial systems, and machine learning models. A mature scraping strategy does not create data silos; it enables interoperability across the enterprise ecosystem.
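To make the event-driven option concrete, here is a minimal in-process sketch. It assumes a hypothetical `EventBus` class and topic name; in a real deployment a message broker would play this role, but the shape is the same: the scraping side publishes one event, and any number of consumers subscribe without the publisher knowing about them.

```python
import json
from collections import defaultdict
from typing import Callable, Dict, List


class EventBus:
    """In-memory stand-in for a message broker (illustrative only)."""

    def __init__(self) -> None:
        self._subscribers: Dict[str, List[Callable[[dict], None]]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Callable[[dict], None]) -> None:
        """Register a consumer for a topic."""
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, payload: dict) -> str:
        """Serialize the event once, then deliver a decoded copy to each subscriber."""
        message = json.dumps({"topic": topic, "payload": payload}, sort_keys=True)
        for handler in self._subscribers[topic]:
            handler(json.loads(message)["payload"])
        return message


if __name__ == "__main__":
    bus = EventBus()
    # Two hypothetical consumers: a pricing engine and a BI platform.
    bus.subscribe("competitor.price.updated", lambda p: print("pricing engine got", p))
    bus.subscribe("competitor.price.updated", lambda p: print("BI platform got", p))
    bus.publish("competitor.price.updated", {"sku": "A1", "price": 10.5})
```

Serializing to JSON before delivery keeps the contract between producer and consumers explicit, which is what prevents a new silo from forming around the scraper's internal data types.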

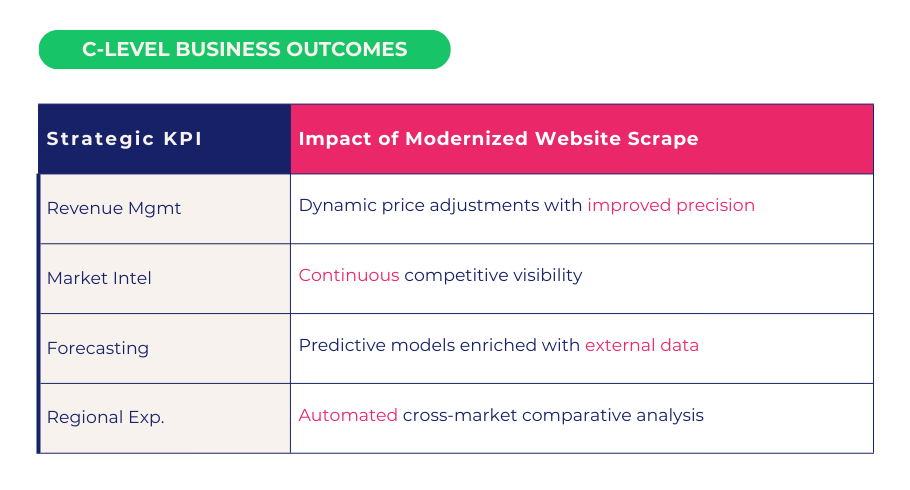

3. The Strategic Impact of Digital Transformation Scraping

Digital transformation scraping is not a marginal technical upgrade. It reshapes executive decision-making velocity and precision. When website scrape infrastructure is fully integrated, its impact becomes visible across three dimensions: speed (reduced time-to-insight), accuracy (decisions based on continuously updated external intelligence), and scale (ability to operate across multiple markets simultaneously).

For C-level executives, the value translates into measurable business outcomes. In highly competitive industries, structured external intelligence, stored and queried in an enterprise data warehouse such as Google BigQuery, often determines margin performance. Website scrape operations move from a technical support function to a strategic competitive lever.

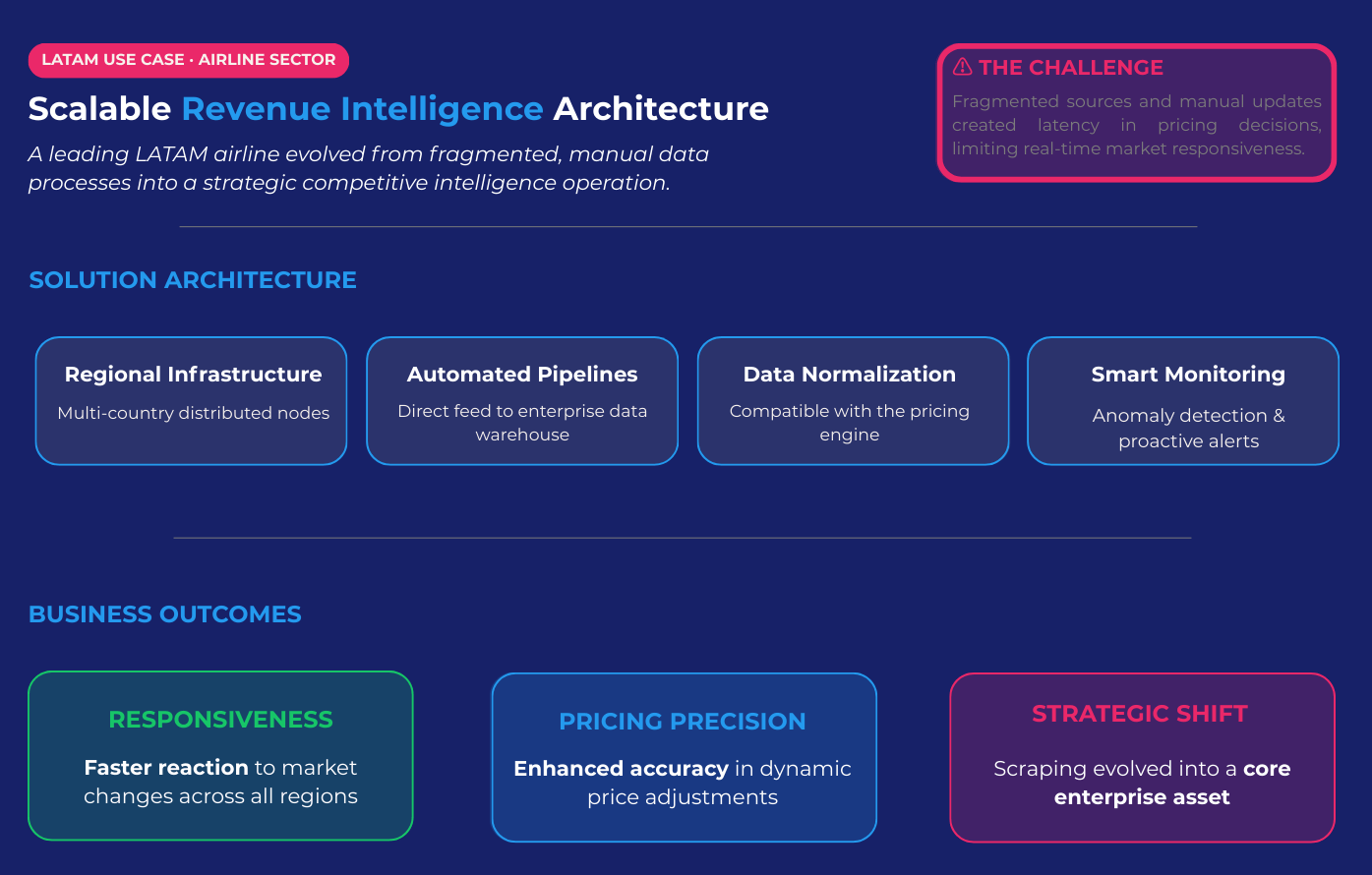

4. LATAM Use Case: Scalable Revenue Intelligence Architecture

A leading LATAM airline needed to transform its competitive monitoring capabilities. Its revenue management processes relied on fragmented external sources and manual updates, creating latency in pricing decisions.

Scraping Pros designed and deployed a website scrape architecture aligned with its digital transformation scraping strategy, including regionally distributed infrastructure for multi-country coverage, automated pipelines into the enterprise data warehouse, advanced normalization compatible with the pricing engine, and continuous monitoring with intelligent anomaly alerts.

The results extended beyond operational efficiency. The airline significantly improved its responsiveness to market changes and enhanced pricing precision. Website scrape operations evolved from a manual support task into a core strategic asset within its enterprise data architecture. For further context, Gartner’s data analytics research consistently highlights this shift as a key differentiator in high-competition industries.

Conclusion: Architecture Before Automation

The distinction between companies that merely extract data and those that lead their markets lies in architecture.

When website scrape operations are embedded within a robust digital transformation scraping strategy, they become critical infrastructure that enables faster, more accurate, and more scalable decisions.

For the modern CTO, the question is no longer technical. It is strategic:

Are we treating website scrape capabilities as isolated tools — or as structural components of our digital transformation?

At Scraping Pros, we believe the answer defines long-term competitive advantage.

Frequently Asked Questions (FAQs)

1. What is website scrape modernization and why does it matter for enterprise digital transformation?

Website scrape modernization refers to redesigning scraping operations as scalable, governed, and fully integrated infrastructure rather than isolated scripts.

For enterprises undergoing digital transformation, modernization ensures that external data flows reliably into core systems (data warehouses, pricing engines, BI tools) with defined SLAs, observability, and compliance controls. Without modernization, scraping becomes a fragile dependency instead of a strategic asset.
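A defined SLA only helps if something checks it. As one possible illustration, the sketch below tests a data-freshness SLA: the function name `freshness_breaches`, the source names, and the six-hour limit are all hypothetical, and a real deployment would feed the result into its existing alerting stack.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List


def freshness_breaches(
    last_success: Dict[str, datetime],
    max_age: timedelta,
    now: datetime,
) -> List[str]:
    """Return the sources whose last successful run is older than the SLA allows."""
    return sorted(
        source
        for source, finished_at in last_success.items()
        if now - finished_at > max_age
    )


if __name__ == "__main__":
    now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
    runs = {
        "competitor-a": now - timedelta(hours=1),
        "competitor-b": now - timedelta(hours=9),
    }
    # With a six-hour SLA, only competitor-b is stale.
    print(freshness_breaches(runs, timedelta(hours=6), now))
```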

2. How does a CTO define an effective website scraping strategy?

A strong CTO web scraping strategy focuses on architecture before automation. It should include:

- Distributed and resilient acquisition infrastructure

- Automated data validation and anomaly detection

- Clear governance and data lineage

- Seamless integration with enterprise systems

The objective is not just to collect data, but to transform website scrape operations into reusable, scalable intelligence pipelines aligned with business KPIs.
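Of the four elements listed above, data lineage is the one most often left abstract. One way to make it tangible, offered as a sketch with hypothetical field names, is to attach provenance metadata to every record at extraction time: where it came from, when it was fetched, which parser version produced it, and a hash of the raw content it was derived from.

```python
import hashlib
from datetime import datetime, timezone
from typing import Dict


def with_lineage(
    record: Dict[str, object],
    source_url: str,
    raw_content: str,
    parser_version: str,
    fetched_at: datetime,
) -> Dict[str, object]:
    """Return a copy of the record with a provenance block under "_lineage"."""
    return {
        **record,
        "_lineage": {
            "source_url": source_url,
            "fetched_at": fetched_at.isoformat(),
            "parser_version": parser_version,
            # The hash lets auditors tie the record back to the exact page content.
            "content_sha256": hashlib.sha256(raw_content.encode("utf-8")).hexdigest(),
        },
    }


if __name__ == "__main__":
    enriched = with_lineage(
        {"sku": "A1", "price": 10.5},
        source_url="https://example.com/a",
        raw_content="<html>price=10.5</html>",
        parser_version="1.0.0",
        fetched_at=datetime(2024, 1, 1, tzinfo=timezone.utc),
    )
    print(enriched["_lineage"]["fetched_at"])
```

With this block in place, any downstream figure can be traced back to a specific page, time, and parser release.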

3. How can website scrape operations integrate with legacy systems?

Website scrape integration with legacy systems should be handled through APIs, event-driven pipelines, or middleware layers that decouple acquisition from consumption.

Rather than replacing legacy platforms, modernization connects structured external intelligence into ERP, pricing engines, BI dashboards, and analytics environments — minimizing disruption while increasing strategic value.
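A middleware layer for a legacy platform is often just a small adapter that reshapes structured records into whatever the older system already accepts. The sketch below assumes, purely for illustration, a legacy system that imports semicolon-delimited flat files with the columns `SKU;PRICE;CURRENCY`; the function name and column layout are hypothetical.

```python
import csv
import io
from typing import Dict, Iterable

LEGACY_COLUMNS = ["SKU", "PRICE", "CURRENCY"]  # hypothetical legacy import layout


def to_legacy_csv(records: Iterable[Dict[str, object]]) -> str:
    """Render normalized records as a flat file the legacy system can ingest."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, delimiter=";", lineterminator="\n")
    writer.writerow(LEGACY_COLUMNS)
    for record in records:
        # Fields the legacy layout does not know about are simply left out.
        writer.writerow([record["sku"], f"{record['price']:.2f}", record["currency"]])
    return buffer.getvalue()


if __name__ == "__main__":
    print(to_legacy_csv([{"sku": "A1", "price": 10.5, "currency": "USD"}]))
```

The legacy platform stays untouched; only the adapter changes when the scraping side evolves.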

4. What business impact can digital transformation scraping generate?

When properly implemented, digital transformation scraping can directly impact:

- Revenue optimization through dynamic pricing

- Competitive intelligence in near real time

- Improved forecasting accuracy

- Faster executive decision-making

The strategic value lies in reducing time-to-insight and increasing decision precision across markets.

5. How do you ensure compliance and risk mitigation in enterprise website scrape projects?

Enterprise-grade website scrape initiatives must incorporate compliance-by-design principles, including clear data governance policies, legal review aligned with frameworks like GDPR, transparent data lineage and traceability, and security controls aligned with enterprise standards. Website scraping modernization is not just a technical exercise; it is a controlled, governed process aligned with corporate risk frameworks.
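Compliance-by-design can be enforced in code as well as in policy documents. The following sketch is one hypothetical way to do that, not legal guidance: each source must be registered with an approved legal review before any record from it is accepted, and only explicitly allowed fields pass through. The names `SourcePolicy`, `PolicyViolation`, and `enforce_policy` are assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet


@dataclass(frozen=True)
class SourcePolicy:
    """Governance decision recorded for one data source."""
    legal_review_approved: bool
    allowed_fields: FrozenSet[str]


class PolicyViolation(Exception):
    """Raised when a record comes from a source that is not cleared for use."""


def enforce_policy(
    source: str,
    record: Dict[str, object],
    registry: Dict[str, SourcePolicy],
) -> Dict[str, object]:
    """Reject unapproved sources and strip fields outside the allowlist."""
    policy = registry.get(source)
    if policy is None or not policy.legal_review_approved:
        raise PolicyViolation(f"source {source!r} has no approved legal review")
    return {key: value for key, value in record.items() if key in policy.allowed_fields}
```

Placing this gate between acquisition and storage means an unreviewed source fails loudly instead of silently feeding the warehouse.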