In today’s digital economy, external data is no longer an optional asset—it is the engine of strategic decision-making. From market intelligence to supply chain visibility, public web data has become the backbone of modern data infrastructure. As a result, web scraping has evolved from a niche technical practice into a mission-critical business component.

However, this growth brings a new level of scrutiny. Regulators, legal teams, and corporate governance leaders are now closely examining how digital information is collected and utilized. What was once a purely technical process now sits at the critical intersection of transparency, privacy, and corporate responsibility.

For enterprise-scale organizations, the value of data extraction is no longer in question. The real challenge lies in execution: can these operations be run in full alignment with compliance requirements, business ethics, and regulatory expectations? Navigating the evolving legal framework around web scraping is now a prerequisite for any sustainable data strategy.

At Scraping Pros, we see a fundamental shift: the companies successfully scaling their data capabilities are those that treat scraping not as a mere tool, but as a governed capability embedded within their risk management frameworks. Building systems that generate competitive intelligence requires a perspective driven by ethics, ensuring long-term regulatory alignment and operational integrity.

The Evolution of Web Scraping in Enterprise Strategy

Web scraping began as a technical workaround. Developers created scripts capable of collecting information from websites that were originally designed to be read by humans rather than machines. These scripts automated tasks such as aggregating product prices, monitoring news sources, or compiling job listings.

For many years, these applications were relatively small in scale and often operated within isolated teams. But as companies recognized the strategic value of external data, scraping systems grew in complexity and importance.

Today, enterprises use automated data collection to monitor markets in real time. Pricing algorithms rely on competitor data. Investment firms analyze industry signals gathered from public websites. Logistics companies track service availability across thousands of providers. Retailers monitor product assortments across multiple regions.

This transformation has changed the nature of scraping itself. What once functioned as a developer’s script has become an operational capability integrated into enterprise analytics platforms.

With that integration comes responsibility. Corporate leadership increasingly expects every data acquisition method, internal or external, to meet the same standards of governance, accountability, and compliance.

In other words, web scraping is no longer just about extracting information. It is about doing so responsibly.

Why Business Ethics Now Matter in Web Scraping

As digital ecosystems become more interconnected, the ethical dimension of data collection has moved into the spotlight. Organizations must consider not only whether data is accessible but also whether its acquisition respects broader principles of digital responsibility.

This is where the business ethics of scraping enters the conversation.

Ethical scraping practices recognize that websites are part of shared digital infrastructure. Excessive or poorly designed scraping activity can create unnecessary load on external systems or extract information in ways that violate user expectations. The GDPR has been particularly influential in shaping how enterprises approach data responsibility globally.

Responsible organizations therefore adopt guidelines that ensure scraping activities remain proportional, transparent, and aligned with legitimate business purposes.

Several principles tend to guide ethical scraping strategies:

- Purpose limitation. Data should be collected only when it supports a defined business objective.

- Data minimization. Systems should extract only the fields required for analysis rather than capturing entire pages indiscriminately.

- Operational transparency. Companies should maintain internal documentation describing where data comes from and how it is collected.

- Responsible request patterns. Automated systems must avoid generating excessive traffic that could disrupt target websites.
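As a rough illustration of how purpose limitation and data minimization might look in code, the sketch below ties a field allowlist to a stated purpose and drops everything else before storage. The `CollectionPolicy` class, the `minimize` helper, and the field names are hypothetical and chosen only for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CollectionPolicy:
    """Hypothetical policy: a stated purpose plus the fields it justifies."""
    purpose: str
    allowed_fields: frozenset


def minimize(record: dict, policy: CollectionPolicy) -> dict:
    """Keep only the fields the policy allows; everything else is dropped."""
    return {k: v for k, v in record.items() if k in policy.allowed_fields}


# Assumed example policy for a price-monitoring use case.
PRICE_MONITORING = CollectionPolicy(
    purpose="competitor price monitoring",
    allowed_fields=frozenset({"product_id", "price", "availability"}),
)

raw = {
    "product_id": "SKU-1",
    "price": 19.99,
    "availability": "in_stock",
    "reviewer_name": "Jane D.",       # not needed for the stated purpose
    "page_html": "<html>...</html>",  # whole-page capture is discarded
}
clean = minimize(raw, PRICE_MONITORING)
```

Keeping the purpose next to the allowlist means the documentation of why a field is collected travels with the code that collects it.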

These principles reflect a broader shift toward responsible digital conduct. In the same way that companies now evaluate environmental impact or supply chain ethics, they are beginning to evaluate the ethical implications of their data acquisition strategies.

Scraping, in this context, becomes part of a larger conversation about digital market responsibility.

Understanding Web Scraping Compliance

Ethics alone, however, are not sufficient. Organizations must also address the regulatory and legal frameworks that govern data collection practices.

The concept of web scraping compliance refers to the structured processes companies use to ensure that automated data extraction aligns with legal obligations and corporate policies.

Compliance strategies typically begin with an assessment of several key factors:

- the nature of the data being collected

- the accessibility of the source website

- the jurisdictions in which the organization operates

- the intended use of the collected information

Publicly available data may often be collected under certain conditions, but companies must still evaluate how privacy regulations, contractual terms of service, and regional laws influence their operations. For updated guidance on international data regulations, the International Association of Privacy Professionals (IAPP) is a leading reference.

Because these factors vary significantly across markets, global organizations typically implement internal compliance frameworks designed specifically for scraping initiatives.

Such frameworks often include formal approval processes, documentation requirements, and periodic legal reviews.

The goal is not to slow innovation but to ensure that data acquisition activities remain aligned with regulatory expectations as the organization scales.
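One way to make the assessment factors and approval steps in this section concrete is to record them per source and check the record before a job runs. The sketch below is an assumption about how such a record could be shaped; `SourceAssessment`, `is_cleared`, and the 180-day review interval are illustrative and do not come from any specific regulation.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class SourceAssessment:
    """Hypothetical record of the four assessment factors plus approval state."""
    source_domain: str
    data_categories: List[str]    # nature of the data being collected
    publicly_accessible: bool     # accessibility of the source website
    jurisdictions: List[str]      # where the organization operates
    intended_use: str             # intended use of the collected information
    legal_approved: bool = False
    last_review: Optional[date] = None


def is_cleared(a: SourceAssessment, today: date, review_interval_days: int = 180) -> bool:
    """A source is cleared only if approved and reviewed within the interval."""
    if not a.legal_approved or a.last_review is None:
        return False
    return today - a.last_review <= timedelta(days=review_interval_days)


assessment = SourceAssessment(
    source_domain="example.com",
    data_categories=["product prices"],
    publicly_accessible=True,
    jurisdictions=["BR", "MX"],
    intended_use="competitor price monitoring",
    legal_approved=True,
    last_review=date(2024, 1, 10),
)
```

A scheduler could call `is_cleared` before dispatching a job, so that an expired legal review pauses collection automatically instead of relying on someone remembering to check.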

Compliance by Design: Building Responsible Scraping Architectures

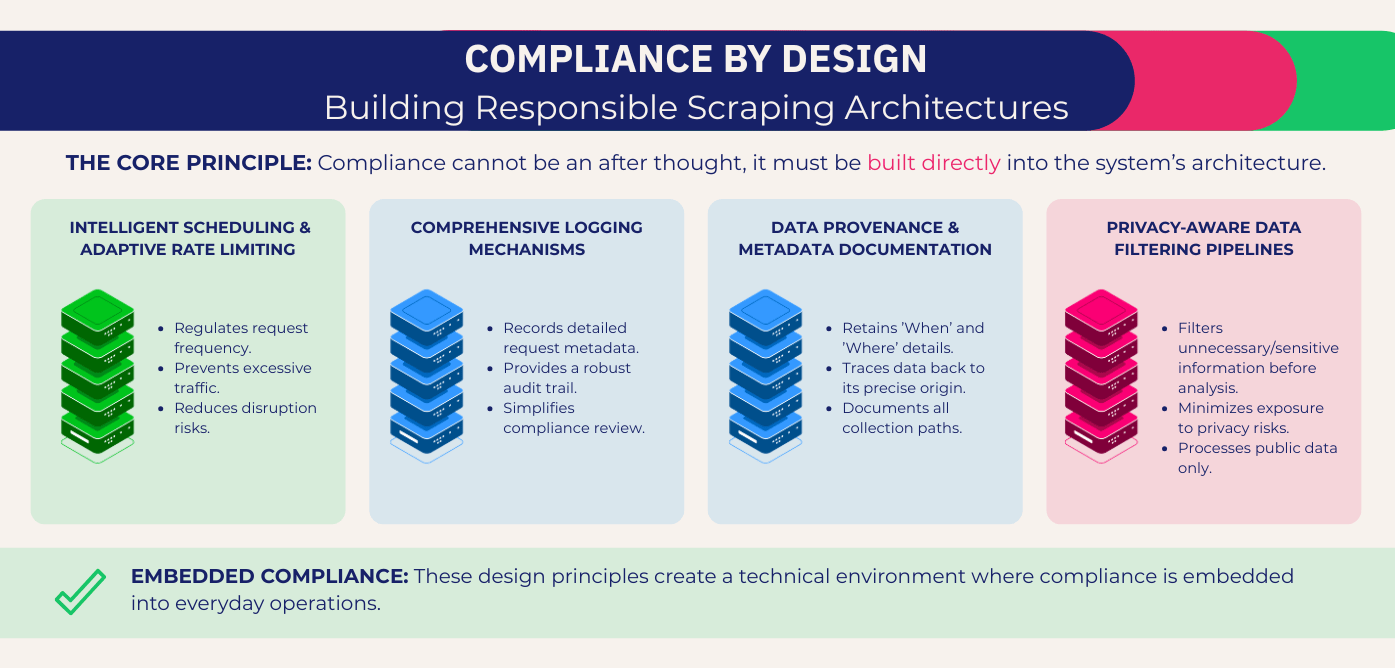

One of the most important lessons organizations learn as their scraping initiatives mature is that web scraping compliance cannot be treated as an afterthought. It must be built directly into the architecture of the system.

A compliance-oriented scraping infrastructure integrates governance principles into its technical design. This approach ensures that operational practices automatically align with policy guidelines rather than relying on manual oversight.

Several architectural elements play an important role in this process.

First, responsible scraping systems regulate how frequently requests are sent to external websites. Intelligent scheduling and adaptive rate limiting prevent excessive traffic and reduce the risk of disrupting target platforms.
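A minimal sketch of adaptive rate limiting, assuming a per-host minimum delay that doubles when the target answers with HTTP 429 or 503 and eases back otherwise. The `PoliteRateLimiter` class and its default values are hypothetical; real systems would tune them per source.

```python
import time


class PoliteRateLimiter:
    """Enforces a minimum delay between requests to one host and widens
    that delay when the host signals overload (illustrative sketch)."""

    def __init__(self, min_interval=1.0, max_interval=60.0,
                 clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        self.max_interval = max_interval
        self._clock = clock      # injectable so the logic can be tested
        self._sleep = sleep
        self._interval = {}      # host -> current delay in seconds
        self._last = {}          # host -> time of the previous request

    def current_interval(self, host):
        return self._interval.get(host, self.min_interval)

    def wait(self, host):
        """Block until the next request to this host is allowed."""
        last = self._last.get(host)
        if last is not None:
            remaining = self.current_interval(host) - (self._clock() - last)
            if remaining > 0:
                self._sleep(remaining)
        self._last[host] = self._clock()

    def record_response(self, host, status_code):
        """Double the delay on 429/503; otherwise halve it toward the minimum."""
        current = self.current_interval(host)
        if status_code in (429, 503):
            current = min(current * 2, self.max_interval)
        else:
            current = max(current / 2, self.min_interval)
        self._interval[host] = current
```

In use, a fetch loop would call `wait(host)` before each request and `record_response(host, status)` after it, so the pace adapts to how the target site is responding.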

Second, enterprise-grade scraping platforms implement detailed logging mechanisms that record every automated request. These logs provide an audit trail that compliance teams can review if questions arise about data sources or collection practices.
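The audit trail could be as simple as one structured log line per request. The sketch below uses Python's standard `logging` and `json` modules; the logger name `scraper.audit`, the `job_id` field, and the entry layout are assumptions for illustration.

```python
import json
import logging
from datetime import datetime, timezone

# Handler and storage configuration are left to the deployment.
audit_logger = logging.getLogger("scraper.audit")


def build_audit_entry(url, method, status_code, job_id, when=None):
    """Assemble one audit record for a single automated request."""
    when = when or datetime.now(timezone.utc)
    return {
        "timestamp": when.isoformat(),
        "job_id": job_id,          # hypothetical identifier of the scraping job
        "method": method,
        "url": url,
        "status_code": status_code,
    }


def log_request(url, method, status_code, job_id, when=None):
    """Write the record as a single JSON line and return it."""
    entry = build_audit_entry(url, method, status_code, job_id, when)
    audit_logger.info(json.dumps(entry, sort_keys=True))
    return entry
```

JSON lines are easy to ship to whatever log store the compliance team already queries, which is the main reason for choosing them here.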

Third, modern architectures emphasize data provenance. Each dataset should retain metadata indicating when and where the information was collected. This documentation makes it possible to trace data back to its origin.
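A provenance envelope might look like the following sketch, which wraps each record with its source, collection time, and extraction method. The `Provenance` dataclass and `with_provenance` helper are hypothetical, and the content hash is an added assumption that makes later tampering or drift detectable.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Provenance:
    source_url: str
    collected_at: str        # ISO 8601 timestamp in UTC
    extraction_method: str   # e.g. the name and version of the parser used
    content_sha256: str      # assumption: fingerprint of the stored record


def with_provenance(record, source_url, extraction_method, collected_at=None):
    """Return the record wrapped together with its provenance metadata."""
    collected_at = collected_at or datetime.now(timezone.utc)
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    prov = Provenance(
        source_url=source_url,
        collected_at=collected_at.isoformat(),
        extraction_method=extraction_method,
        content_sha256=hashlib.sha256(payload).hexdigest(),
    )
    return {"data": record, "provenance": asdict(prov)}
```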

Finally, privacy-aware pipelines filter out unnecessary or potentially sensitive information before it enters analytics systems. By applying these filters early in the extraction process, companies minimize exposure to privacy risks.
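As a simplified example of an early privacy filter, the sketch below redacts email addresses and phone-like number sequences from text fields before a record moves downstream. The regular expressions are deliberately rough assumptions: they will miss some formats and can over-match long numeric strings, so a production pipeline would need patterns reviewed for its own data.

```python
import re

# Rough illustrative patterns, not a complete definition of personal data.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace email addresses and phone-like sequences with placeholders."""
    text = EMAIL_RE.sub("[REDACTED_EMAIL]", text)
    return PHONE_RE.sub("[REDACTED_PHONE]", text)


def scrub_record(record: dict) -> dict:
    """Apply redaction to every string field; leave other types untouched."""
    return {k: redact(v) if isinstance(v, str) else v for k, v in record.items()}
```

Running the filter inside the extraction step, rather than in the analytics layer, means unredacted values are never written to intermediate storage.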

Together, these design principles create a technical environment in which web scraping compliance is embedded into everyday operations.

Governance and the Role of Compliance Officers

As scraping becomes more integrated into enterprise decision-making, compliance officers play an increasingly important role in shaping data acquisition policies.

Traditionally, compliance teams focused primarily on internal financial controls or regulatory reporting. Today, their responsibilities extend into areas such as digital data practices and technology governance.

Web scraping initiatives therefore benefit from cross-functional collaboration between technology leaders and compliance professionals.

Compliance officers help organizations define acceptable data sources, document risk assessments, and establish guidelines for how scraped data can be used within the company. They also ensure that data collection practices remain aligned with broader corporate governance policies.

This collaboration often leads to the creation of web scraping compliance frameworks—structured policies that define the rules governing automated data extraction.

Such frameworks typically address issues such as documentation requirements, legal reviews of data sources, and procedures for responding to regulatory inquiries.

When implemented effectively, these frameworks transform scraping from a loosely managed technical activity into a formally governed business capability. To explore more topics around enterprise data strategy, visit our web scraping blog.

Case Study: Retail Intelligence in Latin America

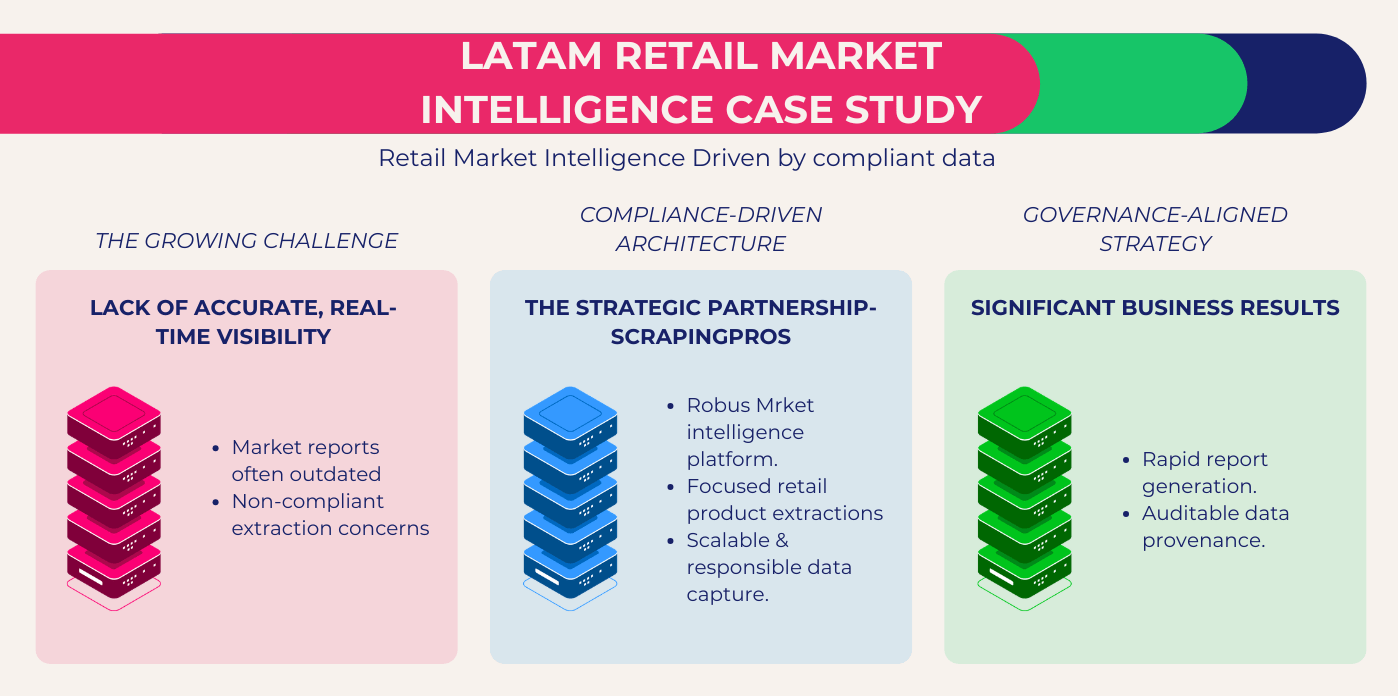

A major retail group operating across several Latin American markets faced a growing challenge. The company needed accurate, real-time visibility into competitor pricing across thousands of products, but manual monitoring had become increasingly impractical.

Each regional market operated different online storefronts, and price changes occurred frequently. By the time analysts compiled weekly reports, the data was often outdated.

The company initially experimented with basic internal scraping tools. While these scripts provided some insights, they lacked the reliability and governance required for enterprise use. Legal and compliance teams were particularly concerned about the lack of documentation surrounding data sources and request patterns.

Scraping Pros partnered with the organization to develop a compliance-oriented market intelligence platform.

The project began with a strategic assessment of the company’s data needs. Instead of collecting entire product pages, the extraction process focused specifically on relevant fields such as product identifiers, price points, and availability indicators.

Next, a scalable scraping architecture was deployed across multiple regional domains. The system incorporated controlled request rates and adaptive scheduling to ensure responsible interaction with external websites.

Every dataset collected through the system included detailed metadata describing the source, timestamp, and extraction method.

Within the first eight months of operation, the results were significant. The platform monitored pricing data from more than 120 competing retailers across five countries, covering over 2 million product records.

Analysts who previously spent days compiling price comparisons could now generate competitive reports in minutes. Internal studies estimated that the automated system reduced manual research time by approximately 65 percent.

Equally important, the company’s compliance team gained full visibility into how external data was being collected. The new infrastructure aligned the retailer’s market intelligence capabilities with its corporate governance standards.

Why Ethical Scraping Strengthens Business Strategy

Organizations sometimes view compliance as a constraint on innovation. In practice, the opposite is often true.

Companies that build strong governance frameworks around their scraping operations gain a significant advantage: they can scale confidently.

When scraping operations are transparent, documented, and ethically designed, executives are more willing to invest in expanding them. Data teams gain access to richer external information, and strategic decisions can be based on more reliable market signals.

Ethical scraping also strengthens relationships with partners and regulators. Companies that demonstrate responsible data practices are less likely to face reputational challenges and more likely to maintain long-term trust within their industries.

In a digital economy where data flows continuously across platforms and borders, trust has become an essential component of competitive advantage.

The Future of Regulatory Web Scraping

Looking ahead, it is clear that scrutiny of digital data practices will continue to grow. Governments around the world are introducing new privacy laws, and regulators are paying closer attention to how companies collect and process publicly available information.

This trend does not mean that scraping will become obsolete. On the contrary, external data will remain vital for business intelligence and market transparency.

What will change is the standard by which scraping systems are evaluated.

Organizations will increasingly be expected to demonstrate that their scraping methods follow responsible guidelines. Documentation, traceability, and compliance oversight will become standard components of enterprise scraping infrastructures.

Companies that adapt early to this reality will find themselves better prepared for future regulatory environments.

Conclusion

Web scraping has evolved into a foundational tool for organizations seeking to understand markets and respond to competitive pressures. Yet as its strategic importance grows, so does the responsibility associated with its use.

The future of automated data extraction will not be defined solely by technological capability. It will also depend on the ethical and compliance frameworks that guide how data is collected.

By implementing web scraping compliance strategies, embedding governance into technical architectures, and aligning data practices with corporate ethics, organizations can transform scraping into a sustainable and trustworthy source of market intelligence.

At Scraping Pros, we believe that responsible data acquisition is the foundation of long-term digital strategy. Companies that invest in ethical and compliant scraping infrastructures today will be the ones best equipped to navigate the data-driven economy of tomorrow.

FAQs: Frequently Asked Questions

1. Is web scraping legal for businesses?

In many jurisdictions, web scraping can be legal when it involves publicly available information and complies with applicable laws and website policies. However, legality often depends on factors such as the type of data collected, the jurisdiction involved, and how the data is used. Organizations should implement structured compliance reviews before launching large-scale scraping initiatives.

2. What is web scraping compliance?

Web scraping compliance refers to the set of legal, technical, and governance practices that ensure automated data collection aligns with regulatory requirements and corporate policies. This typically includes documentation of data sources, controlled request patterns, legal reviews, and clear governance over how collected data is used within the organization.

3. What are ethical web scraping practices?

Ethical web scraping focuses on responsible data collection that respects the stability of external platforms and the broader digital ecosystem. Best practices generally include limiting request frequency, collecting only necessary data fields, maintaining transparency about data sources, and ensuring that scraping activity does not disrupt target websites.

4. Why should compliance officers be involved in web scraping projects?

As scraping becomes a core data acquisition strategy, compliance officers help ensure that automated data collection aligns with regulatory obligations and corporate governance standards. Their involvement reduces legal exposure, improves documentation practices, and creates internal oversight for how external data is obtained and used.

5. How can companies build a compliant web scraping infrastructure?

A compliant scraping infrastructure typically includes technical controls such as rate limiting, request logging, and data provenance tracking. Organizations also implement governance processes such as legal assessments of sources, internal documentation, and cross-team collaboration between engineering, legal, and compliance departments.

6. What risks can arise from poorly managed web scraping?

Without proper governance, scraping initiatives may expose organizations to legal uncertainty, reputational risk, or operational issues if target websites are overwhelmed by automated traffic. Lack of documentation can also make it difficult to demonstrate web scraping compliance if regulators or partners raise questions about data acquisition methods.

7. How does ethical web scraping support long-term business strategy?

Ethical scraping allows organizations to scale their data intelligence capabilities while maintaining trust with regulators, partners, and customers. Companies that embed responsible data practices into their infrastructure can expand their analytics capabilities with greater confidence and lower regulatory risk.

8. Why are enterprises investing more in regulatory web scraping frameworks?

As data becomes central to decision-making, enterprises are recognizing that external data collection must follow the same governance standards as internal data management. Regulatory compliance frameworks for web scraping provide structure, transparency, and risk management, enabling companies to scale market intelligence responsibly.