Serverless Web Scraping: 5 Proven Strategies for ML-Driven Auto-Scaling

Serverless web scraping has fundamentally changed how organizations approach data extraction infrastructure. There is a specific kind of waste that is easy to ignore because it happens quietly, on servers nobody looks at unless something breaks. You provision infrastructure for peak demand. Peak demand arrives maybe 20% of the time. The other 80%, those machines sit warm and ready, billing you for the privilege of their readiness.

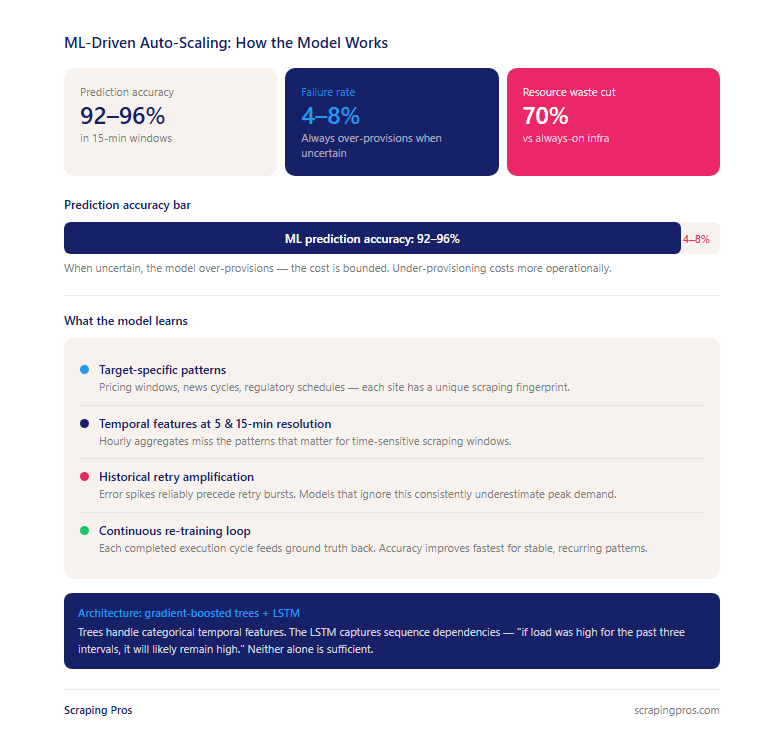

For years that idle capacity was simply the price of being ready. That tradeoff has shifted, not because cloud computing got cheaper (though it did), but because machine learning made it possible to stop reacting to demand and start anticipating it. When your serverless web scraping infrastructure can predict with 92–96% accuracy what load is coming in the next 15 minutes, the economics of always-on servers stop making sense.

1. Serverless Web Scraping: Architecture Benefits for Data Extraction

In a Function-as-a-Service (FaaS) model, serverless web scraping logic executes in response to an event — a queue message, an HTTP trigger, a scheduled invocation — and the execution environment is provisioned on demand and released when execution completes. You are not paying for idle time. You are not managing a worker fleet. Platforms like and handle everything else.

The fundamental pattern is queue-based fan-out. A coordinator function decomposes jobs into individual URLs and writes each to a message queue (, Pub/Sub, Azure Service Bus), and independent worker functions consume from that queue. When 10,000 URLs arrive simultaneously, invocations fan out in parallel up to the platform's concurrency quota (AWS Lambda, for example, defaults to 1,000 concurrent executions per region, which can be raised on request). When the queue drains, they terminate. You pay for exactly those scrapes and nothing more.
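The fan-out pattern can be sketched in-process. This is a minimal illustration, assuming Python's standard-library `queue.Queue` as a stand-in for the managed message queue and a thread pool as a stand-in for parallel function invocations. `coordinator`, `worker`, `drain`, and `scrape` are hypothetical names, and `scrape` returns a placeholder instead of fetching anything.

```python
import queue
from concurrent.futures import ThreadPoolExecutor
from typing import Dict, List


def coordinator(job_id: str, urls: List[str], q: queue.Queue) -> int:
    """Decompose a job into one queue message per URL (hypothetical message shape)."""
    for url in urls:
        q.put({"job_id": job_id, "url": url})
    return len(urls)


def scrape(url: str) -> str:
    # Placeholder: a real worker would fetch and parse the page here.
    return f"scraped:{url}"


def worker(message: Dict[str, str]) -> Dict[str, str]:
    """One invocation: process exactly one message, then exit."""
    return {**message, "result": scrape(message["url"])}


def drain(q: queue.Queue, max_parallel: int = 100) -> List[Dict[str, str]]:
    """Consume all queued messages in parallel, like a queue-triggered function fleet.

    max_parallel plays the role of the platform's concurrency quota.
    """
    messages = []
    while True:
        try:
            messages.append(q.get_nowait())
        except queue.Empty:
            break
    with ThreadPoolExecutor(max_workers=max_parallel) as pool:
        return list(pool.map(worker, messages))


if __name__ == "__main__":
    q: queue.Queue = queue.Queue()
    n = coordinator("job-1", [f"https://example.com/p/{i}" for i in range(10_000)], q)
    results = drain(q, max_parallel=100)
    print(n, len(results))  # 10000 10000
```

In a managed deployment the platform, not a thread pool, decides how many workers run at once; `max_parallel` only models that ceiling.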

Where the complexity lives: stateless execution means you cannot rely on in-memory session state between invocations. Authentication tokens, cookies, and browser fingerprints must be stored externally (typically or DynamoDB with a short TTL) and retrieved at the start of each invocation. Platform time limits (AWS Lambda caps execution at 15 minutes) require long-running browser jobs to be broken into checkpointed stages. This takes more code than a single script, but the result is infrastructure that is intrinsically retryable and observable.
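Both patterns, externalized session state with a TTL and checkpointed stages, can be sketched briefly. `TTLStore` is a hypothetical in-memory class that only mimics the get/set-with-expiry semantics of an external key-value store. In production the state must live in that external store, because process memory is not guaranteed to survive between invocations. `run_stages` resumes after the last checkpointed stage, so a job cut off by the time limit can be re-invoked and continue where it stopped.

```python
import time
from typing import Callable, Dict, List, Optional, Tuple


class TTLStore:
    """In-memory stand-in for an external key-value store with per-key expiry (hypothetical)."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._data: Dict[str, Tuple[object, float]] = {}
        self._clock = clock

    def set(self, key: str, value: object, ttl_seconds: float) -> None:
        self._data[key] = (value, self._clock() + ttl_seconds)

    def get(self, key: str) -> Optional[object]:
        item = self._data.get(key)
        if item is None:
            return None
        value, expires_at = item
        if self._clock() >= expires_at:
            del self._data[key]  # expired: behave as if the key never existed
            return None
        return value


def run_stages(job_id: str, stages: List[Callable[[], None]], store: TTLStore,
               ttl_seconds: float = 3600) -> int:
    """Run stages in order, resuming after the last checkpointed stage.

    Returns the number of stages executed in this call. If a stage raises
    (for example, because the invocation is about to hit its time limit),
    the checkpoint still records every stage that completed before it.
    """
    key = f"checkpoint:{job_id}"
    start = store.get(key) or 0
    ran = 0
    for index in range(start, len(stages)):
        stages[index]()
        store.set(key, index + 1, ttl_seconds)  # checkpoint after each completed stage
        ran += 1
    return ran


if __name__ == "__main__":
    store = TTLStore()
    store.set("session:example.com", {"cookie": "abc"}, ttl_seconds=300)
    print(store.get("session:example.com"))  # {'cookie': 'abc'}
```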

Our is built on this serverless-first architecture, enabling clients to run enterprise-scale extractions without managing infrastructure.

2. ML-Driven Auto-Scaling Predictions: How the Model Works

Traditional auto-scaling is reactive: CPU hits 70%, and a new instance spins up. By the time the new capacity is online, the spike has either subsided or already degraded performance. For bursty, time-sensitive scraping workloads (pricing data before market open, inventory during a flash sale, news feeds during a breaking event), reactive scaling is consistently late.

Predictive scaling learns the patterns in your workload and provisions capacity before demand arrives. According to , proactive capacity management reduces latency spikes by up to 40% compared to reactive approaches.
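The timing difference shows up even in a toy simulation. The sketch assumes capacity requested at one interval only comes online at the next. The reactive policy sizes capacity from the load it just observed; the predictive policy sizes it from a forecast of the next interval. The forecast used here is simply the true next value, an idealized stand-in for a model, so the example isolates the effect of lead time rather than model quality.

```python
from typing import List


def simulate(load: List[int], forecast: List[int], predictive: bool) -> int:
    """Return total unserved load (work that exceeded available capacity).

    forecast[t] is the prediction for load[t + 1]. Capacity decided at step t
    is only available at step t + 1 (provisioning lag).
    """
    capacity = load[0]
    unserved = 0
    for t in range(len(load)):
        unserved += max(0, load[t] - capacity)
        # Decide the capacity that will be online at step t + 1.
        capacity = forecast[t] if predictive else load[t]
    return unserved


if __name__ == "__main__":
    load = [10, 10, 50, 50, 10]           # a burst at steps 2-3
    forecast = load[1:] + [load[-1]]      # idealized: perfect one-step-ahead forecast
    print(simulate(load, forecast, predictive=False))  # 40
    print(simulate(load, forecast, predictive=True))   # 0
```

The reactive policy loses the entire first interval of the burst; with a perfect forecast, the predictive policy loses nothing. Real forecasts are imperfect, which is why the error tolerance discussed below matters.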

The features that drive forecast accuracy for scraping workloads differ from those of a general-purpose API:

- Target-specific patterns: Each website has its own rhythm (pricing update windows, regulatory publication schedules, news-cycle bursts) that determines when it is worth scraping and how much load that implies.

- Temporal features at multiple resolutions: 5- and 15-minute intervals within the hour reveal patterns that hourly aggregates obscure.

- Historical retry rates: Elevated error periods reliably precede retry bursts. A model that ignores retry amplification consistently underestimates peak demand.
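The three feature families above can be sketched as one feature-extraction function. This is illustrative only, not the production feature set: the function name, the input shape (a list of recent per-5-minute retry rates), and the specific encodings are assumptions.

```python
from datetime import datetime
from typing import Dict, List, Union


def make_features(target: str, ts: datetime, retry_rates: List[float]) -> Dict[str, Union[str, int, float]]:
    """Build one feature row for a (target, time) pair.

    retry_rates: recent per-5-minute retry rates for this target, oldest first
    (hypothetical input; the last 3 values cover the last 15 minutes).
    """
    recent = retry_rates[-3:]
    return {
        # Target-specific: a categorical id lets tree models learn per-site rhythms.
        "target": target,
        # Temporal features at several resolutions.
        "hour": ts.hour,
        "weekday": ts.weekday(),
        "bucket_5m": ts.minute // 5,    # 0..11 within the hour
        "bucket_15m": ts.minute // 15,  # 0..3 within the hour
        # Retry history: elevated recent retries tend to precede retry bursts.
        "retry_rate_5m": recent[-1],
        "retry_rate_15m": sum(recent) / len(recent),
        "retry_trend": recent[-1] - recent[0],
    }
```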

In production at Scraping Pros, the model combines gradient-boosted trees for categorical temporal features with a lightweight LSTM layer for sequence dependencies. Prediction accuracy runs 92–96% in 15-minute windows.

On the 4–8% error rate: The failure modes are not symmetric. Under-provisioning creates queue backlog, increases latency, and causes time-sensitive scrapes to miss their windows. Over-provisioning wastes some money but causes no operational harm. The model is therefore deliberately tuned to over-provision when uncertain.
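One standard way to bias a forecaster toward over-provisioning is to train it on a quantile (pinball) loss instead of squared error, so that under-forecasts cost more than over-forecasts. The text does not say which mechanism is used in production; this is an illustrative sketch with an assumed quantile of 0.9, plus a simpler alternative that adds a safety margin equal to a high quantile of recent forecast errors.

```python
import math
from typing import List


def pinball_loss(actual: float, predicted: float, tau: float = 0.9) -> float:
    """Quantile loss: under-forecasts cost tau per unit, over-forecasts cost (1 - tau)."""
    diff = actual - predicted
    return tau * diff if diff >= 0 else (tau - 1) * diff


def provisioned_capacity(point_forecast: float, recent_errors: List[float], tau: float = 0.9) -> int:
    """Point forecast plus a margin equal to the tau-quantile of recent (actual - forecast) errors."""
    if not recent_errors:
        return math.ceil(point_forecast)
    errors = sorted(recent_errors)
    rank = max(0, math.ceil(tau * len(errors)) - 1)  # nearest-rank quantile
    margin = max(0.0, errors[rank])                   # never provision below the forecast
    return math.ceil(point_forecast + margin)
```

With tau = 0.9, missing demand by 10 units costs 9 while over-provisioning by 10 costs 1, which mirrors the asymmetry described above.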

3. Cost Efficiency Analysis

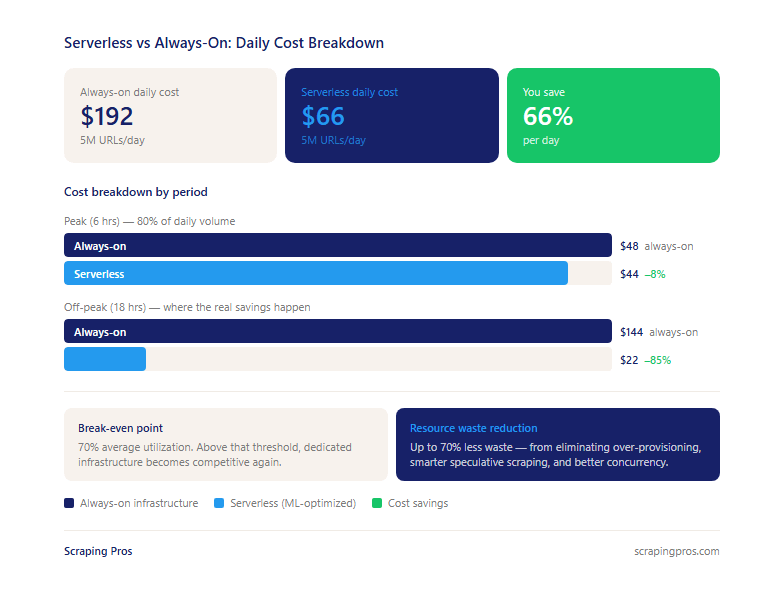

For workloads with defined peak windows, serverless web scraping saves 60–75% compared to always-on infrastructure. The mechanism is simple: you pay only for compute actually used.

For a typical operation scraping 5 million URLs/day with 80% of volume in a 6-hour window:

| Period | Always-on | Serverless | Difference |
|---|---|---|---|
| Peak (6 hrs) | $48 | $44 | –8% |
| Off-peak (18 hrs) | $144 | $22 | –85% |
| Daily total (24 hrs) | $192 | $66 | –66% |

Off-peak savings dominate. The break-even is approximately 70% average utilization — above that, dedicated infrastructure starts to compete. Most real-world scraping operations run well below 70%.
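The table's percentages, and the break-even claim, can be checked with a simplified cost model. The model is an assumption for illustration: always-on cost is a flat hourly rate (the table implies $8/hour, since $48 over 6 hours and $144 over 18 hours both work out to $8), while serverless cost scales linearly with utilization. Under that model the break-even utilization is the ratio of the two rates, so a 70% break-even implies a fully used serverless hour costs roughly 1/0.7 ≈ 1.43× the always-on rate.

```python
def pct_change(always_on: float, serverless: float) -> float:
    """Percentage change from always-on to serverless cost (negative means savings)."""
    return (serverless - always_on) / always_on * 100


def break_even_utilization(always_on_hourly: float, serverless_full_hourly: float) -> float:
    """Utilization u where u * serverless_full_hourly equals the flat always-on rate.

    Simplified model (assumption): always-on cost is flat, serverless cost is linear in usage.
    """
    return always_on_hourly / serverless_full_hourly


# Daily figures from the table above, in USD.
rows = {"Peak (6 hrs)": (48, 44), "Off-peak (18 hrs)": (144, 22)}
total_always_on = sum(a for a, _ in rows.values())
total_serverless = sum(s for _, s in rows.values())

if __name__ == "__main__":
    for name, (a, s) in rows.items():
        print(f"{name}: {pct_change(a, s):.0f}%")   # -8%, then -85%
    print(f"Daily total: ${total_always_on} vs ${total_serverless} "
          f"({pct_change(total_always_on, total_serverless):.0f}%)")  # $192 vs $66 (-66%)
    # 11.43 is a hypothetical serverless full-usage hourly rate, not a quoted price.
    print(f"{break_even_utilization(8.0, 11.43):.2f}")  # 0.70
```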

Hidden costs to factor in: egress fees for high-volume HTML extraction, session store infrastructure (Redis or equivalent), and retry amplification in queue costs. After accounting for all of them, the 60–75% savings figure holds for typical workloads. Resource waste reduction reaches up to 70% — driven by eliminating over-provisioning, more disciplined speculative scraping, and better concurrency utilization.
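Retry amplification is easy to underestimate. A sketch under a simplifying assumption: each attempt fails independently with probability p, and a message is retried up to r times. The expected number of attempts per URL is then the geometric sum 1 + p + p^2 + ... + p^r = (1 − p^(r+1)) / (1 − p). Real failures tend to be correlated (a target that blocks one request often blocks the next), so this model likely understates amplification in practice.

```python
def expected_attempts(p_fail: float, max_retries: int) -> float:
    """Expected attempts per message with independent failures and capped retries.

    Attempt k (0-based) happens only if the previous k attempts all failed,
    which has probability p_fail ** k.
    """
    if p_fail == 1.0:
        return float(max_retries + 1)
    return (1 - p_fail ** (max_retries + 1)) / (1 - p_fail)


def daily_invocations(urls_per_day: int, p_fail: float, max_retries: int) -> float:
    return urls_per_day * expected_attempts(p_fail, max_retries)


if __name__ == "__main__":
    # Hypothetical inputs: 5M URLs/day, 10% per-attempt failure rate, up to 3 retries.
    print(f"{expected_attempts(0.10, 3):.4f}")             # 1.1110
    print(f"{daily_invocations(5_000_000, 0.10, 3):,.0f}")  # 5,555,000
```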

For clients running or , this cost structure makes continuous scraping economically viable at scales that were previously prohibitive.

4. Cold Start Latency: The Problem Benchmarks Don’t Capture

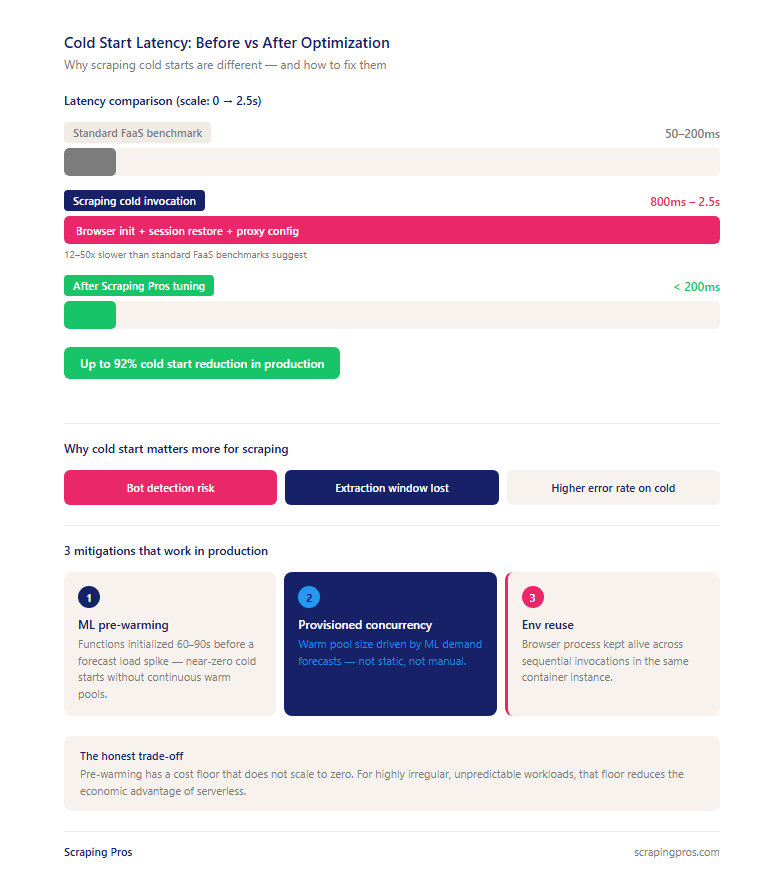

Standard FaaS benchmarks measure cold start for lightweight functions: typically 50–200ms. Scraping functions are not lightweight. Initializing a headless Chromium instance, restoring session state from an external store, and configuring proxy routing adds 800ms–2.5s per cold invocation.

This matters for three reasons: some websites implement session validation that detects slow browser initialization; cold starts consume a meaningful fraction of time-sensitive extraction windows; and cold invocations have measurably higher bot-detection rates than warm ones.

The three mitigations that work in production:

- ML-predicted pre-warming: The scaling model triggers function initialization 60–90 seconds before a forecast load increase — near-zero cold starts without the cost of continuous provisioned concurrency.

- Provisioned concurrency: A pre-initialized warm pool sized by ML demand forecasts covers steady baseline load.

- Execution environment reuse: Keeping the browser process alive across sequential invocations within the same container means each new invocation that lands on a reused container skips browser startup, even though the invocation itself is new.

Combined, these bring cold start impact below 200ms for the majority of production invocations.
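Execution environment reuse usually comes down to lazy initialization at module scope. The sketch assumes a Python function runtime that, like AWS Lambda, keeps module-level globals alive between invocations served by the same warm container. `FakeBrowser` is a hypothetical stand-in for a headless Chromium handle, and `prewarm_at` is a hypothetical helper illustrating the 60–90 second pre-warming lead; neither is a real library API.

```python
from datetime import datetime, timedelta
from typing import Optional


class FakeBrowser:
    """Hypothetical stand-in for a headless browser handle; counts expensive launches."""

    launches = 0

    def __init__(self) -> None:
        # A real headless browser launch is where the 800 ms-2.5 s startup cost goes.
        FakeBrowser.launches += 1

    def is_alive(self) -> bool:
        return True

    def fetch(self, url: str) -> str:
        return f"<html>{url}</html>"


# Module scope: on platforms that reuse warm containers, this survives between invocations.
_browser: Optional[FakeBrowser] = None


def get_browser() -> FakeBrowser:
    """Launch on first use in this container, then reuse while the process stays alive."""
    global _browser
    if _browser is None or not _browser.is_alive():
        _browser = FakeBrowser()
    return _browser


def handler(event: dict, context: object = None) -> str:
    return get_browser().fetch(event["url"])


def prewarm_at(forecast_spike: datetime, lead_seconds: int = 75) -> datetime:
    """When to fire warm-up invocations ahead of a forecast load increase.

    75 s is an assumed midpoint of the 60-90 s lead time described above.
    """
    return forecast_spike - timedelta(seconds=lead_seconds)
```

Three sequential calls to `handler` in the same process launch the browser once; only the first pays the startup cost.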

5. Production Architecture: A Reference Design

Ingestion and coordination: A coordinator service decomposes scraping jobs into individual work units — each URL becomes a queue message with metadata: target identifier, session requirements, priority, freshness deadline, retry count.
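A sketch of that work-unit message as a frozen Python dataclass serialized to JSON. The field names, types, and the "lower number is more urgent" priority convention are assumptions chosen to mirror the metadata listed above; they are not a published schema.

```python
import json
from dataclasses import asdict, dataclass, replace
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class WorkUnit:
    """One queue message per URL. Field names are illustrative, not a published schema."""

    url: str
    target_id: str            # which site the URL belongs to (drives per-target policies)
    needs_session: bool       # whether the worker must restore login/cookie state first
    priority: int             # assumed convention: lower number = more urgent
    freshness_deadline: str   # ISO-8601 timestamp with offset; results after this are stale
    retry_count: int = 0

    def to_message(self) -> str:
        return json.dumps(asdict(self))

    @classmethod
    def from_message(cls, body: str) -> "WorkUnit":
        return cls(**json.loads(body))

    def is_expired(self, now: Optional[datetime] = None) -> bool:
        now = now or datetime.now(timezone.utc)
        return now >= datetime.fromisoformat(self.freshness_deadline)

    def retried(self) -> "WorkUnit":
        """Copy with retry_count incremented, for re-enqueueing after a failure."""
        return replace(self, retry_count=self.retry_count + 1)
```

Carrying the deadline in the message lets a worker drop a stale unit without scraping it, and carrying the retry count lets the coordinator cap retries without shared state.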

Scaling prediction: The ML infrastructure optimization model runs as a separate service, consuming queue depth metrics and historical execution data to maintain a rolling 15-minute demand forecast. Training runs on a separate schedule — a bad model update cannot affect in-flight scrapes.
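A minimal sketch of that separation, assuming a hypothetical `ForecastService` that keeps a rolling window of 5-minute queue-depth samples and serves forecasts from whichever model is currently active. A newly trained model is promoted only if it does at least as well as the active one on the same held-out series; otherwise the old model keeps serving. The exponentially weighted average is a placeholder for the real model, not a description of it.

```python
from collections import deque
from typing import Callable, Sequence

Model = Callable[[Sequence[float]], float]


def ewma_model(alpha: float = 0.5) -> Model:
    """Placeholder forecaster: exponentially weighted average of recent samples."""
    def predict(history: Sequence[float]) -> float:
        level = history[0]
        for x in history[1:]:
            level = alpha * x + (1 - alpha) * level
        return level
    return predict


def mean_abs_error(model: Model, series: Sequence[float], window: int) -> float:
    """Average one-step-ahead error of `model` over a held-out series."""
    if len(series) <= window:
        raise ValueError("validation series must be longer than the window")
    errors = [abs(model(series[i - window:i]) - series[i]) for i in range(window, len(series))]
    return sum(errors) / len(errors)


class ForecastService:
    def __init__(self, model: Model, window: int = 3) -> None:
        self.model = model
        self.window = window                  # 3 x 5-minute samples = 15 minutes
        self.samples = deque(maxlen=window)   # oldest samples fall off automatically

    def observe(self, queue_depth: float) -> None:
        self.samples.append(queue_depth)

    def forecast(self) -> float:
        return self.model(list(self.samples)) if self.samples else 0.0

    def try_swap(self, candidate: Model, validation: Sequence[float]) -> bool:
        """Promote the candidate only if it is no worse on held-out data."""
        if mean_abs_error(candidate, validation, self.window) <= mean_abs_error(self.model, validation, self.window):
            self.model = candidate
            return True
        return False
```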

Execution: Worker functions process one URL per invocation. Each function retrieves session state, configures proxy routing, executes the extraction, writes to the output pipeline, updates session state, and acknowledges the queue message.
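The per-invocation sequence can be sketched as a handler whose dependencies are passed in, which keeps the order of operations explicit and testable. Every dependency here (`sessions`, `pick_proxy`, `fetch`, `sink`, `ack`) is a hypothetical stand-in. The queue message is acknowledged only after the output is written and the session state is saved, so a failure at any earlier step leaves the message on the queue for redelivery.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass
class Deps:
    """Hypothetical injected dependencies for one worker invocation."""

    sessions: Dict[str, dict]                               # stand-in for the external session store
    pick_proxy: Callable[[str], str]                        # target_id -> proxy address
    fetch: Callable[[str, str, dict], Tuple[str, dict]]     # (url, proxy, session) -> (html, new session)
    sink: List[dict]                                        # stand-in for the output pipeline
    ack: Callable[[str], None]                              # acknowledge (delete) the queue message


def handle(message: dict, deps: Deps) -> None:
    target = message["target_id"]
    session = deps.sessions.get(target, {})                          # 1. retrieve session state
    proxy = deps.pick_proxy(target)                                  # 2. configure proxy routing
    html, new_session = deps.fetch(message["url"], proxy, session)   # 3. execute the extraction
    deps.sink.append({"url": message["url"], "html": html})          # 4. write to the output pipeline
    deps.sessions[target] = new_session                              # 5. update session state
    deps.ack(message["receipt"])                                     # 6. ack last; earlier failures mean redelivery
```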

Circuit breakers: When a target exceeds a 15% error rate over a 5-minute window, scraping pauses automatically, the IP pool rotates, and the ML model reduces provisioned capacity for that target.
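A minimal sliding-window circuit breaker using the thresholds from the text (more than 15% errors over 5 minutes). The minimum sample size and the pause duration are assumptions added so that a handful of early failures cannot trip the breaker. IP-pool rotation and capacity reduction are represented by a hypothetical `on_trip` callback.

```python
from collections import deque
from typing import Callable, Deque, Tuple


class CircuitBreaker:
    def __init__(self, on_trip: Callable[[], None], threshold: float = 0.15,
                 window_s: float = 300.0, min_requests: int = 20, pause_s: float = 600.0) -> None:
        self.on_trip = on_trip            # e.g. rotate the IP pool, lower provisioned capacity
        self.threshold = threshold        # trip when the error rate exceeds this fraction
        self.window_s = window_s          # 5-minute sliding window
        self.min_requests = min_requests  # assumption: don't trip on tiny samples
        self.pause_s = pause_s            # assumption: how long scraping stays paused
        self.events: Deque[Tuple[float, bool]] = deque()  # (timestamp, was_error)
        self.paused_until = 0.0

    def allow(self, now: float) -> bool:
        """Whether requests to this target may proceed at time `now`."""
        return now >= self.paused_until

    def record(self, now: float, error: bool) -> None:
        self.events.append((now, error))
        while self.events and self.events[0][0] <= now - self.window_s:
            self.events.popleft()  # drop events older than the window
        total = len(self.events)
        errors = sum(1 for _, e in self.events if e)
        if total >= self.min_requests and errors / total > self.threshold and self.allow(now):
            self.paused_until = now + self.pause_s
            self.events.clear()   # start fresh after the pause
            self.on_trip()
```

One breaker instance per target keeps a single misbehaving site from pausing the whole fleet.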

Key observability metrics: Queue depth and queue age matter more than CPU utilization. Per-target success rate exposes problems that aggregates hide. Cold start rate, warm start rate, and retry amplification rate are the leading indicators of cost efficiency and infrastructure health.
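These metrics are straightforward to derive from per-invocation records. A sketch, assuming a hypothetical record shape with `target`, `url`, `ok`, and `cold_start` fields, and a list of enqueue timestamps for messages still waiting in the queue:

```python
from collections import defaultdict
from typing import Dict, List


def per_target_success(records: List[dict]) -> Dict[str, float]:
    """Success rate per target; a healthy aggregate can hide one failing target."""
    totals: Dict[str, int] = defaultdict(int)
    oks: Dict[str, int] = defaultdict(int)
    for r in records:
        totals[r["target"]] += 1
        oks[r["target"]] += r["ok"]  # bool counts as 0 or 1
    return {t: oks[t] / totals[t] for t in totals}


def cold_start_rate(records: List[dict]) -> float:
    return sum(r["cold_start"] for r in records) / len(records)


def retry_amplification(records: List[dict]) -> float:
    """Invocations per distinct URL: 1.0 means no retries at all."""
    return len(records) / len({r["url"] for r in records})


def oldest_message_age(enqueued_at: List[float], now: float) -> float:
    """Queue age: how long the oldest waiting message has been waiting, in seconds."""
    return now - min(enqueued_at) if enqueued_at else 0.0
```

In a sample where one target succeeds 50% of the time and another 100%, the aggregate success rate can still look acceptable, which is why the per-target breakdown is worth computing separately.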

This architecture powers our and at global scale.

6. What Migration Actually Looks Like

Two prerequisites before anything else: externalized session state and sufficient observability (the ML model needs at minimum 2–4 weeks of historical invocation data at 5-minute granularity).
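The data requirement translates into concrete sample counts: one day at 5-minute granularity is 24 × 12 = 288 samples, so 2–4 weeks is 4,032–8,064 samples per series. A readiness check can be sketched as follows, assuming a hypothetical list of sample timestamps per series and an assumed 5% tolerance for missing buckets.

```python
from datetime import datetime, timedelta
from typing import List

SAMPLES_PER_DAY = 24 * 60 // 5  # 288 five-minute buckets per day
STEP = timedelta(minutes=5)


def required_samples(weeks: int) -> int:
    return weeks * 7 * SAMPLES_PER_DAY


def history_ready(timestamps: List[datetime], weeks: int = 2, max_gap_ratio: float = 0.05) -> bool:
    """True if samples cover `weeks` of wall-clock time with few missing 5-minute buckets.

    max_gap_ratio (assumed 5%) is the tolerated fraction of missing buckets.
    """
    if not timestamps:
        return False
    # Snap each timestamp to the start of its 5-minute bucket.
    buckets = {ts.replace(minute=ts.minute - ts.minute % 5, second=0, microsecond=0)
               for ts in timestamps}
    covered = max(timestamps) - min(timestamps) + STEP  # include the last bucket's width
    if covered < timedelta(weeks=weeks):
        return False
    return len(buckets) >= required_samples(weeks) * (1 - max_gap_ratio)
```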

Start with your most predictable, least time-sensitive targets — batch jobs, periodic crawls, archival workloads. Run them serverless for 2–4 weeks while the model trains. Move time-sensitive workloads after the model has demonstrated accuracy on the easier ones.

Realistic timelines:

- Cost reduction appears within the first billing cycle

- Latency improvements for peak workloads lag 4–8 weeks

- Full 70% resource waste reduction is generally realized 6–8 weeks in

According to , organizations that migrate strategically — starting with predictable workloads — achieve full ROI 40% faster than those that migrate all workloads simultaneously.

7. Serverless Web Scraping: The Case for Ephemeral Infrastructure

The always-on model treats compute as an asset you maintain. Serverless web scraping treats it as a process that happens and stops. Teams running always-on infrastructure spend meaningful time on maintenance — capacity planning, instance patching, fleet health monitoring — that has no relationship to the data itself.

The ML infrastructure optimization layer extends this further. Capacity planning becomes a model inference rather than a human judgment call. The model is wrong 4–8% of the time and those errors need to be caught — but the work shifts from constant vigilance to exception-based intervention.

The next frontier is target-aware infrastructure: systems where the ML model learns not just when to scale but how — adjusting execution strategy, concurrency, and session behavior based on what each specific target responds well to.

Scraping Pros builds serverless web scraping infrastructure for organizations that need reliable, scalable, and cost-efficient data extraction at global scale. to learn how we can architect your data pipeline.

Frequently Asked Questions

How much does serverless web scraping cost compared to traditional always-on infrastructure?

Serverless web scraping costs 60–75% less than always-on infrastructure for workloads with defined peak windows. You pay only for compute time actually used. For a typical 5M URLs/day operation with 80% of volume in a 6-hour window, that translates to roughly $66/day serverless vs $192/day always-on. The break-even is around 70% average utilization.

What is ML-driven auto-scaling in web scraping and how accurate is it?

ML-driven auto-scaling predicts scraping demand before it arrives and provisions capacity proactively. Models are trained on per-target traffic patterns, temporal features at 5- and 15-minute intervals, and historical retry rates. In production at Scraping Pros, prediction accuracy runs 92–96% in 15-minute windows.

What causes cold start latency in serverless web scraping and how can it be reduced?

Cold starts in scraping environments add 800ms–2.5s per cold invocation due to headless browser initialization and session state restoration. The three most effective mitigations are ML-predicted pre-warming, provisioned concurrency for baseline demand, and browser process reuse across sequential invocations. Combined, these bring cold start impact below 200ms for the majority of production invocations.

Is serverless architecture suitable for large-scale web scraping in production?

Yes, for most production workloads. Serverless scales horizontally to tens of millions of URLs per day without a worker fleet to manage or coordinate. The exceptions are workloads that run continuously above 70% average utilization, and those with sub-minute freshness requirements where residual cold start variance is unacceptable.

How does serverless web scraping handle anti-bot detection and IP rotation?

Each function invocation retrieves its own session state and proxy configuration at startup, so IP rotation can be configured per invocation at the proxy routing layer. Circuit breaker logic monitors per-target error rates in real time: when a target exceeds a 15% error threshold over 5 minutes, scraping pauses automatically, the IP pool rotates, and the ML model reduces provisioned capacity for that target.