Scrape Meaning in Legal Context: Technical Implementation of Privacy-Preserving Data Extraction

The word "scrape" is often used casually in technology conversations. In developer communities, it typically refers to automated systems that extract information from websites. In business environments, however, the meaning of scrape becomes more complex. When organizations rely on external digital data to guide pricing strategies, monitor competitors, or evaluate markets, scraping moves beyond engineering into the domains of governance, compliance, and corporate responsibility.

This shift has changed the way leading organizations approach web scraping. What began as a technical capability has evolved into a form of infrastructure, one that must operate with legal awareness, privacy safeguards, and sustainable operational practices.

Understanding the scrape meaning in legal contexts is therefore no longer optional. For CTOs, legal teams, and data leaders, it is a foundational element of modern data strategy.

At Scraping Pros, we have seen firsthand how companies that treat scraping as a governance problem—not just a technical one—are the ones able to scale their data capabilities globally without creating regulatory exposure.

Scrape Meaning: From Technical Term to Legal Concept

Historically, the term scraping emerged from engineering culture. Developers built scripts to extract information from web pages that were originally designed for human reading. These scripts automated repetitive tasks: collecting product prices, aggregating job listings, or monitoring news updates. For a deeper look at how these tools have evolved, see our guide on Web Scraping Tool Evolution.

Technically speaking, the definition remains straightforward: scraping is the automated extraction of information from web interfaces, most commonly from publicly available pages.

But in enterprise environments, that definition is incomplete.

When scraping becomes part of corporate decision-making systems, it intersects with multiple legal and operational frameworks. Data acquisition is no longer just a technical process; it becomes part of the organization’s governance architecture.

The legal interpretation of scraping depends on several contextual elements that determine whether a scraping implementation can be considered responsible and compliant.

Among the most relevant factors are:

- Nature of the data being accessed. Public product information, aggregated statistics, or financial indicators are treated very differently from personal data or user-generated content.

- Method of access. Whether the data is collected from openly accessible pages or from areas that require authentication can change the legal interpretation of scraping activities.

- Purpose of the extraction. Competitive intelligence, market analysis, and research purposes may be evaluated differently from commercial redistribution of data.

- Jurisdiction in which the organization operates. Regulatory frameworks vary significantly between regions such as the United States, Europe, and Latin America.

For global companies operating across multiple markets, this complexity is unavoidable. Privacy laws, data protection rules, and contractual terms of service together create a layered landscape in which scraping must operate carefully.

This is why modern enterprise strategies rarely treat scraping as an isolated tool. Instead, it is integrated into broader data governance programs that define how external information is collected, processed, and used.

The Legal Landscape of Web Scraping

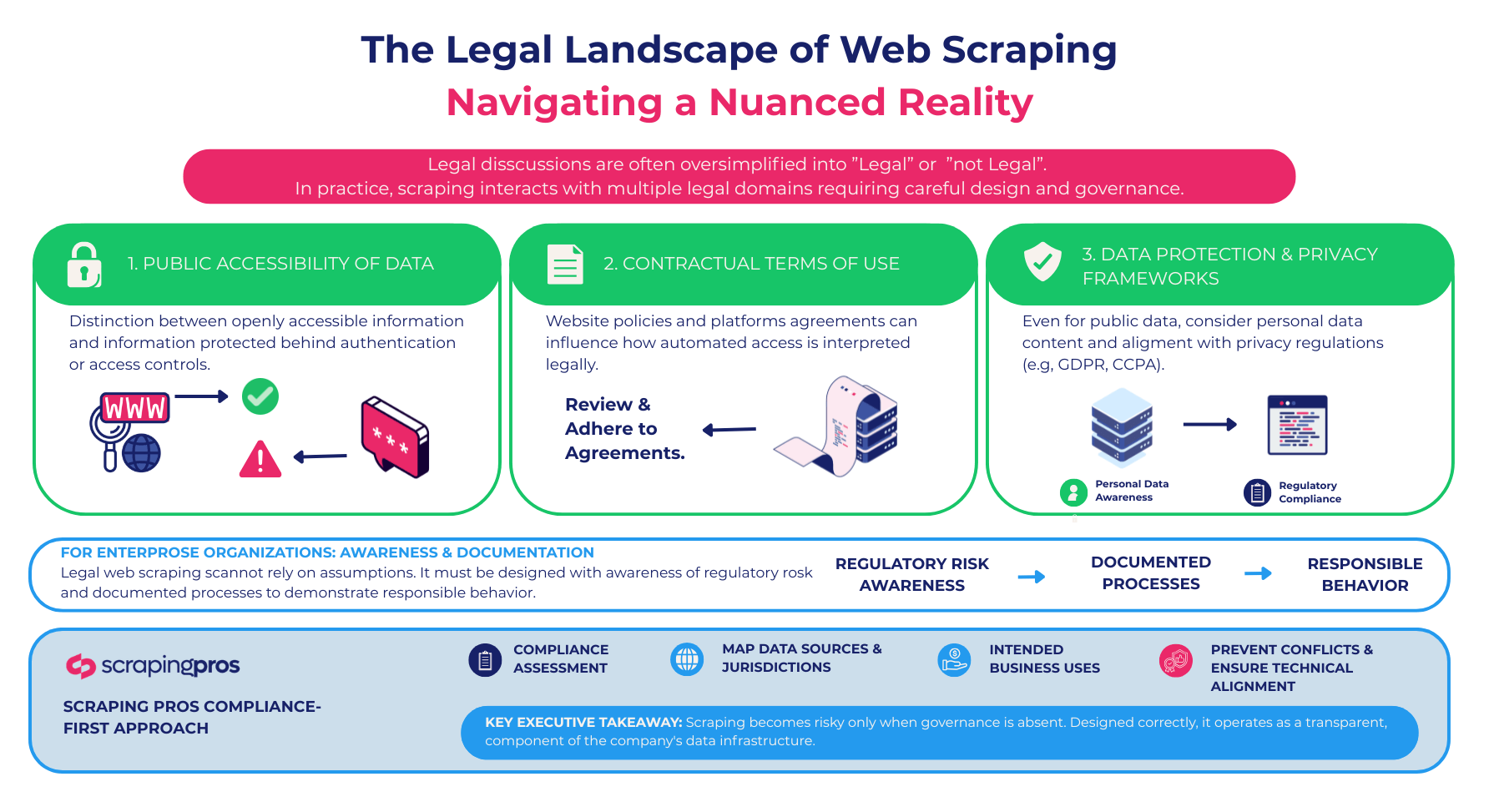

Legal discussions around web scraping are often oversimplified. Public debates tend to frame the issue in binary terms—either scraping is legal, or it is not. In practice, the reality is far more nuanced.

Scraping generally interacts with several legal domains that shape how organizations must design their data collection practices.

Three dimensions are particularly relevant for companies implementing legal web scraping strategies:

- Public accessibility of data. Many legal interpretations distinguish between information that is openly accessible on the web and information protected behind authentication or access controls.

- Contractual terms of use. Website policies and platform agreements can influence how automated access is interpreted from a legal perspective.

- Data protection and privacy frameworks. Even when information is publicly accessible, organizations must consider whether it contains personal data and whether its collection aligns with applicable data protection rules. Under regimes such as the EU General Data Protection Regulation (GDPR), personal data remains protected even when it is publicly available, so the legal analysis of scraping shifts significantly once personal data is involved.

For enterprise organizations, this means that compliant web scraping cannot rely on assumptions. It must be designed with awareness of regulatory risk and documented processes that demonstrate responsible behavior.

At Scraping Pros, projects frequently begin with a compliance assessment that maps data sources, jurisdictions, and intended business uses. This early-stage analysis prevents downstream conflicts and ensures that the technical architecture aligns with the organization’s legal obligations.

The key takeaway for executives is that most scraping risk stems from missing governance. When designed correctly, scraping can operate as a transparent and compliant component of the company’s data infrastructure.

Privacy-Preserving Scraping: The Next Standard

As digital ecosystems mature, the conversation around web scraping is gradually shifting away from basic legality toward a more advanced concept: privacy-preserving data extraction.

In earlier phases of the industry, many scraping initiatives focused on maximizing the volume of collected information. Data was often gathered broadly, with filtering and processing performed afterward.

This model is increasingly outdated. Organizations today are expected to minimize unnecessary data collection and implement safeguards that prevent the handling of sensitive information.

Privacy-preserving scraping architectures incorporate these safeguards at the earliest stages of the pipeline. Instead of collecting everything that appears on a webpage, the system extracts only the fields required for the specific business use case.

For example, a retail intelligence system monitoring competitor prices does not need to collect user reviews, usernames, or customer comments. By designing extraction rules that ignore these elements entirely, organizations reduce privacy exposure while improving data efficiency.
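Field-level allowlisting of this kind can be sketched with Python's standard-library `html.parser`. This is a minimal illustration under stated assumptions, not a production extractor: it assumes the target page marks each field with a hypothetical `data-field` attribute and that each field is a leaf element with no nested tags. The allowlist names (`product_name`, `price`, `currency`) are also illustrative. Anything not on the allowlist, such as a review author, is never written to the output.

```python
from __future__ import annotations

from html.parser import HTMLParser

# Hypothetical allowlist for a competitor price-monitoring use case.
ALLOWED_FIELDS = frozenset({"product_name", "price", "currency"})


class AllowlistExtractor(HTMLParser):
    """Collects text only from elements whose data-field attribute is allowlisted.

    Assumes fields are marked with a (hypothetical) data-field attribute and
    contain no nested tags; real sites need their own selectors.
    """

    def __init__(self, allowed: frozenset[str]) -> None:
        super().__init__()
        self.allowed = allowed
        self.fields: dict[str, str] = {}
        self._current: str | None = None

    def handle_starttag(self, tag: str, attrs: list[tuple[str, str | None]]) -> None:
        field = dict(attrs).get("data-field")
        # Capture starts only for allowlisted fields, so reviews, usernames,
        # and comments never reach the output.
        if field in self.allowed:
            self._current = field
            self.fields.setdefault(field, "")

    def handle_endtag(self, tag: str) -> None:
        self._current = None

    def handle_data(self, data: str) -> None:
        if self._current is not None:
            self.fields[self._current] += data.strip()
```

Usage would be `parser = AllowlistExtractor(ALLOWED_FIELDS)`, then `parser.feed(html)` and `parser.close()`, after which `parser.fields` holds only the allowlisted values. The design choice is that exclusion happens at parse time rather than in a later cleanup step.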

Similarly, privacy-aware pipelines may incorporate early-stage anonymization processes or automated filters that remove identifiers before data enters downstream analytics systems.
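A minimal sketch of such an automated identifier filter follows, assuming free-text fields may contain email addresses or phone numbers. The regular expressions and the function names are illustrative assumptions rather than a complete personal-data detector: they will miss some formats and can also match non-personal numbers, which is why the sketch applies redaction only to fields explicitly designated as free text.

```python
from __future__ import annotations

import re

# Illustrative patterns only: they miss some formats and may match
# non-personal numbers (for example, long dates or product codes).
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact_identifiers(text: str) -> str:
    """Replaces email addresses and phone-like numbers with fixed placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)  # emails first, so their digits are gone
    return PHONE_RE.sub("[PHONE]", text)


def filter_record(record: dict[str, str], free_text_fields: set[str]) -> dict[str, str]:
    """Redacts identifiers in free-text fields before the record moves downstream."""
    return {
        key: redact_identifiers(value) if key in free_text_fields else value
        for key, value in record.items()
    }
```

Structured fields such as SKUs pass through unchanged, while a comment like "call 555-010-2000" becomes "call [PHONE]".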

These practices transform scraping from a broad data acquisition mechanism into a precise, purpose-driven information pipeline. The result is not only stronger compliance but also higher-quality datasets.

In many ways, privacy-preserving scraping mirrors the broader shift toward responsible data governance in the digital economy. Organizations that adopt these methods early are better positioned to navigate evolving regulatory expectations.

Compliant Web Scraping Architecture

Building a legally defensible scraping operation requires more than good intentions. It requires architecture designed to support transparency, traceability, and control.

Enterprise scraping infrastructures typically include several layers that reinforce compliance.

One important component is request governance. Systems must regulate how frequently data is accessed and ensure that automated activity does not create undue pressure on external infrastructures. Rate limiting, adaptive scheduling, and load-aware crawling strategies help maintain responsible interaction with target websites.
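Request governance can start with something as simple as a per-host minimum interval between requests. The sketch below assumes a fixed interval per host; the `PerHostRateLimiter` class and its parameters are hypothetical, and the clock and sleep functions are injectable so the behavior can be tested without real waiting. Adaptive scheduling would extend this idea by lengthening the interval when a site responds slowly or returns errors.

```python
from __future__ import annotations

import time
from collections.abc import Callable
from urllib.parse import urlsplit


class PerHostRateLimiter:
    """Enforces a minimum interval between consecutive requests to the same host."""

    def __init__(
        self,
        min_interval_s: float,
        clock: Callable[[], float] = time.monotonic,
        sleep: Callable[[float], None] = time.sleep,
    ) -> None:
        self.min_interval_s = min_interval_s
        self._clock = clock
        self._sleep = sleep
        self._next_allowed: dict[str, float] = {}  # host -> earliest allowed time

    def wait(self, url: str) -> float:
        """Blocks until a request to the URL's host is allowed; returns seconds waited."""
        host = urlsplit(url).hostname or ""
        now = self._clock()
        delay = max(0.0, self._next_allowed.get(host, now) - now)
        if delay > 0:
            self._sleep(delay)
        # The request happens at now + delay; the next one may follow
        # min_interval_s after that.
        self._next_allowed[host] = now + delay + self.min_interval_s
        return delay
```

A crawler would call `limiter.wait(url)` immediately before each fetch. Because limits are tracked per host, a slow schedule for one site does not hold back requests to others.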

Another critical element is data provenance tracking. Every dataset collected through scraping should retain information about its source, timestamp, and extraction method. This documentation allows organizations to demonstrate where data originated and how it was obtained.
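One way to keep provenance attached to every record is to store it alongside the extracted fields. In this sketch, the `ProvenanceRecord` structure and its field names are assumptions chosen to mirror the source, timestamp, and extraction method described above. The SHA-256 fingerprint of the raw payload is an additional, optional assumption that would let reviewers later confirm which fetched content a record came from.

```python
from __future__ import annotations

import hashlib
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ProvenanceRecord:
    source_url: str
    retrieved_at: str        # ISO 8601 timestamp in UTC
    extraction_method: str   # hypothetical label, e.g. extractor name and version
    content_sha256: str      # fingerprint of the raw payload as fetched


def make_provenance(source_url: str, raw_body: bytes, extraction_method: str) -> ProvenanceRecord:
    """Builds a provenance record at fetch time."""
    return ProvenanceRecord(
        source_url=source_url,
        retrieved_at=datetime.now(timezone.utc).isoformat(),
        extraction_method=extraction_method,
        content_sha256=hashlib.sha256(raw_body).hexdigest(),
    )


def with_provenance(fields: dict[str, str], provenance: ProvenanceRecord) -> dict[str, object]:
    """Stores extracted fields and their provenance together as one record."""
    return {"data": fields, "provenance": asdict(provenance)}
```

Keeping provenance inside the same record, rather than in a separate table, means it travels with the data through every downstream copy.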

Logging mechanisms play a similar role. Detailed request logs create an auditable trail that can be reviewed by compliance teams or regulators if necessary. These records provide transparency and help identify anomalies in scraping activity.
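Request logs are easiest to audit when each entry is a self-describing structured line. The sketch below writes one JSON object per request through Python's standard `logging` module. The logger name, the field names, and the `error_rate` check are hypothetical choices, with the error rate standing in for the kind of simple anomaly signal a compliance team might review.

```python
from __future__ import annotations

import json
import logging
from datetime import datetime, timezone

audit_logger = logging.getLogger("scraper.audit")  # hypothetical logger name


def log_request(job_id: str, url: str, status: int, elapsed_ms: float) -> str:
    """Emits one JSON line per request and returns it."""
    entry = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "job_id": job_id,
        "url": url,
        "status": status,
        "elapsed_ms": round(elapsed_ms, 1),
    }
    line = json.dumps(entry, sort_keys=True)
    audit_logger.info(line)
    return line


def error_rate(lines: list[str]) -> float:
    """Share of logged requests with a 4xx or 5xx status; a rising value merits review."""
    if not lines:
        return 0.0
    errors = sum(1 for line in lines if json.loads(line)["status"] >= 400)
    return errors / len(lines)
```

In practice the logger would be given a handler such as `logging.FileHandler` and set to `logging.INFO`; with Python's default configuration, INFO-level messages are discarded.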

Modern architectures also separate raw extraction from processed datasets. This separation ensures that data cleaning, normalization, and enrichment occur within controlled environments where privacy filters and governance rules can be applied.
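The separation between raw and processed data can be modeled as two zones connected by an explicit promotion step. This is a simplified in-memory sketch: `TwoZonePipeline`, the rule helpers, and the field names are hypothetical, and a real deployment would back each zone with separately access-controlled storage.

```python
from __future__ import annotations

from collections.abc import Callable
from dataclasses import dataclass, field

Record = dict[str, str]
Rule = Callable[[Record], Record]


@dataclass
class TwoZonePipeline:
    """Keeps raw extractions separate from governed, processed records."""

    rules: list[Rule]
    raw_zone: list[Record] = field(default_factory=list)
    processed_zone: list[Record] = field(default_factory=list)
    _promoted: int = field(default=0, init=False, repr=False)

    def ingest(self, record: Record) -> None:
        # Raw zone: stored as extracted; a real system would restrict access here.
        self.raw_zone.append(dict(record))

    def promote(self) -> int:
        """Applies every rule, in order, to new raw records and stores the results."""
        new_records = self.raw_zone[self._promoted:]
        for record in new_records:
            result = dict(record)
            for rule in self.rules:
                result = rule(result)
            self.processed_zone.append(result)
        self._promoted = len(self.raw_zone)
        return len(new_records)


def drop_fields(*names: str) -> Rule:
    """Builds a privacy rule that removes the named fields."""
    def rule(record: Record) -> Record:
        return {k: v for k, v in record.items() if k not in names}
    return rule


def normalize_price(record: Record) -> Record:
    """Removes dollar signs and surrounding whitespace from the price field."""
    if "price" in record:
        record = {**record, "price": record["price"].replace("$", "").strip()}
    return record
```

Because rules run only during promotion, governance logic lives in one place and analytics systems read exclusively from the processed zone.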

When these components work together, scraping becomes part of a broader compliant web scraping framework. Instead of functioning as a hidden technical process, it operates as a transparent and accountable data acquisition layer.

Governance and Risk Management in Enterprise Scraping

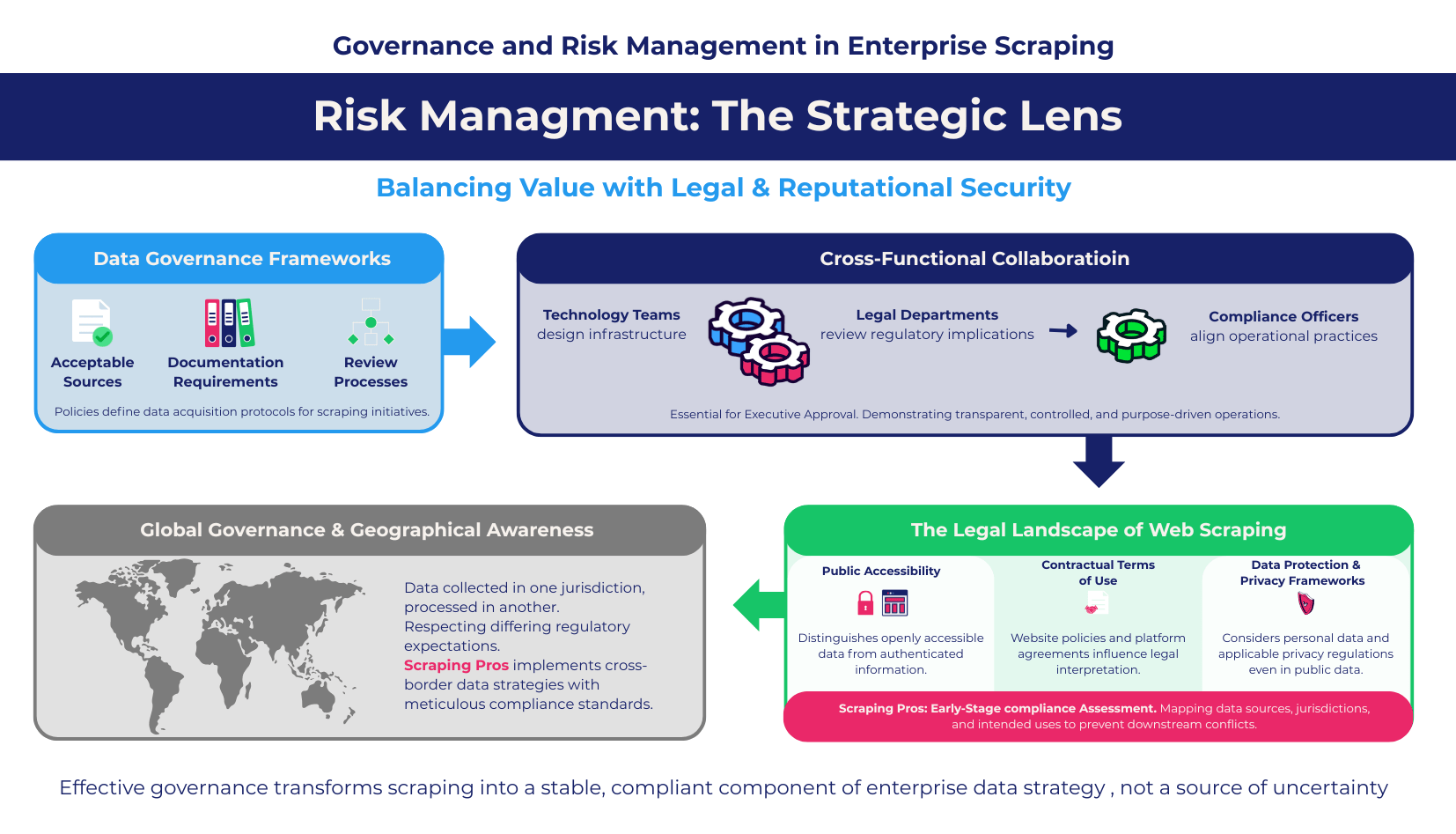

Large organizations evaluate scraping through the lens of risk management. Executives rarely question the value of external data; the strategic importance of market intelligence is widely recognized. What concerns leadership teams is the possibility that poorly designed scraping practices could expose the company to legal or reputational challenges.

This is why data governance frameworks increasingly incorporate policies governing external data acquisition. These policies define acceptable sources, documentation requirements, and review processes for scraping initiatives. Learn more about secure web scraping for enterprise teams and how leading CISOs are structuring these frameworks.
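Source policies become enforceable when crawlers consult them before fetching. The sketch below assumes a hypothetical register of approved hosts, each tied to a jurisdiction, a documented purpose, and the date of its last compliance review. The `SourceRegister` name and the one-year review validity are illustrative defaults, not recommendations.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date
from urllib.parse import urlsplit


@dataclass(frozen=True)
class SourceApproval:
    host: str
    jurisdiction: str           # e.g. "US", "EU", "MX"
    purpose: str                # documented business use
    reviewed_on: date           # date of the last legal/compliance review
    review_valid_days: int = 365


class SourceRegister:
    """Hypothetical register of approved sources, checked before each crawl."""

    def __init__(self, approvals: list[SourceApproval]) -> None:
        self._by_host = {a.host: a for a in approvals}

    def check(self, url: str, purpose: str, today: date) -> tuple[bool, str]:
        host = urlsplit(url).hostname or ""
        approval = self._by_host.get(host)
        if approval is None:
            return False, f"{host} is not an approved source"
        if approval.purpose != purpose:
            return False, f"{host} is approved for '{approval.purpose}', not '{purpose}'"
        if (today - approval.reviewed_on).days > approval.review_valid_days:
            return False, f"review for {host} has expired"
        return True, "approved"
```

Returning a reason string alongside the decision makes each refusal self-documenting, which supports the review processes these policies require.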

In many cases, governance structures involve collaboration between multiple departments. Technology teams design the infrastructure, legal departments review regulatory implications, and compliance officers ensure that operational practices align with corporate policies.

The presence of these cross-functional processes often determines whether a scraping project receives executive approval. When the organization can demonstrate that scraping operations are transparent, controlled, and purpose-driven, the initiative is far more likely to gain institutional support.

For global enterprises, governance also includes geographical awareness. Data collected in one jurisdiction may be processed in another, and regulatory expectations may differ across regions. Ensuring that scraping operations respect these differences requires careful planning.

Scraping Pros frequently works with companies implementing cross-border data strategies, where data collected in Latin America or Europe is integrated into analytics systems hosted in the United States. These environments require meticulous attention to compliance standards and documentation.

When governance frameworks are implemented effectively, scraping becomes a stable component of enterprise data strategy rather than a source of uncertainty.

Case Study: Financial Market Intelligence in Latin America

A fintech company operating across several Latin American markets needed to monitor publicly available information about financial products offered by competing institutions. Interest rates, service fees, and product conditions changed frequently across countries, making manual monitoring unreliable and difficult to scale.

The company initially relied on internal scraping scripts developed by its engineering team. While these tools produced useful datasets, the legal department raised concerns about documentation, regulatory exposure, and the lack of traceability in the extraction process.

Scraping Pros redesigned the system under a privacy-preserving and compliance-oriented architecture. Extraction rules were refined to capture only relevant financial product data while excluding any personal or user-generated information. At the same time, the infrastructure was rebuilt with controlled request patterns and full logging capabilities, ensuring traceability for every dataset collected.

Within the first six months of deployment, the platform delivered measurable results:

- Coverage of more than 250 financial products across five regional markets.

- Reduction of manual competitive research time by 60% for the strategy team.

- 38% decrease in infrastructure consumption thanks to optimized scraping frequency and caching.

The company now operates a regional competitive intelligence platform that aligns market monitoring with internal compliance requirements, enabling faster pricing decisions without increasing regulatory risk.

Case Study: Competitive Benchmarking in the United States

In the United States, Scraping Pros supported a logistics technology firm conducting competitive benchmarking across transportation and delivery service providers. The company needed to track pricing structures, service coverage, and operational changes across hundreds of publicly accessible websites.

Because the insights would inform strategic planning and potential acquisition evaluations, the organization required a data acquisition system capable of withstanding internal audits and compliance review.

Scraping Pros implemented a privacy-preserving web scraping architecture focused on operational transparency. Extraction pipelines were designed to collect only logistics-related information while filtering out any content that could contain personal data. At the same time, a detailed logging framework was deployed to record every automated request and create a verifiable audit trail.

The platform quickly became a strategic resource for the company’s leadership team. Within the first year:

- The system monitored more than 400 logistics service providers across the U.S. market.

- Competitive benchmarking reports were generated 70% faster compared with the previous manual research process.

- Strategy teams gained real-time visibility into pricing changes across key competitors, enabling faster market response.

The project demonstrated how responsible, compliant scraping infrastructure can transform fragmented web data into reliable strategic intelligence for executive decision-making.

The Future of Scraping: Trust as Infrastructure

The role of web scraping in the digital economy is continuing to expand. Organizations across industries rely on external information to understand markets, monitor competitors, and evaluate strategic opportunities.

At the same time, regulatory scrutiny of data practices is increasing. Companies are expected to demonstrate not only that their data is useful but also that it has been collected responsibly.

In this environment, the meaning of scraping is evolving. It is no longer simply a technical capability. It is a component of trusted data infrastructure.

Organizations that treat scraping as an engineering shortcut may struggle to adapt to this new reality. Those that design their systems with compliance, transparency, and privacy in mind will be better positioned to scale their data strategies globally.

The future of web scraping will not be defined by how much data companies can collect, but by how responsibly they collect it.

Conclusion

Understanding the scrape meaning in legal and technical contexts is essential for modern enterprises. As data becomes central to competitive strategy, the processes used to obtain that data must meet the same standards of governance applied to internal systems.

Legal web scraping is not about avoiding innovation. It is about designing innovation responsibly. By implementing privacy-preserving architectures, transparent logging systems, and robust governance frameworks, organizations can transform scraping into a reliable source of market intelligence.

At Scraping Pros, we believe that responsible data acquisition is the foundation of sustainable digital strategy. Companies that invest in compliant and privacy-aware scraping infrastructures today will be the ones capable of navigating tomorrow’s regulatory landscape with confidence.

FAQs: Scrape Meaning and Legal Web Scraping

1. What does “scrape” mean in a legal context?

In a legal context, scraping refers to the automated extraction of information from websites, most often publicly available information. Whether a given scraping activity is lawful depends largely on whether it respects applicable regulations, terms of service, and data protection laws.

2. Is web scraping legal?

Web scraping can be legal when it involves publicly accessible data and is implemented responsibly. Compliance depends on factors such as data type, access method, jurisdiction, and how the information is ultimately used.

3. What is compliant web scraping?

Compliant web scraping refers to data extraction systems designed to operate within legal and regulatory frameworks. This typically includes controlled access patterns, data provenance tracking, logging, and privacy-aware data filtering.

4. What is privacy-preserving web scraping?

Privacy-preserving scraping focuses on collecting only the data necessary for a specific business purpose while avoiding or filtering out personal or sensitive information during the extraction process.

5. Why is data governance important in web scraping?

Data governance ensures that external data collection aligns with corporate compliance standards, privacy regulations, and internal risk management policies. Without governance, scraping initiatives can expose organizations to legal and reputational risks.

6. How do companies ensure scraping compliance across different countries?

Organizations operating globally typically implement compliance-aware architectures, legal reviews of data sources, and detailed documentation of extraction processes to ensure alignment with regional regulations.

7. What industries benefit most from responsible web scraping?

Industries that rely heavily on market intelligence—such as e-commerce, finance, logistics, travel, and real estate—often use responsible scraping practices to monitor pricing, competitors, and market trends.

8. How can enterprises implement ethical and responsible web scraping?

Enterprises typically adopt structured scraping architectures that include controlled request rates, transparent logging, data minimization practices, and privacy filters to ensure responsible data extraction.

9. What is the difference between web scraping and data extraction?

Web scraping is a specific form of data extraction that collects information directly from websites using automated tools. Data extraction is a broader concept that includes retrieving information from many sources such as databases, documents, APIs, and web platforms. In enterprise environments, web scraping is often part of a larger data acquisition strategy used for market intelligence, pricing analysis, and competitive monitoring.

10. How do companies implement privacy-preserving web scraping in practice?

Companies implement privacy-preserving scraping by designing systems that collect only the data required for a defined business purpose. This typically involves filtering out personal information during extraction, limiting data collection fields, applying rate controls to minimize system impact, and maintaining detailed logs to ensure transparency and compliance with privacy regulations.