Kubernetes web scraping represents the evolution of data extraction from simple scripts to enterprise-grade systems. For years, web scraping was approached as a technical shortcut: write a script, extract data, store results. That model worked when volumes were low and sources were stable, but that context no longer exists.

Today, enterprise scraping operates in a radically different environment. Websites are dynamic, defenses evolve continuously, and data must be refreshed in near real time. The challenge is no longer extraction—it is operating a distributed system that interacts with other distributed systems under uncertainty.

The real inflection point appears when organizations move from thousands to millions of requests. At that scale, traditional web scraping services and approaches fail: jobs become brittle, infrastructure costs grow linearly with volume, and failures propagate silently. What emerges is not a tooling problem, but a systems problem.

This is where the concept of cloud-native scraping becomes essential. In this context, Kubernetes web scraping is not an optimization layer; it is the natural foundation for managing complexity by rethinking scraping as:

- A continuously running system, not a scheduled task.
- A distributed workload, not a centralized process.
- A dynamic interaction, not a deterministic execution.

1. Kubernetes as the Control Plane of Enterprise Scraping

Kubernetes is often introduced as a container orchestration platform, but in the context of Kubernetes web scraping, that definition is incomplete. At scale, Kubernetes acts as the control plane of scraping operations. It does not simply run workloads—it governs how those workloads behave under constantly changing external conditions.

A modern scraping system is composed of hundreds or thousands of independent processes: crawlers, parsers, enrichment jobs, and retry mechanisms. Each of these components must operate independently, but also coherently. You can explore more about managing these independent scraping processes in our recent articles.

Kubernetes enables this by transforming scraping tasks into what we define as ephemeral compute units. Each scraping job is:

- Stateless or minimally stateful.
- Disposable and replaceable.
- Isolated from other workloads.
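To make the idea of an ephemeral compute unit concrete, here is a minimal sketch that builds a Kubernetes `batch/v1` Job manifest for a single scraping task. The container image, its `--url` argument, the `scrape-domain` label, and the resource and timeout values are illustrative assumptions, not a prescribed setup; the Job fields themselves (`backoffLimit`, `activeDeadlineSeconds`, `ttlSecondsAfterFinished`, `restartPolicy`) are standard Kubernetes settings.

```python
import json


def build_scrape_job(task_id: str, domain: str, url: str,
                     image: str = "registry.example.com/scraper:latest") -> dict:
    """Return a Kubernetes batch/v1 Job manifest for one scraping task (sketch)."""
    # Job names must be lowercase and cannot contain '_'; this is a minimal
    # normalization for the example, not full name validation.
    safe_id = task_id.lower().replace("_", "-")
    labels = {"app": "scraper", "scrape-domain": domain}  # hypothetical label scheme
    return {
        "apiVersion": "batch/v1",
        "kind": "Job",
        "metadata": {"name": f"scrape-{safe_id}", "labels": labels},
        "spec": {
            "backoffLimit": 3,               # create up to 3 replacement pods on failure
            "activeDeadlineSeconds": 600,    # terminate the Job if it runs longer than 10 min
            "ttlSecondsAfterFinished": 300,  # garbage-collect the finished Job after 5 min
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    # Failed pods are replaced by new ones, never restarted in place.
                    "restartPolicy": "Never",
                    "containers": [{
                        "name": "scraper",
                        "image": image,               # hypothetical image
                        "args": ["--url", url],       # hypothetical scraper CLI
                        "resources": {                # illustrative sizing
                            "requests": {"cpu": "250m", "memory": "256Mi"},
                            "limits": {"cpu": "500m", "memory": "512Mi"},
                        },
                    }],
                },
            },
        },
    }


if __name__ == "__main__":
    job = build_scrape_job("task_42", "shop.example.com", "https://shop.example.com/p/1")
    print(json.dumps(job, indent=2))
```

The output is plain JSON, which `kubectl apply -f` accepts alongside YAML. Because each Job carries everything it needs and holds no shared state, losing one costs a single task, and the label makes it easy to isolate or scale all work for one domain as a group.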

This design has a profound implication: resilience is no longer achieved by preventing failure, but by assuming failure and recovering instantly. Instead of asking “how do we avoid errors?”, the system is designed to answer:

- How fast can we replace a failing process?
- How do we redistribute workload automatically?
- How do we prevent localized failures from scaling system-wide?
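The "assume failure" model can be illustrated in-process. The sketch below is a simplified simulation, not how Kubernetes itself is implemented: a hypothetical `run_with_replacement` supervisor discards a worker whenever it raises, starts a fresh one, and puts the failed task at the back of the queue so other work keeps flowing. A retry cap with a dead-letter list keeps one persistently failing target from consuming the whole system. In a cluster, worker replacement would be handled by the Job or Deployment controller and the queue would be an external service.

```python
from collections import deque
from typing import Callable, Iterable


def run_with_replacement(tasks: Iterable[str],
                         make_worker: Callable[[], Callable[[str], str]],
                         max_attempts: int = 3):
    """Process tasks, replacing the worker on every failure and requeueing the task."""
    queue = deque((task, 1) for task in tasks)   # (task, attempt number)
    results: dict = {}
    dead_letter: list = []
    worker = make_worker()
    replacements = 0

    while queue:
        task, attempt = queue.popleft()
        try:
            results[task] = worker(task)
        except Exception:
            # Treat the worker as unhealthy: throw it away and start a fresh one.
            worker = make_worker()
            replacements += 1
            if attempt < max_attempts:
                # Redistribute: the task goes to the back, behind healthy work.
                queue.append((task, attempt + 1))
            else:
                # Contain the failure instead of retrying forever.
                dead_letter.append(task)

    return results, dead_letter, replacements
```

Requeueing to the back rather than retrying immediately gives a blocked or slow target time to recover, while the dead-letter list answers the third question above: a single bad source stops after `max_attempts` instead of spreading retries system-wide.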

Additionally, Kubernetes web scraping introduces a level of operational separation that is critical: crawling can scale independently from parsing, high-risk domains can be isolated, and resource allocation can be adjusted dynamically based on demand. This transforms scraping from a fragile pipeline into a self-healing, adaptive system.
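Independent scaling of crawling and parsing can be sketched as each stage sizing itself from its own backlog. The hypothetical `StageConfig` throughput figures and replica bounds below are placeholders. In Kubernetes, a rule like this is typically wired to a HorizontalPodAutoscaler driven by an external queue-length metric, or to an event-driven autoscaler such as KEDA, rather than hand-rolled.

```python
import math
from dataclasses import dataclass


@dataclass
class StageConfig:
    name: str
    items_per_replica: int  # backlog one replica can clear per scaling interval (assumed)
    min_replicas: int
    max_replicas: int


def desired_replicas(backlog: int, cfg: StageConfig) -> int:
    """Size one pipeline stage from its own queue backlog, independent of other stages."""
    wanted = math.ceil(backlog / cfg.items_per_replica) if backlog > 0 else 0
    # Clamp to the stage's bounds so an empty queue keeps a warm minimum
    # and a burst cannot exceed the budget for that stage.
    return max(cfg.min_replicas, min(cfg.max_replicas, wanted))


# Illustrative settings: fetching is slower per item than parsing.
crawl = StageConfig("crawl", items_per_replica=500, min_replicas=2, max_replicas=50)
parse = StageConfig("parse", items_per_replica=2000, min_replicas=1, max_replicas=20)
```

With these settings a crawl backlog of 12,000 URLs asks for 24 crawler replicas while a parse backlog of 3,000 pages asks for only 2 parsers: the two stages grow and shrink on their own signals instead of being provisioned together.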

2. Real Orchestration Patterns for Web Scraping

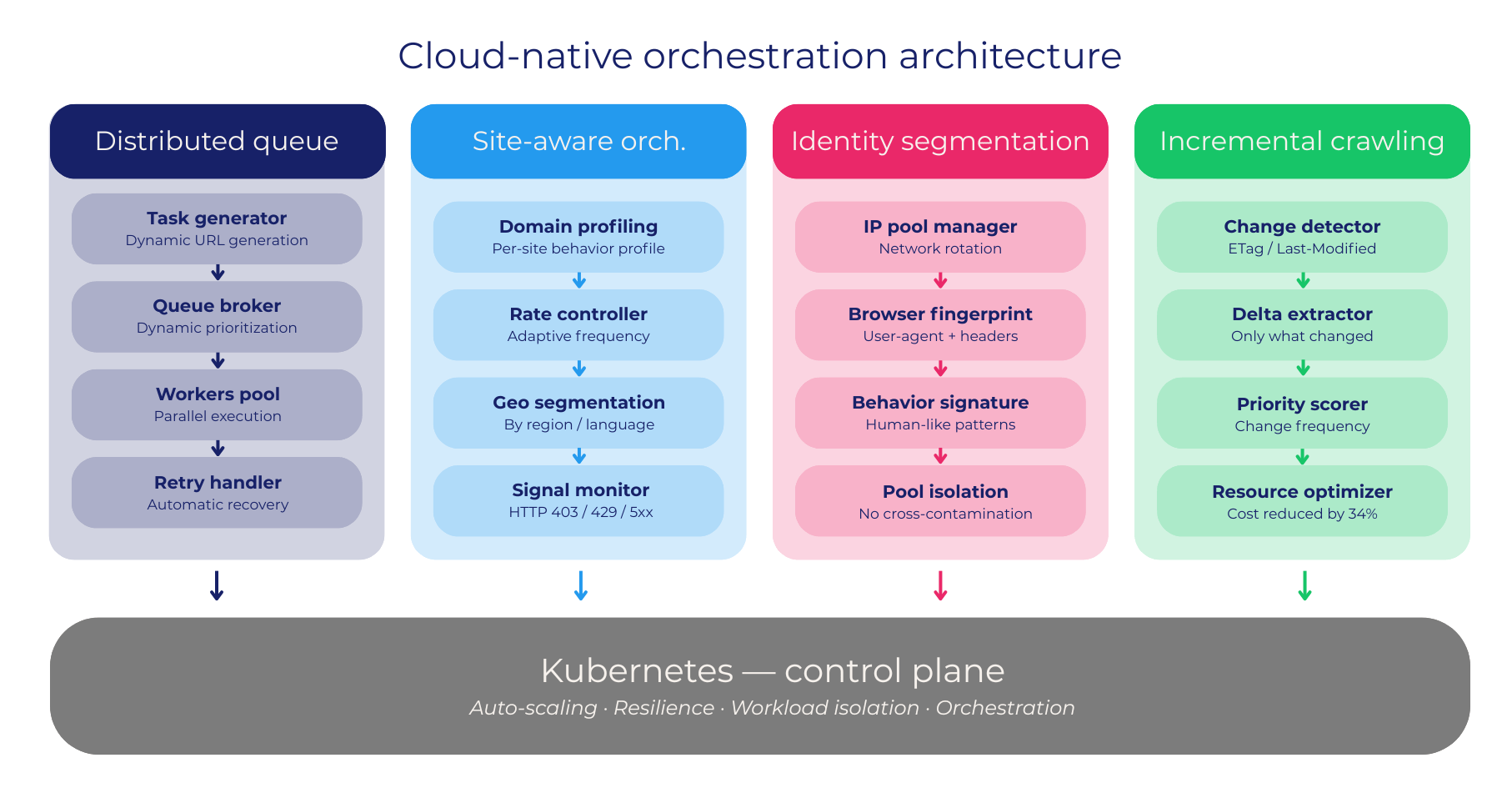

The true value of Kubernetes web scraping is not in deployment, but in orchestration patterns specifically adapted to data extraction challenges. Generic patterns used in web applications are insufficient here. At Scraping Pros, we implement orchestration models designed around the behavior of external systems rather than internal workloads.

- Distributed Crawling Queue Model: Task generation and execution are fully decoupled. Instead of assigning static workloads, the system feeds a queue that dynamically distributes tasks across workers, allowing real-time adaptation to failures and rate limits.
- Site-Aware Orchestration: Workloads are segmented by domain, geography, or behavior profile. This allows the system to apply differentiated strategies, adjusting frequency and concurrency based on how each site responds.
- Identity Segmentation: In high-scale Kubernetes web scraping, identity is a combination of network, browser fingerprint, and behavioral signature. By isolating identity pools per workload, the system avoids cross-contamination and reduces systemic blocking.
- Incremental Crawling Controllers: These prioritize changes over full reloads. Instead of reprocessing entire datasets, the system extracts only what has changed, dramatically reducing resource consumption.
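Here is a minimal in-process sketch of the queue model combined with site-aware limits. Producers submit URLs to one shared queue, workers call `next_task`, and the hypothetical `SiteAwareDispatcher` hands out only tasks whose domain is below its concurrency cap. Failed tasks go back into the queue instead of being pinned to a worker. In production the queue would live in an external broker and workers would be pods; the per-domain limits shown are assumed values.

```python
from collections import deque
from typing import Dict, Optional
from urllib.parse import urlsplit


class SiteAwareDispatcher:
    """Shared task queue that enforces a per-domain concurrency cap."""

    def __init__(self, domain_limits: Dict[str, int], default_limit: int = 2) -> None:
        self.queue: deque = deque()
        self.in_flight: Dict[str, int] = {}
        self.domain_limits = domain_limits
        self.default_limit = default_limit

    def submit(self, url: str) -> None:
        self.queue.append(url)

    def _limit(self, domain: str) -> int:
        return self.domain_limits.get(domain, self.default_limit)

    def next_task(self) -> Optional[str]:
        """Return the first queued URL whose domain is under its cap, or None."""
        for _ in range(len(self.queue)):
            url = self.queue.popleft()
            domain = urlsplit(url).hostname or ""
            if self.in_flight.get(domain, 0) < self._limit(domain):
                self.in_flight[domain] = self.in_flight.get(domain, 0) + 1
                return url
            # Domain saturated: move the task to the back and keep looking.
            self.queue.append(url)
        return None

    def complete(self, url: str, ok: bool) -> None:
        """Release the domain slot; failed tasks re-enter the shared queue."""
        domain = urlsplit(url).hostname or ""
        self.in_flight[domain] -= 1
        if not ok:
            self.queue.append(url)
```

Because the cap is checked at dispatch time, a slow or sensitive domain never monopolizes workers: while it is saturated, idle workers are handed tasks for other domains.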

What ties these patterns together is a fundamental shift: “Scraping is no longer about executing requests—it is about orchestrating interactions with external systems.”
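One way to build the incremental crawling controllers described above is to store a fingerprint of each page's extracted record and skip downstream processing when it has not changed. This is a sketch under the assumption that records are JSON-serializable dicts; the `IncrementalController` class is hypothetical. Hashing extracted fields rather than raw HTML avoids false "changes" caused by rotating ads or embedded timestamps. Where a site supports HTTP conditional requests (`ETag` with `If-None-Match`, answered by `304 Not Modified`), the download itself can be skipped as well.

```python
import hashlib
import json
from typing import Dict


class IncrementalController:
    """Remembers a fingerprint per URL and reports only records that changed."""

    def __init__(self) -> None:
        self._seen: Dict[str, str] = {}  # url -> fingerprint of last extracted record

    @staticmethod
    def fingerprint(record: dict) -> str:
        # Canonical JSON (sorted keys, fixed separators) so key order does not alter the hash.
        canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

    def has_changed(self, url: str, record: dict) -> bool:
        fp = self.fingerprint(record)
        if self._seen.get(url) == fp:
            return False  # unchanged: skip parsing, enrichment, and storage writes
        self._seen[url] = fp
        return True
```

Only records for which `has_changed` returns `True` need to flow to later stages, so the expensive part of the pipeline scales with the rate of change on the target sites rather than with catalog size.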

3. Auto-Scaling as a Behavioral System, Not a Resource Trigger

Traditional scaling models rely on infrastructure metrics such as CPU or memory usage. In Kubernetes web scraping, these signals are often insufficient and misleading. The real constraints are external and behavioral; a system may have idle CPU capacity, but scaling aggressively can trigger immediate blocking or rate limiting.

This is why advanced systems implement what we define as behavior-driven auto-scaling. Instead of reacting only to internal metrics, the system continuously evaluates external signals:

- Response latency by domain.
- Variations in content structure.
- Success vs. failure ratios over time.

Scaling decisions are adjusted accordingly. For example, an increase in blocking signals may trigger a controlled reduction in concurrency, while stable responses allow aggressive scaling to maximize throughput. This approach creates a feedback loop between the Kubernetes web scraping infrastructure and its environment.
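The feedback loop can be sketched with additive-increase/multiplicative-decrease (AIMD), a control scheme borrowed from TCP congestion control; it is one possible technique, not necessarily the one any particular system uses. The hypothetical `next_concurrency` function halves a domain's concurrency when the block ratio or tail latency crosses a threshold, grows it by a fixed step while success ratios stay high, and otherwise holds steady. All thresholds and the `DomainSignals` fields are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class DomainSignals:
    success_ratio: float   # successful responses / total over the last window
    block_ratio: float     # share of responses classified as blocks (e.g. 403/429, CAPTCHA pages)
    p95_latency_ms: float  # 95th-percentile response latency for the domain


def next_concurrency(current: int, s: DomainSignals, *, floor: int = 1, ceiling: int = 64,
                     block_threshold: float = 0.05, latency_budget_ms: float = 3000.0,
                     step: int = 4, backoff: float = 0.5) -> int:
    """AIMD: cut concurrency multiplicatively on block/latency signals, grow additively otherwise."""
    if s.block_ratio > block_threshold or s.p95_latency_ms > latency_budget_ms:
        target = int(current * backoff)   # multiplicative decrease: react fast to blocking
    elif s.success_ratio >= 0.95:
        target = current + step           # additive increase while the site looks healthy
    else:
        target = current                  # ambiguous signals: hold steady
    return max(floor, min(ceiling, target))
```

A larger `step` makes growth more aggressive when responses are stable, while the multiplicative cut keeps the reaction to blocking fast. The returned value could drive a per-domain worker semaphore or feed a replica count; note that CPU and memory appear nowhere in the decision.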

The impact of this efficient resource usage is measurable:

- Significant reduction in unnecessary retries.
- Stabilization of success rates above 95%.
- Cost reductions driven by smarter allocation.
- Faster recovery from disruptions, often within seconds.

In this model, scaling is no longer about “doing more,” but about doing the right amount at the right time.

4. Case Study: Retail Intelligence Transformation in Latin America

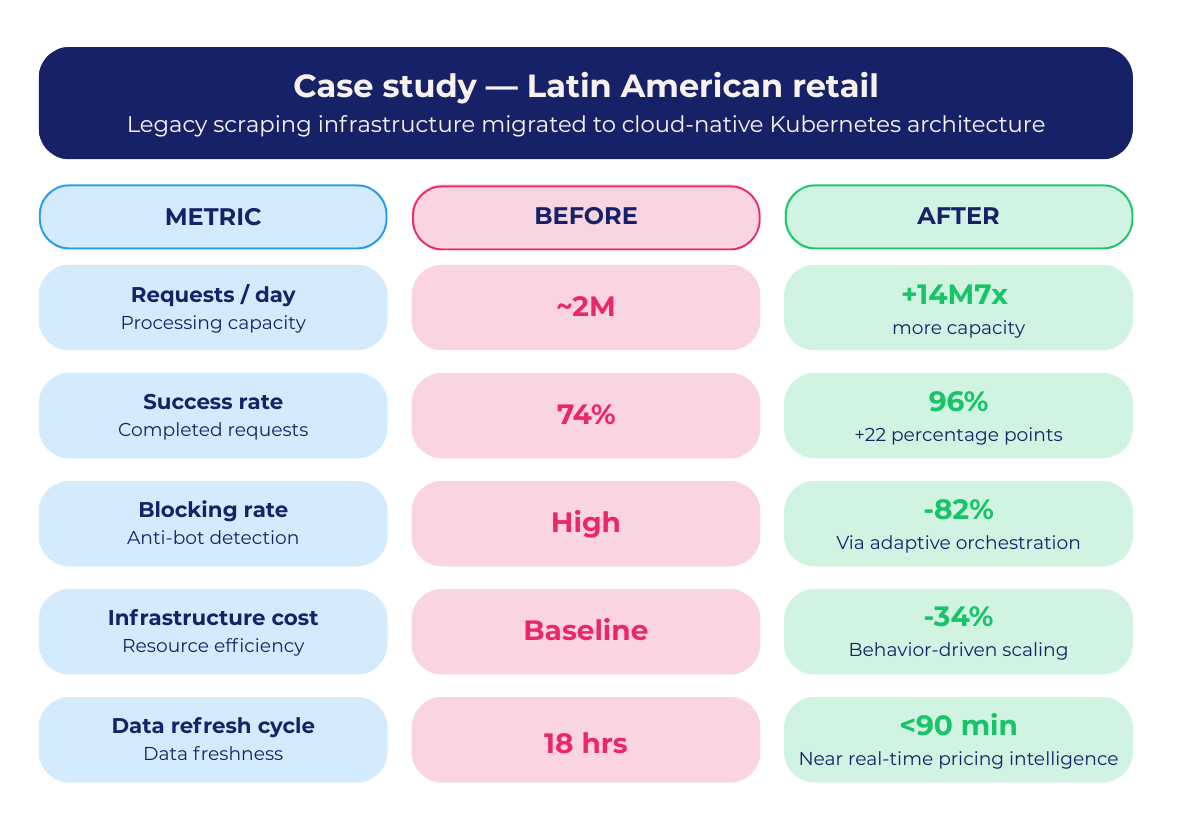

A leading retail group operating across multiple Latin American markets faced a growing challenge: their pricing intelligence depended on legacy infrastructure that struggled with scale, inconsistency, and rising operational costs.

The system relied on monolithic jobs with limited fault tolerance. As data volume increased, so did instability. Blocking rates rose and business teams lost confidence in the data. To solve this, Scraping Pros designed and implemented a cloud-native architecture based on Kubernetes web scraping orchestration.

The Solution Approach

The transition introduced a modern framework for scalable data extraction:

- Distributed crawling with dynamic workload allocation.
- Behavior-driven auto-scaling based on domain-level signals.
- Segmented identity management to reduce detection.
- Incremental data extraction to minimize unnecessary load.
- Full observability across pipelines for real-time monitoring.

The Results

The shift to a Kubernetes web scraping model redefined how their data operations were governed:

- Processing Capacity: Increased to over 14 million requests per day.
- Success Rate: Improved from 74% to 96%, despite stronger anti-bot defenses.
- Blocking Rate: Reduced by 82% through adaptive orchestration.
- Infrastructure Costs: Decreased by 34% due to efficient resource allocation.
- Data Refresh Cycles: Reduced from 18 hours to under 90 minutes.

Beyond these metrics, the most significant outcome was strategic. The organization moved from delayed, reactive analysis to continuous, real-time pricing intelligence, directly impacting their competitiveness in dynamic markets.

5. Closing Insight

Cloud-native web scraping is not about adopting Kubernetes as a tool. It is about embracing a new operational model where data extraction is treated as a distributed, adaptive, and business-critical system.

At Scraping Pros, this is how we approach Kubernetes web scraping at scale: not as isolated processes, but as orchestrated infrastructures designed to evolve with the complexity of the web.

Because at enterprise scale, the advantage is no longer just in extracting data—it is in how reliably, efficiently, and intelligently you operate the systems that extract it. If you want to dive deeper into these strategies, explore our full web scraping blog.

FAQs

- What is Kubernetes web scraping and why is it important for enterprises?

Kubernetes web scraping refers to running distributed data extraction workloads using container orchestration. It allows enterprises to scale scraping operations efficiently, improve fault tolerance, and manage complex, high-volume data pipelines in real time.

- How does cloud-native scraping improve scalability compared to traditional methods?

Cloud-native scraping enables dynamic scaling based on workload and external signals, rather than fixed infrastructure. This reduces costs, increases reliability, and allows systems to handle millions of requests without manual intervention or redesign.

- What is behavior-driven auto-scaling in web scraping?

Behavior-driven auto-scaling adjusts scraping activity based on external factors like blocking rates, response latency, and error patterns. This approach improves success rates and prevents overloading target sites, making data extraction more stable and efficient.

- Why is Kubernetes a better architecture for large-scale web scraping?

Kubernetes provides self-healing, workload isolation, and automated orchestration. This allows scraping systems to recover from failures instantly, optimize resource usage, and maintain high performance even in volatile or protected environments.