In today’s data-driven economy, scalable web scraping is no longer a technical differentiator—it is a structural capability. Organizations across industries rely on large-scale data extraction to inform pricing, monitor competitors, optimize supply chains, and detect market signals in real time.

However, as companies scale, they encounter a critical inflection point: standardized solutions stop being sufficient. What worked at pilot stage becomes fragile, expensive, and difficult to govern.

This is where the real shift happens—from tools to infrastructure, and from vendors to strategic partners.

At Scraping Pros, we work with organizations that have already validated the value of data extraction and now need to operationalize it at scale, aligning technology with business outcomes, compliance requirements, and long-term sustainability.

1. Web Scraping Scalability Is Not Volume: It Is Stability Under Change

One of the most persistent misconceptions about web scraping scalability is defining it as volume. In reality, volume is only a symptom. The real challenge lies in maintaining stability as systems grow and external conditions evolve.

At small scale, inefficiencies remain hidden. At enterprise scale, they become structural risks. A slight drop in success rate can translate into millions of failed data points. Latency increases can disrupt downstream analytics. Manual interventions become operational bottlenecks.
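To make the arithmetic concrete, here is a small worked sketch. The monthly volume and both success rates are hypothetical figures chosen for illustration, not measurements from any client system.

```python
def failed_requests(total_requests: int, success_rate: float) -> int:
    """Expected number of failed requests at a given success rate."""
    return round(total_requests * (1.0 - success_rate))


# Hypothetical workload: 500 million requests per month.
monthly_requests = 500_000_000

before = failed_requests(monthly_requests, 0.99)  # success rate before the drop
after = failed_requests(monthly_requests, 0.97)   # success rate after the drop

# A two-point drop in success rate adds 10,000,000 failed requests per month.
extra_failures = after - before
print(f"Additional failed requests per month: {extra_failures:,}")
```

At pilot volumes the same two-point drop would be a rounding error, which is why the problem tends to surface only after scale-up.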

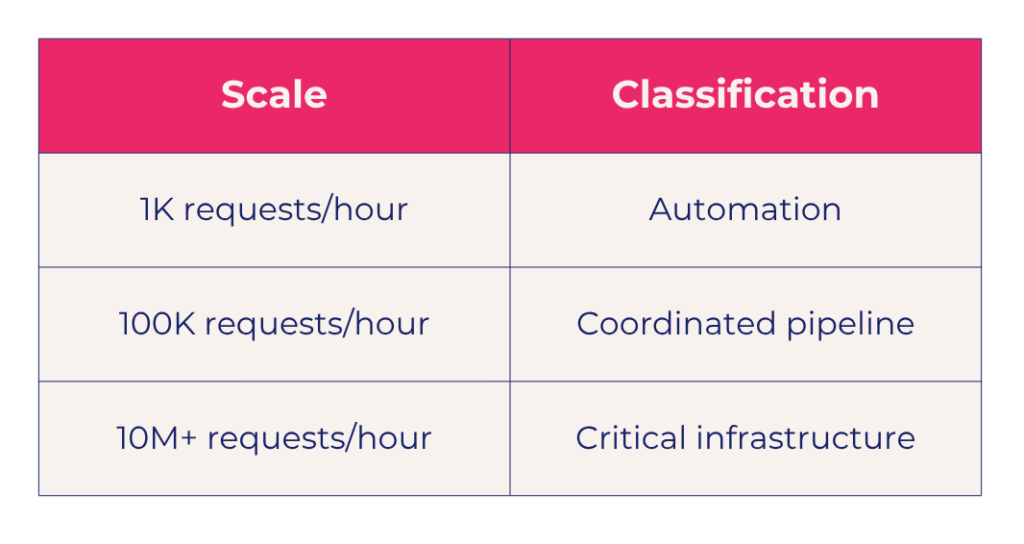

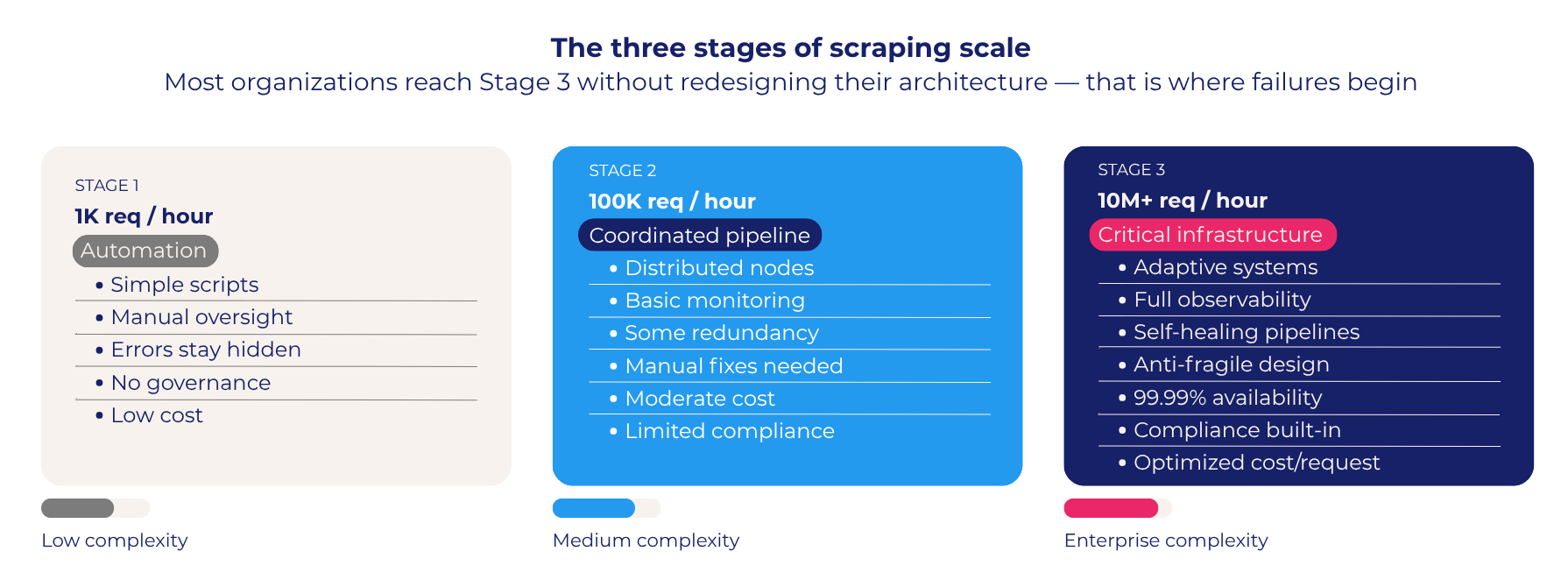

A more accurate model of high-volume data collection looks like this:

Most organizations reach this third stage without having redesigned their architecture. That is precisely where failures begin.

Scraping Pros typically enters at this point: when companies need to re-architect their enterprise scraping infrastructure to ensure stability, observability, and cost control under continuous change.

2. From Tools to Enterprise Scraping Infrastructure: The Architectural Shift

Enterprise scraping infrastructure is not about choosing a better tool—it is about designing a system that integrates seamlessly into the organization’s data ecosystem.

Traditional solutions focus on extraction. Enterprise systems focus on data flow orchestration. This includes how data is collected, validated, transformed, and delivered into internal systems such as data warehouses, BI platforms, or machine learning pipelines.
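As a rough illustration of the validation step in that flow, the sketch below turns raw scraped rows into typed records and drops the ones that are unusable. The `PriceRecord` fields, the validation rules, and the sample rows are all assumptions made for this example; a real pipeline would define them per source and hand the result to its own delivery layer.

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional


@dataclass
class PriceRecord:
    sku: str
    price: float
    currency: str


def validate(raw: dict) -> Optional[PriceRecord]:
    """Return a typed record, or None when the raw row is unusable."""
    try:
        price = float(raw["price"])
        sku = str(raw["sku"]).strip()
        currency = str(raw["currency"]).strip().upper()
    except (KeyError, TypeError, ValueError):
        return None
    # `not (price > 0)` also rejects NaN, which would slip past `price <= 0`.
    if not sku or not (price > 0) or len(currency) != 3:
        return None
    return PriceRecord(sku=sku, price=price, currency=currency)


def run_pipeline(raw_rows: Iterable[dict]) -> List[PriceRecord]:
    """Validate and normalize collected rows; delivery is left to the caller."""
    records = (validate(row) for row in raw_rows)
    return [record for record in records if record is not None]


rows = [
    {"sku": "A-1", "price": "19.90", "currency": "usd"},
    {"sku": "A-2", "price": "n/a", "currency": "usd"},  # rejected: price is not numeric
    {"sku": "", "price": "5", "currency": "usd"},       # rejected: empty SKU
]
clean = run_pipeline(rows)
```

Rejecting bad rows before delivery keeps extraction failures from silently reaching the warehouse or BI layer.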

What differentiates a mature scalable web scraping architecture is not only its technical components, but its ability to align with business processes. Data must be available when decisions are made, not hours later. Pipelines must be resilient to source changes and anti-bot updates. And systems must be auditable, traceable, and compliant at every layer.

At Scraping Pros, we design custom extraction ecosystems, not generic pipelines. Every architecture is tailored to the client’s industry dynamics, the complexity of target sources, required data freshness and volume, and regulatory constraints. This customization transforms scraping from an operational task into a business-critical capability.

3. Distributed Scraping Systems That Think, Not Just Scale

Scaling large-scale data extraction is not just about distributing workload—it is about coordinating behavior across the system.

Most distributed scraping systems fail because they treat nodes as independent actors. In reality, large-scale scraping requires coherent system behavior: requests must align with expected user patterns, identity must be managed consistently, timing must reflect real-world traffic distributions, and the system must adapt dynamically to feedback.

At Scraping Pros, distributed scraping is designed as an adaptive system where request strategies evolve based on response patterns, identity management reflects real network behavior, workloads are distributed based on complexity rather than volume, and system-wide consistency reduces detection risk.
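One simple way to picture distribution by complexity rather than volume is a greedy scheduler that always hands the heaviest remaining job to the least-loaded worker. This is a generic sketch, not a description of Scraping Pros' internal scheduler; the job names, complexity scores, and worker names are hypothetical.

```python
import heapq
from typing import Dict, List, Tuple


def assign_by_complexity(jobs: Dict[str, float], workers: List[str]) -> Dict[str, List[str]]:
    """Greedy assignment: heaviest job first, always to the least-loaded worker."""
    # Heap entries are (current_load, worker_name); the smallest load pops first.
    heap: List[Tuple[float, str]] = [(0.0, worker) for worker in workers]
    heapq.heapify(heap)
    assignment: Dict[str, List[str]] = {worker: [] for worker in workers}

    for job, cost in sorted(jobs.items(), key=lambda item: item[1], reverse=True):
        load, worker = heapq.heappop(heap)
        assignment[worker].append(job)
        heapq.heappush(heap, (load + cost, worker))
    return assignment


# Hypothetical complexity scores: rendering and pagination cost more than static pages.
jobs = {
    "static-catalog": 1.0,
    "js-rendered-listing": 5.0,
    "paginated-search": 3.0,
    "price-api": 1.0,
}
plan = assign_by_complexity(jobs, ["worker-a", "worker-b"])
loads = {worker: sum(jobs[job] for job in assigned) for worker, assigned in plan.items()}
```

In this example one worker receives a single heavy job and the other receives three lighter ones, so the job counts differ while the total load per worker stays equal.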

This approach is particularly critical in environments protected by advanced anti-bot systems, where inconsistency, not volume, is the primary detection signal. Because intelligence is embedded in the architecture itself, scaling reinforces system performance over time rather than introducing fragility.

4. Performance and Cost: The Real Optimization Problem in High-Volume Data Collection

At enterprise scale, web scraping performance cannot be measured by throughput alone. The real question is: how efficiently does the system convert requests into usable data?

Key performance indicators for scalable web scraping shift accordingly:

- Cost per successful request

- Data accuracy and completeness

- Latency impact on business processes

- Infrastructure efficiency under load
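The first of these indicators is easy to compute once retries are counted as sent requests. The sketch below compares two hypothetical runs that deliver the same amount of usable data; the `RunStats` fields and all figures are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class RunStats:
    requests_sent: int     # every request issued, including retries
    successful: int        # responses that yielded usable records
    infra_cost_usd: float  # proxy and compute spend for the run

    @property
    def success_rate(self) -> float:
        return self.successful / self.requests_sent if self.requests_sent else 0.0

    @property
    def cost_per_success(self) -> float:
        return self.infra_cost_usd / self.successful if self.successful else float("inf")


# Same usable output, but the second run needs twice the traffic to get there.
lean = RunStats(requests_sent=1_000_000, successful=950_000, infra_cost_usd=190.0)
retry_heavy = RunStats(requests_sent=2_000_000, successful=950_000, infra_cost_usd=380.0)

for name, run in (("lean", lean), ("retry-heavy", retry_heavy)):
    print(f"{name}: success rate {run.success_rate:.1%}, "
          f"cost per success ${run.cost_per_success:.6f}")
```

Throughput alone would rank the retry-heavy run higher, while cost per successful request shows it paying double for identical output.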

In many organizations, costs grow faster than value. This is usually the result of retry-heavy systems, inefficient crawling strategies, or a lack of coordination between components.

Scraping Pros addresses this through architecture-level optimization—not superficial tuning. This means adaptive throttling based on real-time response signals, intelligent retry strategies that minimize redundant traffic, incremental crawling to avoid unnecessary data refreshes, and infrastructure profiling to optimize compute allocation.
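Two of these ideas, adaptive throttling and jittered retries, can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the production logic: treating HTTP 429 and 503 as pushback signals, the doubling and 0.9 recovery factors, and the names `AdaptiveThrottle` and `backoff_delay` are all choices made for this example.

```python
import random


class AdaptiveThrottle:
    """Adjusts the delay between requests based on observed status codes."""

    def __init__(self, base_delay: float = 0.5, min_delay: float = 0.1, max_delay: float = 60.0):
        self.delay = base_delay
        self.min_delay = min_delay
        self.max_delay = max_delay

    def record(self, status_code: int) -> float:
        if status_code in (429, 503):
            # Pushback from the target: back off multiplicatively.
            self.delay = min(self.delay * 2.0, self.max_delay)
        elif 200 <= status_code < 300:
            # Healthy response: recover slowly toward the minimum delay.
            self.delay = max(self.delay * 0.9, self.min_delay)
        return self.delay


def backoff_delay(attempt: int, base: float = 1.0, cap: float = 30.0, rng=random) -> float:
    """Exponential backoff with full jitter: uniform in [0, min(cap, base * 2**attempt)]."""
    return rng.uniform(0.0, min(cap, base * (2 ** attempt)))


throttle = AdaptiveThrottle(base_delay=1.0)
for code in (200, 200, 429, 429, 200):
    throttle.record(code)
print(f"Current delay between requests: {throttle.delay:.3f}s")
```

Slowing down quickly and recovering gradually keeps the system from hammering a source that is already pushing back, and the jitter spreads retries out so that failed requests do not return in synchronized bursts.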

The result is a system that scales economically, not just technically—where increased volume does not imply proportional cost growth.

5. Availability, Resilience, and Anti-Fragility in Enterprise Scraping Infrastructure

In enterprise environments, downtime is not just a technical issue—it is a strategic risk. Missing data windows can directly impact pricing decisions, competitive analysis, and operational planning.

Understanding availability thresholds is critical for any enterprise scraping infrastructure:

- 99.9% availability still allows roughly 8.8 hours of downtime per year, which can mean significant data gaps

- 99.99% (about 53 minutes of downtime per year) is often the minimum for real-time decision environments
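The downtime budget behind any availability target is simple arithmetic. The sketch below works it out for a 30-day month; the choice of period is an assumption for illustration, and the function accepts any other window.

```python
MINUTES_PER_30_DAYS = 30 * 24 * 60  # 43,200 minutes


def allowed_downtime_minutes(availability_pct: float,
                             period_minutes: float = MINUTES_PER_30_DAYS) -> float:
    """Maximum downtime permitted in the period at a given availability target."""
    return period_minutes * (1.0 - availability_pct / 100.0)


three_nines = allowed_downtime_minutes(99.9)   # about 43.2 minutes per 30 days
four_nines = allowed_downtime_minutes(99.99)   # about 4.3 minutes per 30 days

print(f"99.9%:  {three_nines:.1f} minutes per 30 days")
print(f"99.99%: {four_nines:.1f} minutes per 30 days")
```

For a pipeline that refreshes prices every few minutes, the difference between the two budgets is the difference between losing many refresh cycles in a month and losing roughly one.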

But availability alone is not enough. Systems must be resilient to continuous change in external environments.

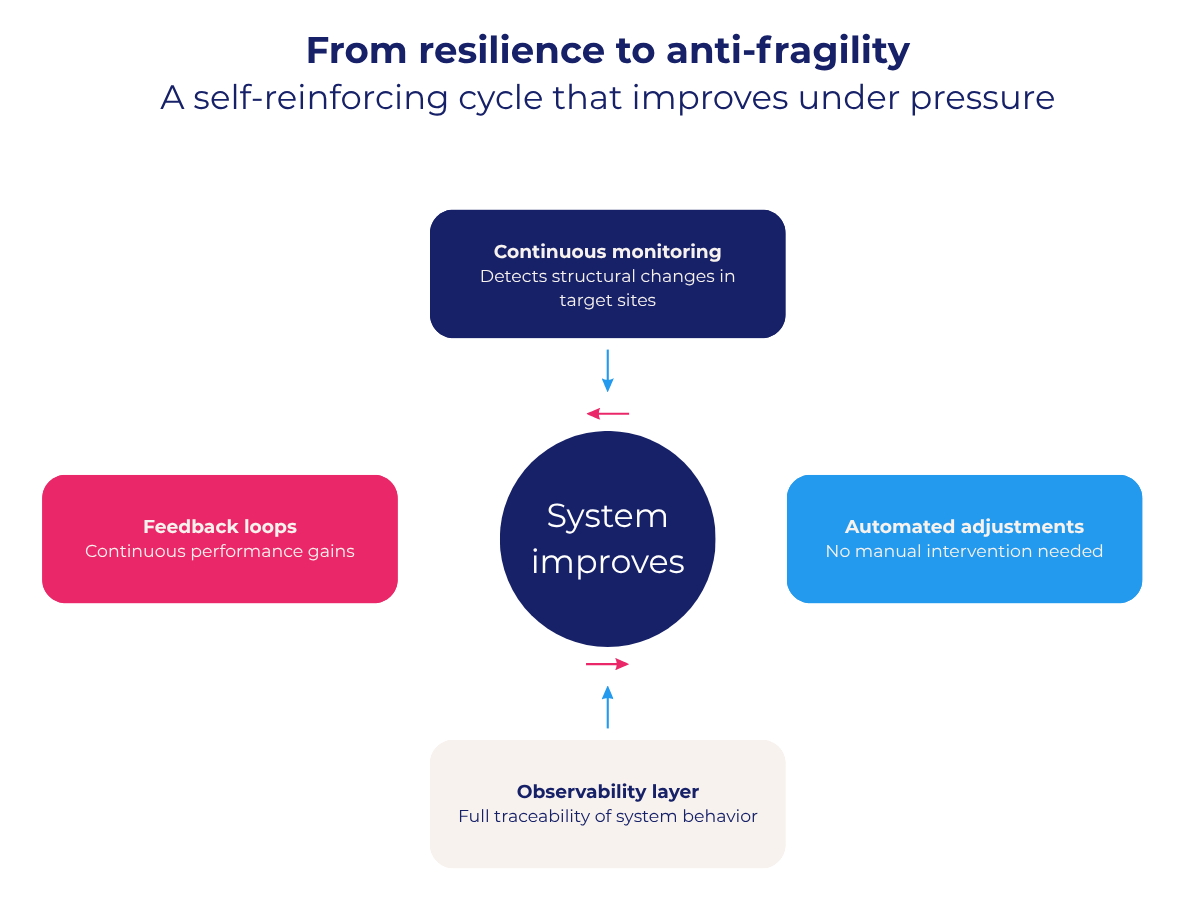

Scraping Pros designs infrastructures that go beyond resilience into anti-fragility: continuous monitoring detects structural changes in target sites, automated adjustments reduce dependency on manual fixes, observability layers provide full traceability, and feedback loops enable continuous performance improvement.
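As one illustration of detecting structural change, the sketch below fingerprints a page by its tag and class sequence while ignoring text content, so a price update leaves the fingerprint intact but a layout change does not. It uses only the Python standard library; the fingerprinting scheme and the sample markup are assumptions for this example, and production monitoring would typically combine several signals.

```python
import hashlib
from html.parser import HTMLParser


class _TagCollector(HTMLParser):
    """Collects 'tag.class' tokens for every opening tag in a document."""

    def __init__(self):
        super().__init__()
        self.tags = []

    def handle_starttag(self, tag, attrs):
        # Text content is ignored on purpose; only structure is recorded.
        classes = dict(attrs).get("class") or ""
        self.tags.append(f"{tag}.{classes}")


def structure_fingerprint(html: str) -> str:
    """Hash of the page's tag/class sequence, independent of its text."""
    collector = _TagCollector()
    collector.feed(html)
    collector.close()
    return hashlib.sha256("|".join(collector.tags).encode("utf-8")).hexdigest()


baseline = structure_fingerprint('<div class="product"><span class="price">10</span></div>')
same_layout = structure_fingerprint('<div class="product"><span class="price">12</span></div>')
new_layout = structure_fingerprint('<div class="item"><p class="amount">12</p></div>')

layout_changed = new_layout != baseline  # True: selectors likely need review
```

Comparing fingerprints between crawls gives an early warning before extraction quietly starts returning empty or wrong fields.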

This ensures that the system does not degrade under pressure—it improves through exposure, delivering predictable data pipelines even in highly dynamic and protected environments.

6. Scalable Web Scraping Architecture Best Practices: Case Study in Latin America

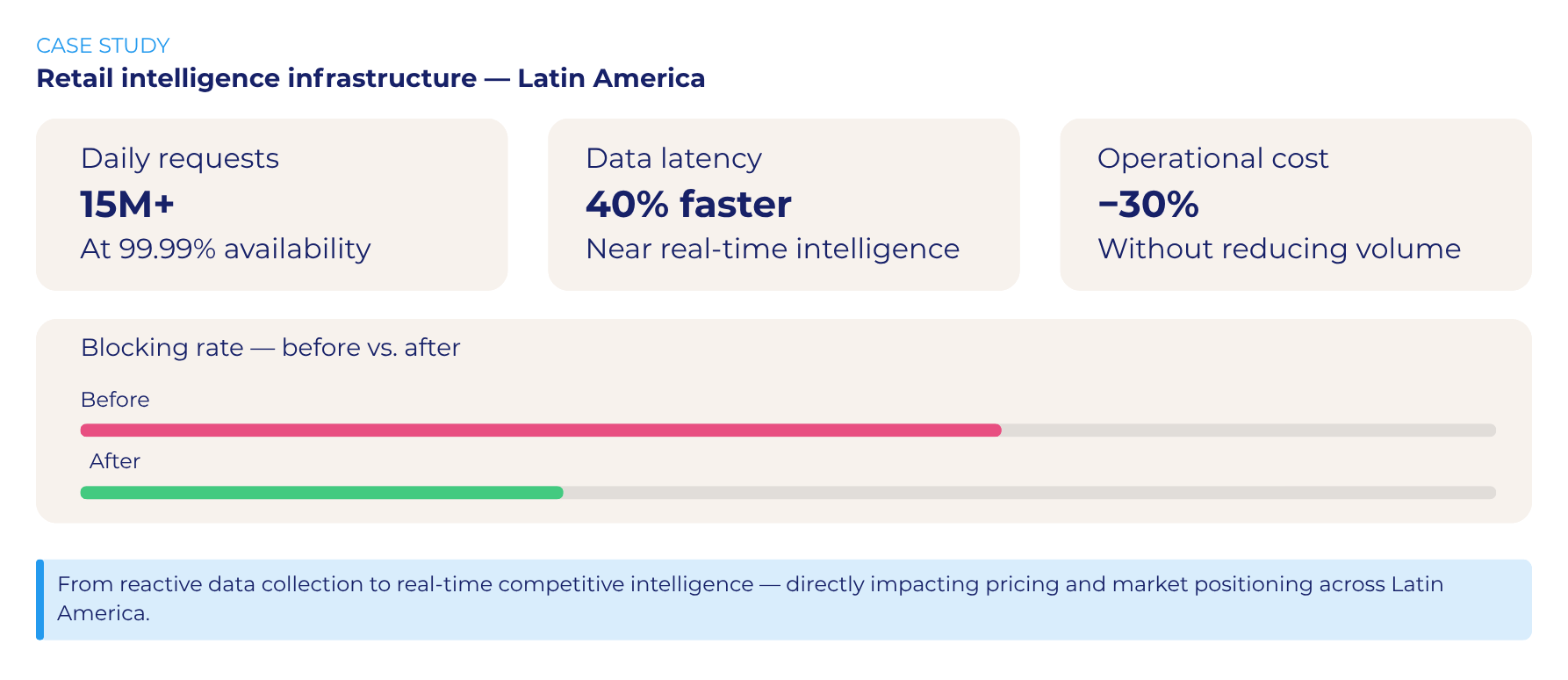

A leading retail group in Latin America approached Scraping Pros after outgrowing their existing scraping setup. Their internal systems could not keep up with the speed and complexity of digital marketplaces across multiple countries.

Objective: Build a scalable infrastructure to monitor pricing, promotions, and availability across regional e-commerce platforms in near real time.

Approach: Scraping Pros designed a custom distributed architecture, combining incremental crawling, selective scraping, and adaptive request strategies aligned with local traffic patterns—fully integrated into the company’s data warehouse and BI environment.
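The incremental part of such an approach can be approximated with a per-URL content hash, so that unchanged pages are not reprocessed or reloaded downstream. This is a generic sketch rather than the architecture built for this client; the `IncrementalStore` class, the in-memory dictionary, and the example URL are hypothetical, and a real deployment would persist the hashes and could also use HTTP conditional requests to skip the download itself.

```python
import hashlib
from typing import Dict


class IncrementalStore:
    """Remembers a content hash per URL so unchanged pages can be skipped."""

    def __init__(self) -> None:
        self._seen: Dict[str, str] = {}

    def has_changed(self, url: str, body: str) -> bool:
        """Return True the first time a URL is seen or when its content differs."""
        digest = hashlib.sha256(body.encode("utf-8")).hexdigest()
        if self._seen.get(url) == digest:
            return False
        self._seen[url] = digest
        return True


store = IncrementalStore()
url = "https://example.com/product/123"  # placeholder URL

first_visit = store.has_changed(url, "price: 10.00")    # new page, process it
unchanged = store.has_changed(url, "price: 10.00")      # identical content, skip it
price_update = store.has_changed(url, "price: 9.50")    # content differs, process it
```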

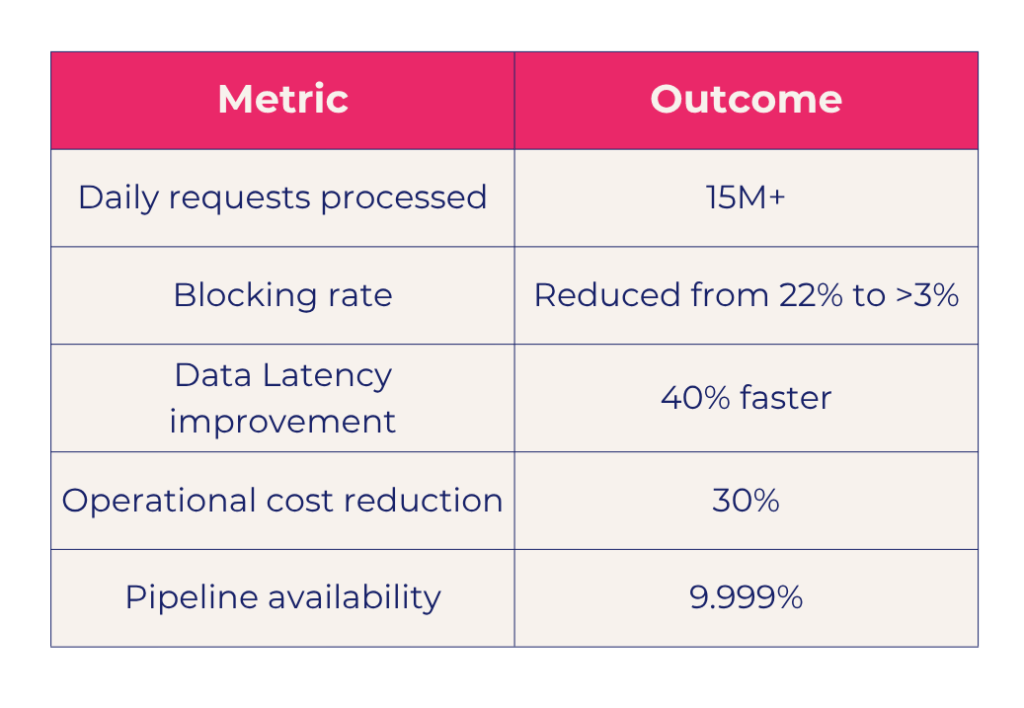

Results following scalable web scraping architecture best practices:

Beyond performance, the key transformation was strategic: the company moved from reactive data collection to real-time competitive intelligence, directly impacting pricing and market positioning.

Frequently Asked Questions About Enterprise-Scale Web Scraping

What is the difference between web scraping scalability and volume? Scalability refers to maintaining stability and performance as systems grow and external conditions change. Volume is simply the number of requests processed—a symptom of scale, not a measure of it.

When does scraping become enterprise scraping infrastructure? When your system processes millions of requests per hour, integrates with data warehouses and BI tools, and requires 99.99%+ availability with full observability and compliance controls.

What are scalable web scraping architecture best practices? Key best practices include adaptive request throttling, distributed identity management, incremental crawling strategies, real-time monitoring, and integrating feedback loops that allow the system to self-optimize over time.

How do distributed scraping systems avoid detection at scale? By ensuring system-wide behavioral consistency—not just distributing volume. Detection is triggered by inconsistency in timing, identity, and traffic patterns, not by volume alone.

Closing: From Enterprise Scraping Infrastructure to Competitive Advantage

Scalable web scraping is not a technical upgrade—it is a strategic transition.

Organizations that rely on generic tools will inevitably face limitations in flexibility, control, and efficiency. Those that invest in custom, enterprise-grade large-scale data extraction infrastructure gain a fundamentally different advantage: the ability to transform external data into continuous, reliable, and actionable intelligence.

At Scraping Pros, we operate at the intersection of data engineering, infrastructure design, and business strategy. Our role is not just to extract data, but to build systems that align with how organizations compete.

Because in today’s markets, leadership is no longer defined by access to data—but by the ability to control, scale, and operationalize it with precision.