AI web scraping has exposed a ceiling that most data teams hit quietly and then work around. The script breaks, someone patches it. The site restructures its layout, someone rewrites the selectors. The error rate creeps up over months — from 5% to 12% to eventually something that the data team just learns to expect and compensate for downstream. The ceiling is roughly 85% extraction accuracy at scale, and it is not a failure of engineering effort. It is a structural limitation of rule-based systems trying to keep up with a web that was never designed to stay still.

Machine learning changes that ceiling. It does so not by making the rules better, but by replacing them with pattern recognition that adapts. The jump from 85% to 97% accuracy is not a marginal improvement in data quality. At 10 million records, that 12-point gap is 1.2 million records that no longer need manual review or reprocessing, and no longer feed silent errors into your downstream analysis.
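The arithmetic behind that gap is easy to check. The short sketch below assumes, as the paragraph does, that every incorrectly extracted record needs some form of follow-up; the function name is illustrative.

```python
def misextracted_records(total_records: int, accuracy_pct: int) -> int:
    """Records extracted incorrectly at a given accuracy (whole percent)."""
    return total_records * (100 - accuracy_pct) // 100


total = 10_000_000
rule_based_errors = misextracted_records(total, 85)  # 15% of 10 million
ml_errors = misextracted_records(total, 97)          # 3% of 10 million
records_saved = rule_based_errors - ml_errors

print(f"{records_saved:,} fewer records to review or reprocess")
```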

This article explains how ML-powered AI web scraping actually works in production, where it outperforms traditional methods, and where the real operational leverage is — including the cases where traditional scraping remains the right call.

1. Machine Learning Fundamentals in Web Scraping

The core problem that machine learning solves in AI web scraping is structural variability. Traditional extraction relies on fixed selectors: a CSS path, an XPath expression, a set of regex patterns. These work reliably on static sites with stable markup. They break silently on everything else — A/B test variants, personalized layouts, progressive rendering, regional content differences.
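To make the silent failure concrete, here is a minimal sketch of a rule-based extractor. The HTML snippets, class names, and the regex rule are all hypothetical; the point is only that a renamed class yields an empty result rather than an error.

```python
import re
from typing import Optional

# Hypothetical rule: the price sits in a <span> whose class is exactly "price".
PRICE_RULE = re.compile(r'<span class="price">\s*\$([0-9]+\.[0-9]{2})\s*</span>')


def extract_price(html: str) -> Optional[str]:
    """Return the price string if the fixed rule matches, otherwise None."""
    match = PRICE_RULE.search(html)
    return match.group(1) if match else None


original = '<div><h1>Desk Lamp</h1><span class="price">$24.99</span></div>'
# Same product, same visible price, but an A/B variant renamed the class.
variant = '<div><h1>Desk Lamp</h1><span class="pdp-price--b">$24.99</span></div>'

print(extract_price(original))  # 24.99
print(extract_price(variant))   # None: no exception, just a missing value
```

Nothing in the second call signals a problem. Unless the pipeline tracks missing-value rates per field, the gap only surfaces downstream.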

An ML-powered extractor does not look for a specific CSS class. It learns what “a product price” looks like across a hundred layout variations: its typical position relative to the product name, its font weight, its proximity to currency symbols, its behavior across mobile and desktop variants. When the site updates its markup, the model does not break. It applies the same learned representation to the new structure.

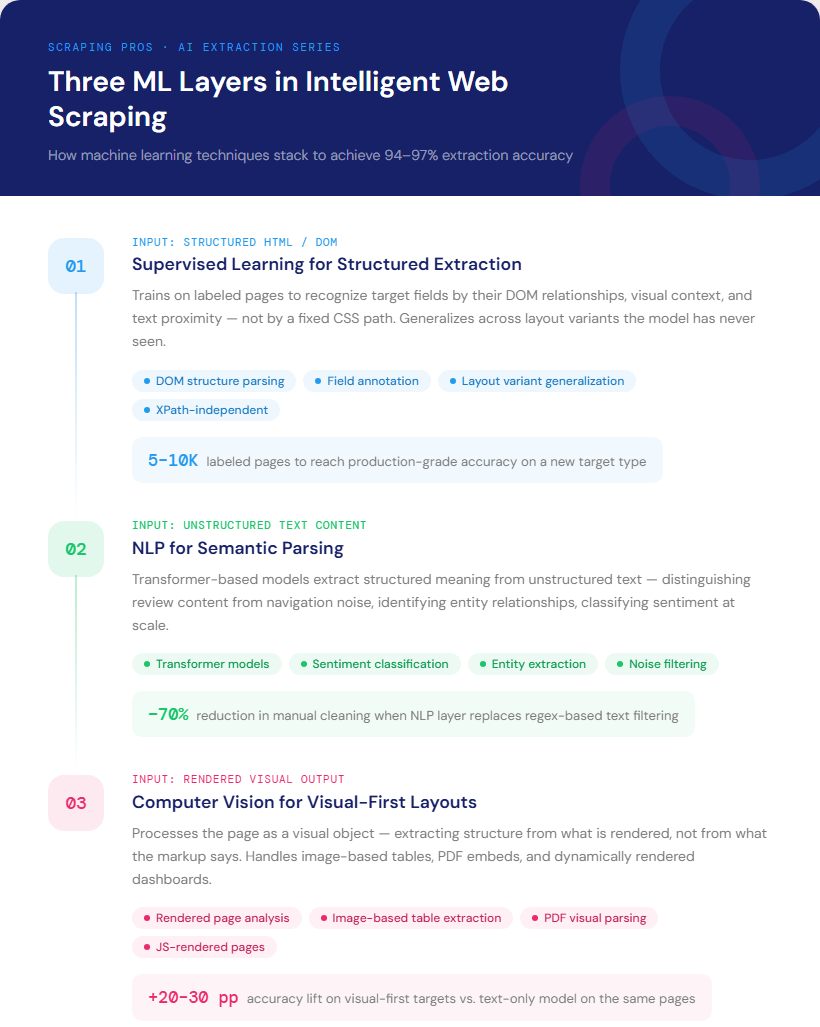

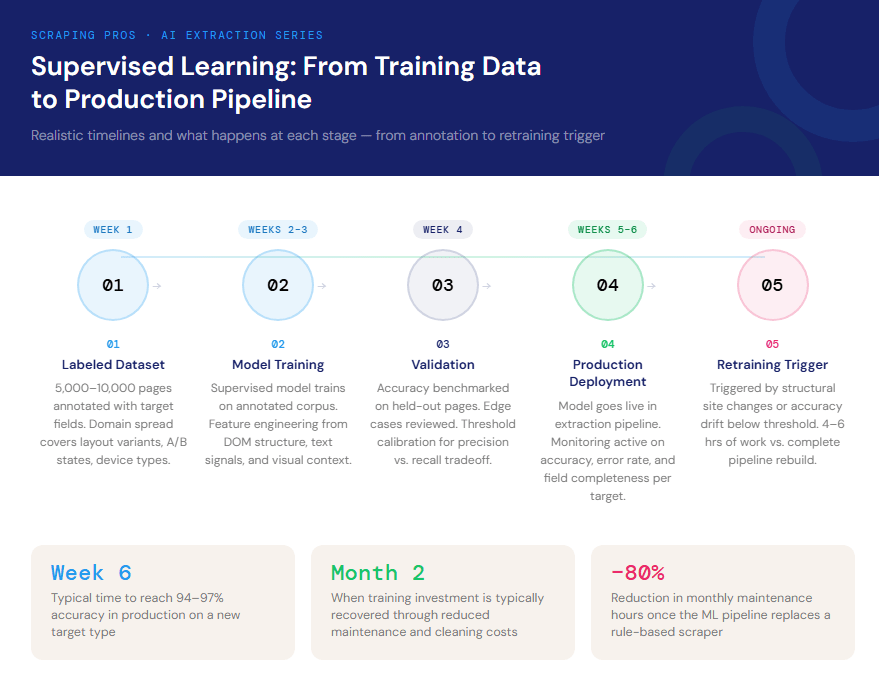

A. Supervised learning for structured extraction. You train a model on labeled examples — pages where the target fields are already annotated. The model learns the relationship between DOM structure, text content, and visual context that reliably identifies each field. In our production pipelines, supervised models trained on 5,000–10,000 annotated pages generalize with 94–97% accuracy across layout variants the model has never seen.

B. NLP for semantic parsing. Not every field has a consistent visual signature. Product descriptions, review sentiment, and entity relationships require understanding language, not just recognizing layout. NLP models can extract structured meaning from unstructured text at a scale and consistency that regex cannot approach.

C. Computer vision for visual-first layouts. Some pages communicate information primarily through position and visual grouping rather than semantic HTML. A price comparison table that renders as an image, a spec sheet embedded in a PDF, a dashboard screenshot — computer vision models treat the page as a visual object and extract structure from what is visible, not from what the markup says.
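As a toy illustration of approach A, the sketch below trains a nearest-centroid classifier on a handful of labeled text nodes. It is a deliberately small stand-in, not a production model: the three text-only features, the labels, and the training strings are assumptions chosen for illustration, and a real extractor would also use DOM and visual features. What it shows is that the decision depends on what a price looks like, not on any class name.

```python
from typing import Dict, List, Tuple

CURRENCY_SYMBOLS = "$€£"
Vector = Tuple[float, ...]


def features(text: str) -> Vector:
    """Markup-independent features for one text node (illustrative choices)."""
    has_currency = 1.0 if any(ch in CURRENCY_SYMBOLS for ch in text) else 0.0
    digit_ratio = sum(ch.isdigit() for ch in text) / max(len(text), 1)
    is_short = 1.0 if len(text) <= 12 else 0.0
    return (has_currency, digit_ratio, is_short)


def train(labeled: List[Tuple[str, str]]) -> Dict[str, Vector]:
    """Compute one mean feature vector (centroid) per label."""
    grouped: Dict[str, List[Vector]] = {}
    for text, label in labeled:
        grouped.setdefault(label, []).append(features(text))
    return {
        label: tuple(sum(column) / len(rows) for column in zip(*rows))
        for label, rows in grouped.items()
    }


def predict(model: Dict[str, Vector], text: str) -> str:
    """Assign the label whose centroid is closest in squared distance."""
    vec = features(text)
    return min(
        model,
        key=lambda label: sum((a - b) ** 2 for a, b in zip(vec, model[label])),
    )


# Hypothetical annotated nodes; a real training set would be thousands of pages.
LABELED = [
    ("$24.99", "price"), ("€ 1.299,00", "price"),
    ("£5.00", "price"), ("$349", "price"),
    ("Desk Lamp", "other"), ("Free shipping on orders over $50", "other"),
    ("Add to cart", "other"), ("4.5 out of 5 stars", "other"),
    ("SKU 88213", "other"),
]

model = train(LABELED)
print(predict(model, "$1,049.00"))         # price (string not in training data)
print(predict(model, "Customer reviews"))  # other
```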

2. AI Web Scraping vs. Traditional Extraction Methods: An Honest Comparison

The performance gap between AI web scraping and traditional approaches is real, but context matters. Poor data quality is commonly reported to cost organizations millions annually, a cost that compounds when extraction accuracy degrades silently over months. Here is where each approach actually stands.

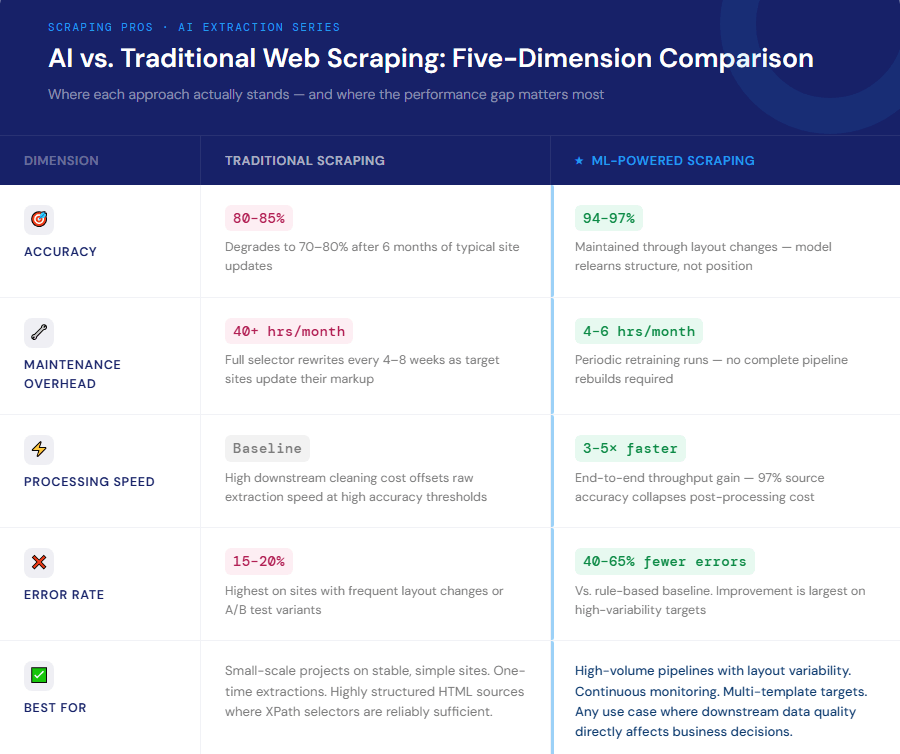

Accuracy under layout variability. Traditional extraction on stable, well-maintained targets: 90–95% accuracy. The same pipeline six months later, after typical site updates: 70–80%. ML-trained models on the same targets maintain 94–97% through layout changes because the model identifies fields from learned patterns rather than depending on the markup staying fixed.

Speed. ML inference adds computation, but the net effect on end-to-end pipeline speed is positive: 3–5x faster throughput compared to traditional scraping at equivalent accuracy. The reason is that traditional pipelines at high accuracy require extensive post-processing, validation, and manual error correction. When AI web scraping accuracy is 97% at the source, the downstream cost of cleaning collapses.

Error rates. Supervised learning reduces extraction errors by 40–65% compared to rule-based baselines. The improvement is not uniform — it is largest on sites with frequent layout changes and smallest on fully static, well-structured targets.

Maintenance cost. This is where the ROI argument gets clearest. A traditional scraper for a complex e-commerce site requires significant re-engineering every 4–8 weeks as the site evolves. An ML model in an AI web scraping pipeline requires periodic retraining (typically a few hours of labeling fresh pages, followed by a retraining run) rather than a complete rebuild.

Where traditional scraping still wins. Small-scale projects on stable, simple sites. One-time extractions where the cost of training data outweighs the benefit of accuracy. Highly structured APIs wrapped in HTML where XPath selectors are trivially reliable. We build ML-powered AI web scraping pipelines at Scraping Pros because that is where our clients operate: at volumes and complexity levels where the advantages compound. But we are direct about the cases where a well-maintained traditional scraper is the right answer.

3. AI Web Scraping in Production

Abstract accuracy numbers are useful. Operational outcomes are more useful. Here are three production cases from our work at Scraping Pros, with realistic timelines and results.

E-commerce price monitoring across heterogeneous layouts

One of our e-commerce clients needed daily price monitoring across 15 competing sites, totaling 2.8 million SKUs. The challenge was not volume but layout heterogeneity. Across those 15 sites, there were over 200 distinct product page templates, and three of the sites ran continuous A/B tests that changed the layout on roughly 30% of pages on any given day.

The traditional scraper we had been maintaining for this client was producing 83% accuracy and requiring 40+ hours of monthly engineering work as sites updated.

We trained a supervised AI web scraping model on 8,000 labeled product pages distributed across the site variants. By week six, accuracy reached 96.2% across all templates — including the A/B test variants the model had never explicitly seen. Monthly maintenance dropped to 4–6 hours of retraining when sites made significant structural changes. The model paid for its training cost — labeled data, compute, integration — by the end of month two.

Review and sentiment extraction at media scale

A market research client was collecting product reviews across 40 consumer electronics platforms to build sentiment benchmarks. The extraction itself was not the problem. The problem was downstream: 25–30% of extracted reviews required manual cleaning because the pipeline was pulling navigation text, promotional copy, and UI elements alongside actual review content.

We built an NLP-based classification layer trained to distinguish genuine review content from surrounding page noise. The proportion of reviews requiring manual cleaning dropped from 25–30% to under 8%. The data team that had been spending three days per week on cleaning now spends half a day. That delta of 2.5 days of analyst time per week is where the ROI lived, not in the AI web scraping infrastructure itself.
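A classification layer of this kind can be sketched with a small multinomial Naive Bayes filter. This is an illustrative stand-in rather than the model used in the project: the training snippets, the two labels, and the choice of Naive Bayes with add-one smoothing are all assumptions.

```python
import math
import re
from collections import Counter
from typing import Dict, List, Tuple


def tokenize(text: str) -> List[str]:
    return re.findall(r"[a-z']+", text.lower())


class NoiseFilter:
    """Multinomial Naive Bayes over word counts with add-one smoothing."""

    def __init__(self, labeled: List[Tuple[str, str]]) -> None:
        self.doc_counts: Counter = Counter()
        self.word_counts: Dict[str, Counter] = {}
        for text, label in labeled:
            self.doc_counts[label] += 1
            self.word_counts.setdefault(label, Counter()).update(tokenize(text))
        self.vocab = set().union(*self.word_counts.values())

    def classify(self, text: str) -> str:
        # Words never seen in training carry no evidence, so they are skipped.
        tokens = [t for t in tokenize(text) if t in self.vocab]
        total_docs = sum(self.doc_counts.values())
        scores = {}
        for label, counts in self.word_counts.items():
            denom = sum(counts.values()) + len(self.vocab)
            score = math.log(self.doc_counts[label] / total_docs)
            score += sum(math.log((counts[t] + 1) / denom) for t in tokens)
            scores[label] = score
        return max(scores, key=scores.get)


# Hypothetical labeled snippets: genuine reviews vs. surrounding page noise.
TRAINING = [
    ("the battery lasts two days and the screen is bright", "review"),
    ("i returned it because the speaker crackles", "review"),
    ("great headphones for the price very comfortable", "review"),
    ("add to cart free shipping", "noise"),
    ("sign in to write a review", "noise"),
    ("sponsored see more deals", "noise"),
]

model = NoiseFilter(TRAINING)
blocks = [
    "Sign in to write a review",
    "The screen is bright and the battery lasts",
    "Sponsored: see more deals",
]
kept = [block for block in blocks if model.classify(block) == "review"]
print(kept)
```

Only the blocks classified as review content are passed downstream, which is where the reduction in manual cleaning comes from.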

Competitor catalog tracking with structural change detection

A consumer goods client needed to track competitor product launches, discontinuations, and catalog changes across 12 brand websites updated weekly. The operational requirement was not just extracting current data — it was detecting when the structure of the data itself changed, which is the signal that something strategically significant had happened.

We trained an ML model to detect structural anomalies: new product categories appearing, pricing tier changes, bundle configurations not seen before. The model flagged actionable changes with 91% precision, generating false positives at a rate low enough that the client’s analyst team could review everything flagged in under two hours per week. The business value was not the data. It was the signal-to-noise ratio on changes that mattered.
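The detector in this case was a trained model. As a much simpler baseline for the same idea, the sketch below diffs two catalog snapshots and computes precision over the flagged changes. The snapshot format, SKU identifiers, and flag wording are hypothetical.

```python
from typing import Dict, List, NamedTuple


class Product(NamedTuple):
    category: str
    price: float


def detect_changes(
    previous: Dict[str, Product], current: Dict[str, Product]
) -> List[str]:
    """Flag categories and products that appear or disappear between snapshots."""
    flags: List[str] = []
    old_categories = {p.category for p in previous.values()}
    new_categories = {p.category for p in current.values()}
    for category in sorted(new_categories - old_categories):
        flags.append(f"new category: {category}")
    for sku in sorted(current.keys() - previous.keys()):
        flags.append(f"new product: {sku}")
    for sku in sorted(previous.keys() - current.keys()):
        flags.append(f"discontinued: {sku}")
    return flags


def precision(true_positives: int, false_positives: int) -> float:
    """Share of flagged changes that analysts confirmed as real."""
    flagged = true_positives + false_positives
    return true_positives / flagged if flagged else 0.0


last_week = {"A1": Product("shampoo", 6.5), "A2": Product("soap", 2.0)}
this_week = {"A1": Product("shampoo", 6.5), "B7": Product("sunscreen", 11.0)}

flags = detect_changes(last_week, this_week)
print(flags)
print(precision(true_positives=91, false_positives=9))  # 0.91
```

A plain diff like this flags every change equally. The value of a learned detector is in ranking which changes matter, which is what keeps the analyst review time short.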

4. Where AI Web Scraping Is Heading Next

The current generation of ML-powered AI web scraping is largely supervised: you provide labeled examples, the model generalizes. The next shift, one we are actively developing in our infrastructure, is toward reinforcement learning, where the model learns not from labeled examples but from feedback signals: did this extraction produce valid, consistent data downstream? That removes the annotation bottleneck and lets the AI web scraping system improve continuously.
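One way to picture such a feedback signal is as a validity score computed from downstream checks. The sketch below covers only that scoring step, not a full learning loop; the record fields, the validation rules, and the strategy names are assumptions made for illustration.

```python
from typing import Dict, List


def is_valid(record: Dict[str, str]) -> bool:
    """Hypothetical downstream checks: non-empty name, positive numeric price."""
    name = record.get("name", "").strip()
    try:
        price = float(record.get("price", ""))
    except ValueError:
        return False
    return bool(name) and price > 0


def feedback_score(records: List[Dict[str, str]]) -> float:
    """Fraction of extracted records that pass validation (0.0 for no records)."""
    if not records:
        return 0.0
    return sum(is_valid(r) for r in records) / len(records)


# Output of two hypothetical extraction strategies on the same two pages.
candidates = {
    "strategy_a": [
        {"name": "Desk Lamp", "price": "24.99"},
        {"name": "Floor Lamp", "price": "Add to cart"},  # grabbed a button label
    ],
    "strategy_b": [
        {"name": "Desk Lamp", "price": "24.99"},
        {"name": "Floor Lamp", "price": "89.00"},
    ],
}

scores = {name: feedback_score(batch) for name, batch in candidates.items()}
best = max(scores, key=scores.get)
print(scores, best)
```

No page was hand-labeled here. The signal comes entirely from whether the output is usable, which is what makes this kind of feedback cheap to collect at scale.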

Multimodal extraction is already in early production in our pipelines. Models that process text, layout, and visual content simultaneously handle a class of pages (embedded charts, mixed-media product pages, scanned regulatory documents) that single-modality AI web scraping approaches cannot. The accuracy lift on those targets is significant: 20–30 percentage points above what a text-only model achieves on the same pages.

The question we hear most often from CTOs and heads of data thinking about AI web scraping is timing: when does it make sense to move? The honest answer depends on two variables — volume and variability. High volume, high variability: the ML case is compelling within months. High volume, low variability: traditional scraping is still defensible. Low volume: leveraging pre-trained models rather than building from scratch shifts the economics favorably even at a smaller scale.

The AI web scraping accuracy ceiling that defined the last decade of data engineering is no longer structural. It is a choice.

5. Why Global Enterprises Choose Scraping Pros for AI Web Scraping

Scraping Pros builds and manages intelligent AI web scraping infrastructure for organizations that cannot afford pipeline failures, accuracy degradation, or data teams buried in cleaning work. Our client base spans e-commerce, consumer goods, finance, media, and technology: global enterprises and fast-scaling companies that rely on external web data as a core input to pricing decisions, competitive strategy, and market intelligence. From Fortune 500 retailers and multinational consumer goods companies to regional financial institutions and media groups operating across multiple markets, we deliver infrastructure that performs globally and continuously.

Our model is fully managed. We handle everything from model training and infrastructure to data cleaning, structuring, and delivery. Clients receive clean, structured datasets (in CSV, JSON, or via API) without managing AI web scraping complexity internally. No engineering overhead, no maintenance cycles, no silent accuracy degradation going unnoticed for weeks.

The three cases above are representative of the outcomes we consistently deliver: accuracy improvements from 83–85% to 94–97%, maintenance costs that drop by 70–80%, and data quality issues that had been absorbed downstream, silently and at real cost, no longer propagating.

For CTOs, Heads of Data, and Chief Strategy Officers evaluating AI web scraping as a strategic capability, whether to support competitive intelligence, pricing automation, or large-scale market research, the infrastructure question and the business question are the same question. What would your organization do with data that was 97% accurate instead of 83%, and arrived 3–5x faster?

If that question has an answer worth pursuing, get in touch with our team.

Frequently Asked Questions

What is AI web scraping? It uses machine learning models to identify and extract data based on learned patterns rather than fixed selectors — so it adapts when site layouts change instead of breaking.

How accurate is ML data extraction vs. traditional methods? In production on complex, frequently updated sites: 94–97% with ML vs. 80–85% with rule-based approaches. The gap is largest on high-variability targets.

How long does it take to train an extraction model? Typically 2–4 weeks from data annotation to production deployment. ROI generally appears within 6–8 weeks as maintenance and downstream cleaning costs drop.

Does it work on JavaScript-heavy or dynamically rendered sites? Yes — ML models work on the final rendered output, independent of how it was generated. Computer vision variants process the visual state directly.

When does traditional scraping still make sense? For small-scale projects on stable sites, or one-time extractions where training data cost exceeds the accuracy benefit. At volume and high target variability, the ML advantage compounds quickly.