Web scraping is no longer optional for enterprise organizations; it is strategic infrastructure. Dynamic pricing, competitive intelligence, regulatory monitoring, and international market analysis all depend on web data.

But as web scraping becomes more established as a driver of C-level decisions, so does exposure to regulatory, reputational, and operational risks. For enterprise organizations, the discussion is no longer technical; it’s institutional.

At Scraping Pros, we work with a clear premise: the future of the sector lies not in collecting more data, but in building a safer web through ethical and responsible web scraping practices that can withstand audits, public scrutiny, and regulatory changes.

Redefining the Concept of “Safer Web”

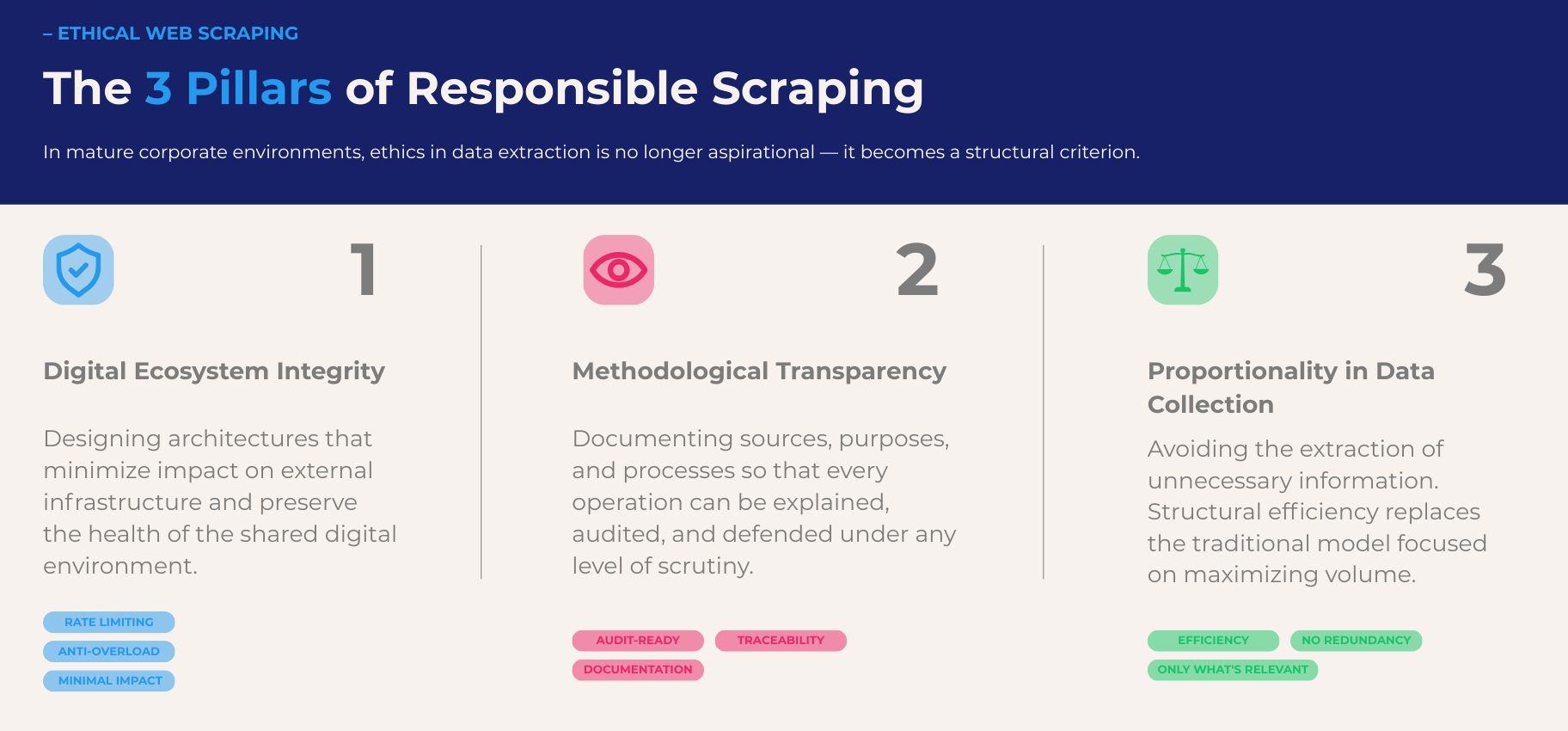

In industry discourse, the term ethical web scraping is often used as an aspirational statement. In a mature corporate environment, it must become a structural criterion.

A safer web does not mean limiting access to public data. It means operating under three integrated principles:

- Respect for the digital integrity of the ecosystem.

- Methodological transparency.

- Proportionality in data collection.

This implies designing architectures that minimize the impact on external infrastructure, document sources and purposes, and avoid collecting unnecessary information. It’s not just regulatory compliance: it’s strategic design.
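Proportionality and source documentation can be made concrete in code. The sketch below is illustrative only. `SourceRecord`, `minimize`, and `dropped_fields` are hypothetical names, and the URL, field allowlist, and jurisdiction code are assumed example values. Each source is registered with its purpose and jurisdiction, and anything outside the declared fields is discarded before storage.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class SourceRecord:
    """Hypothetical registry entry: where a source is, why it is collected, what may be kept."""
    url: str
    purpose: str
    jurisdiction: str
    allowed_fields: frozenset[str] = field(default_factory=frozenset)


def minimize(raw: dict, source: SourceRecord) -> dict:
    """Keep only the fields declared for this source's purpose; drop everything else."""
    return {k: v for k, v in raw.items() if k in source.allowed_fields}


def dropped_fields(raw: dict, source: SourceRecord) -> list[str]:
    """List the discarded fields so the minimization step itself can be audited."""
    return sorted(k for k in raw if k not in source.allowed_fields)


# Example values are assumptions for illustration only.
competitor_prices = SourceRecord(
    url="https://example.com/catalog",
    purpose="competitor price monitoring",
    jurisdiction="AR",
    allowed_fields=frozenset({"sku", "price", "currency", "in_stock"}),
)
raw = {"sku": "A-100", "price": 19.99, "currency": "ARS",
       "in_stock": True, "reviewer_name": "J. Doe"}
print(minimize(raw, competitor_prices))
# {'sku': 'A-100', 'price': 19.99, 'currency': 'ARS', 'in_stock': True}
```

Keeping the allowlist next to the stated purpose lets a reviewer check both in one place, and `dropped_fields` records what was deliberately not kept.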

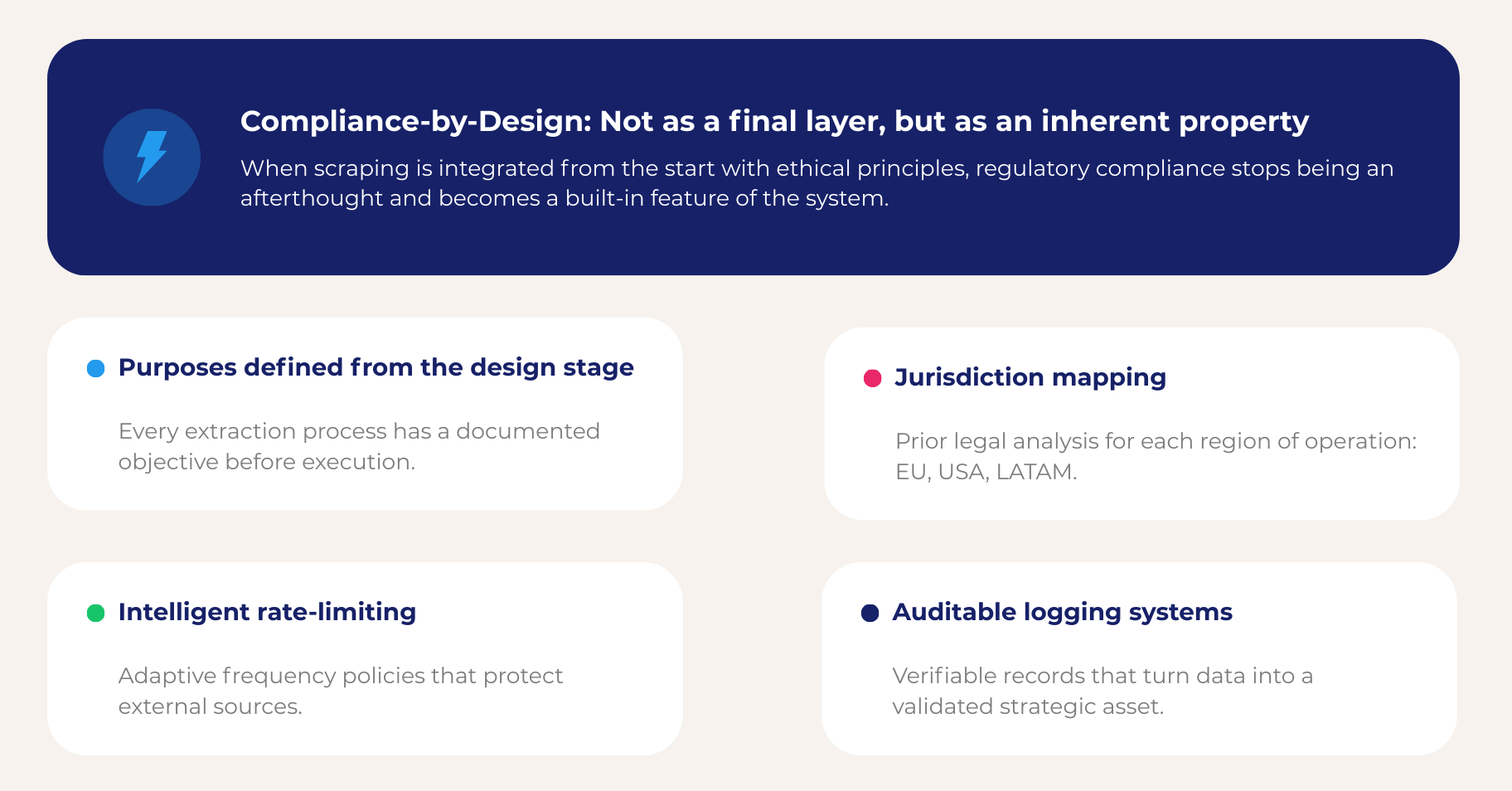

When scraping is integrated from the outset with ethical data extraction principles, compliance ceases to be an afterthought and becomes an inherent property of the system.

Website Scraping as Critical Infrastructure

One of the most frequent mistakes in organizations undergoing digital transformation is treating web scraping as a tactical tool for the data or marketing department. In reality, in enterprise environments, it is critical infrastructure.

If external information impacts pricing, supply chain, business strategy, or acquisition evaluation, then its collection method must be aligned with corporate governance standards.

A robust responsible web scraping model is built from the architecture up. This involves defining specific purposes, mapping relevant jurisdictions, designing intelligent rate-limiting policies that also honor the access rules each site publishes in its robots.txt file (the Robots Exclusion Protocol, standardized as RFC 9309), and establishing auditable logging systems. The goal is not to maximize requests, but to maximize relevance with minimal exposure.
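A minimal sketch of such a gatekeeper, assuming Python's standard `urllib.robotparser` module and an already-downloaded robots.txt. Note that robots.txt expresses allow and disallow rules; request pacing is left to the crawler, and `Crawl-delay` is a widely recognized but non-standard directive. `PoliteFetcher`, `min_interval_s`, and the log fields are hypothetical choices for illustration, and a production system would write the log to durable storage rather than an in-memory list.

```python
import json
import time
import urllib.robotparser
from typing import Callable
from urllib.parse import urlsplit


class PoliteFetcher:
    """Hypothetical pre-request gatekeeper: robots.txt check, per-host pacing,
    and an audit line for every decision."""

    def __init__(self, robots_lines: list[str], user_agent: str,
                 min_interval_s: float,
                 sleep: Callable[[float], None] = time.sleep) -> None:
        self.rules = urllib.robotparser.RobotFileParser()
        # parse() takes already-downloaded lines, so no network access is needed here.
        self.rules.parse(robots_lines)
        self.user_agent = user_agent
        self.min_interval_s = min_interval_s
        self.sleep = sleep
        self.last_request: dict[str, float] = {}  # host -> time.monotonic() of last request
        self.audit_log: list[str] = []  # JSON lines; use durable storage in practice

    def _interval(self) -> float:
        # Honor Crawl-delay if the site declares one (non-standard but widely used),
        # but never go faster than our own policy allows.
        declared = self.rules.crawl_delay(self.user_agent)
        return max(self.min_interval_s, float(declared or 0))

    def permit(self, url: str, purpose: str) -> bool:
        """Return True if the request may proceed, sleeping first if the host was hit recently."""
        host = urlsplit(url).netloc
        allowed = self.rules.can_fetch(self.user_agent, url)
        waited = 0.0
        if allowed:
            last = self.last_request.get(host)
            if last is not None:
                waited = max(0.0, self._interval() - (time.monotonic() - last))
                if waited > 0:
                    self.sleep(waited)
            self.last_request[host] = time.monotonic()
        # Refused requests are logged too, so reviewers see what was not fetched.
        self.audit_log.append(json.dumps({
            "ts": time.time(), "url": url, "purpose": purpose,
            "allowed": allowed, "waited_s": round(waited, 3),
        }))
        return allowed


fetcher = PoliteFetcher(["User-agent: *", "Disallow: /private/"],
                        user_agent="ExampleBot", min_interval_s=2.0)
print(fetcher.permit("https://example.com/products", "competitor price monitoring"))  # True
print(fetcher.permit("https://example.com/private/data", "competitor price monitoring"))  # False
```

Injecting `sleep` keeps the pacing logic testable, and because every decision produces a log line, the same record answers both "what did we fetch?" and "what did we decline to fetch?".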

For a CTO or Chief Risk Officer, the difference between ad hoc and strategic scraping lies in traceability. Can each source and each process be explained and documented? If the answer is no, the risk is not technical: it is reputational.

Responsible Web Scraping in Complex Regulatory Environments

Global organizations operate under multiple and dynamic regulatory frameworks. Europe, the United States, and Latin America present different approaches to data, public access, and corporate responsibilities.

In Europe, the General Data Protection Regulation (GDPR) establishes strict standards around data processing, purpose limitation, and accountability — all of which directly inform how responsible scraping architectures must be designed.

In the United States, state laws such as the California Consumer Privacy Act (CCPA) impose further compliance requirements that organizations must map before any data collection initiative begins.

At Scraping Pros, we work under a model we call Compliance-Ready Architecture. This approach integrates prior risk assessment, structured documentation of sources, verifiable historical records, and update protocols in response to regulatory changes.
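"Verifiable historical records" can be approximated with a hash chain, in which each log entry includes the SHA-256 hash of the entry before it. The sketch below assumes this technique. `AuditTrail`, `GENESIS`, and the payload fields are hypothetical, and the code is not a description of Scraping Pros' internal implementation.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, payload: dict) -> str:
    # Canonical JSON (sorted keys, fixed separators) so identical records hash identically.
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


class AuditTrail:
    """Hypothetical append-only log in which each entry commits to the one before it."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, source_url: str, purpose: str, action: str) -> dict:
        payload = {
            "source_url": source_url,
            "purpose": purpose,
            "action": action,
            "recorded_at": datetime.now(timezone.utc).isoformat(),
        }
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        entry = {"payload": payload, "prev_hash": prev, "hash": _digest(prev, payload)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash in order; return False at the first broken link."""
        prev = GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev or entry["hash"] != _digest(prev, entry["payload"]):
                return False
            prev = entry["hash"]
        return True


trail = AuditTrail()
trail.append("https://example.com/catalog", "competitor price monitoring", "source approved")
trail.append("https://example.com/catalog", "competitor price monitoring", "extraction run")
print(trail.verify())  # True
trail.entries[0]["payload"]["purpose"] = "something else"  # simulate after-the-fact tampering
print(trail.verify())  # False
```

Editing any entry, or reordering or deleting entries that others follow, makes `verify()` fail. Silently dropping the newest entries does not, so in practice the latest hash would also be stored somewhere independent of the log (an assumption beyond this sketch).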

This type of design transforms the legal department into a strategic partner rather than an operational obstacle. When governance is integrated from the outset, the organization can scale securely.

Safer web scraping best practices are not theoretical. They are the difference between an initiative that grows with institutional support and one that becomes a contingent liability.

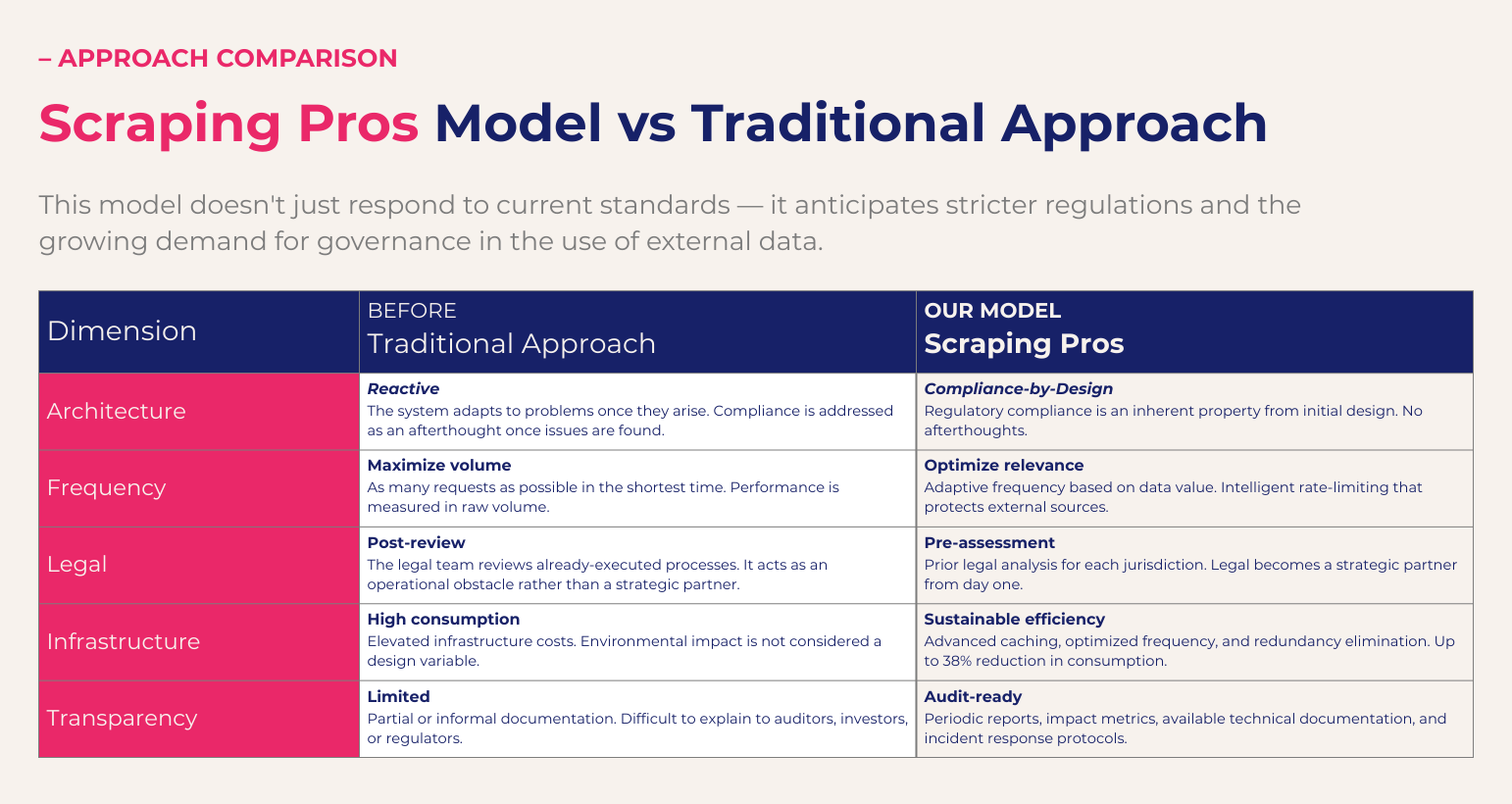

Sustainable Scraping as a Competitive Advantage

There is a dimension that is rarely discussed in the industry: digital sustainability. Every unnecessary request implies energy consumption, infrastructure costs, and strain on external systems.

The traditional approach to scraping focuses on volume and speed. The sustainable approach focuses on structural efficiency.

In our experience, an optimized architecture can significantly reduce infrastructure consumption without affecting data quality. This is achieved through advanced caching systems, optimized extraction frequency, redundancy elimination, and on-demand scalability.
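Caching and redundancy elimination can be sketched with standard HTTP mechanics. Conditional requests send back the `ETag` or `Last-Modified` value seen last time, so a server that supports them can reply `304 Not Modified` without resending the page. Content fingerprinting then skips reprocessing when a full response is byte-identical to the previous one. `PageCache` is a hypothetical class, the HTTP client is left out so the sketch runs offline, and response headers are assumed to arrive in a plain dict with canonical capitalization (real clients treat header names case-insensitively).

```python
import hashlib
from dataclasses import dataclass, field


@dataclass
class PageCache:
    """Hypothetical per-URL store of HTTP validators and content fingerprints."""
    etags: dict[str, str] = field(default_factory=dict)
    last_modified: dict[str, str] = field(default_factory=dict)
    fingerprints: dict[str, str] = field(default_factory=dict)

    def conditional_headers(self, url: str) -> dict[str, str]:
        """Headers that let a supporting server answer 304 instead of resending the page."""
        headers: dict[str, str] = {}
        if url in self.etags:
            headers["If-None-Match"] = self.etags[url]
        if url in self.last_modified:
            headers["If-Modified-Since"] = self.last_modified[url]
        return headers

    def record(self, url: str, status: int, headers: dict[str, str], body: bytes) -> bool:
        """Store validators from a response; return True only if there is new content to process."""
        if status == 304:
            return False  # unchanged, and the server did not resend the body
        if "ETag" in headers:
            self.etags[url] = headers["ETag"]
        if "Last-Modified" in headers:
            self.last_modified[url] = headers["Last-Modified"]
        digest = hashlib.sha256(body).hexdigest()
        if self.fingerprints.get(url) == digest:
            return False  # full response, but identical to last time: skip reprocessing
        self.fingerprints[url] = digest
        return True


cache = PageCache()
url = "https://example.com/catalog"
print(cache.conditional_headers(url))  # {}
print(cache.record(url, 200, {"ETag": '"v1"'}, b"<html>prices</html>"))  # True
print(cache.conditional_headers(url))  # {'If-None-Match': '"v1"'}
print(cache.record(url, 304, {}, b""))  # False
print(cache.record(url, 200, {"ETag": '"v1b"'}, b"<html>prices</html>"))  # False
```

The two checks complement each other: validators save bandwidth on both sides when the server cooperates, while fingerprints still prevent redundant downstream processing when it does not.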

The result is not only environmental. It’s financial and strategic. Organizations increasingly report sustainability metrics under frameworks such as the GRI Standards — and a sustainable scraping model directly strengthens that ESG narrative.

For the C-suite, this isn’t a technical detail. It’s about alignment between digital strategy and corporate responsibility.

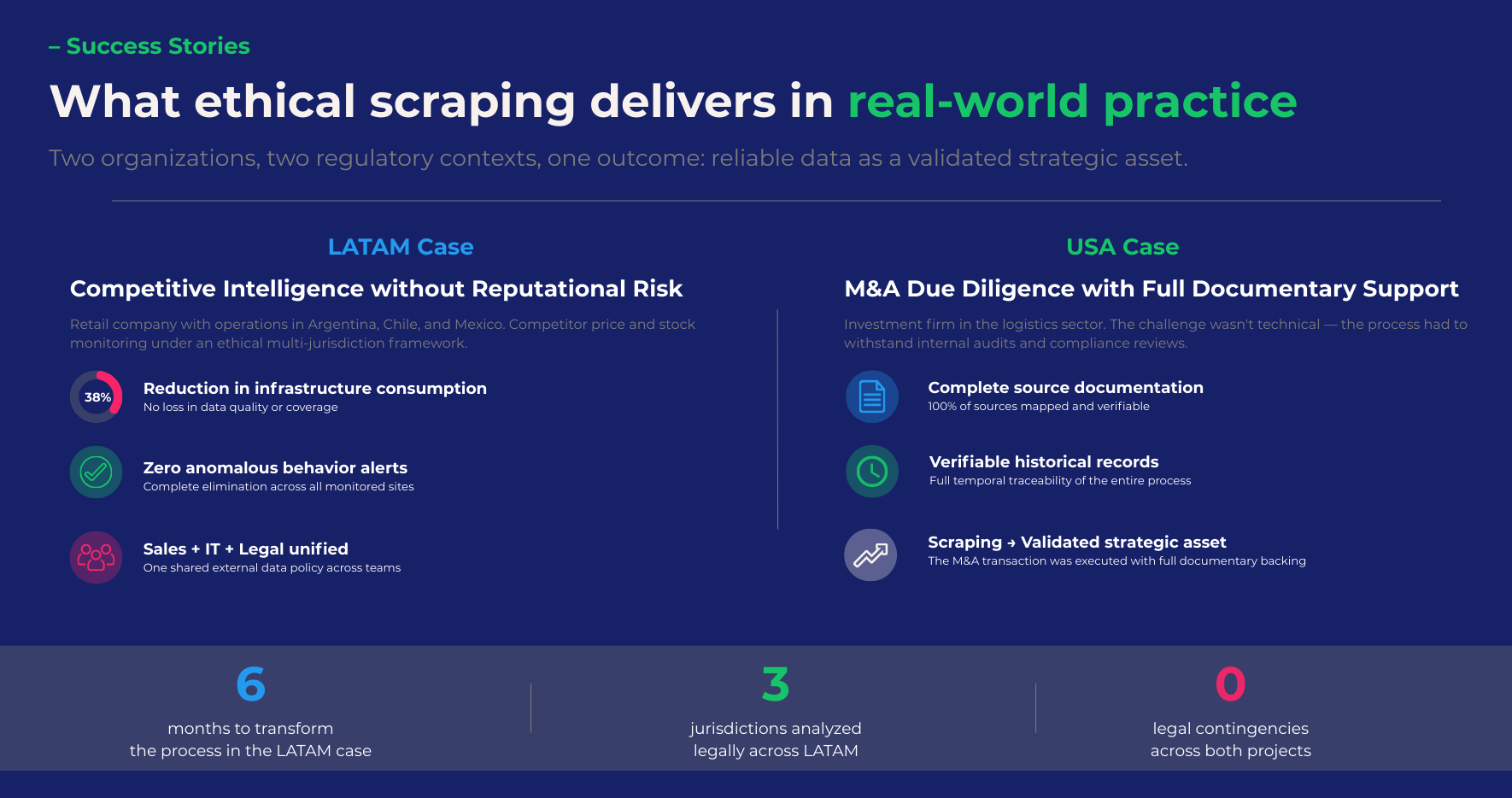

LATAM Case Study: Competitive Intelligence Without Reputational Risk

A retail company with operations in Argentina, Chile, and Mexico needed to monitor competitor prices and stock levels to adjust its regional strategy. The sales team was pushing for an internal, intensive web scraping solution. The legal team expressed concerns related to regulatory compliance and potential reputational exposure.

Scraping Pros redesigned the approach based on safer web scraping practices. A legal analysis was conducted for each jurisdiction, the extraction frequency was optimized to minimize impact, and a complete traceability system with audit-ready logging was implemented.
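One common way to optimize extraction frequency is an adaptive schedule: check a page more often when it changes and back off when it does not. The function below is a hypothetical multiplicative policy with assumed factors (0.5 and 1.5) and assumed bounds (15 minutes to 24 hours). It illustrates the idea and is not the method used in this engagement.

```python
def next_interval(current_s: float, changed: bool,
                  min_s: float = 900.0, max_s: float = 86_400.0) -> float:
    """Adapt how often one page is re-checked (hypothetical policy).

    Changed content: halve the interval to keep up with moving prices.
    Unchanged content: grow it by 1.5x so stable pages receive fewer requests.
    The result is always clamped to [min_s, max_s].
    """
    factor = 0.5 if changed else 1.5
    return min(max_s, max(min_s, current_s * factor))


interval = 3600.0  # start by checking hourly
for changed in [False, False, False, True]:
    interval = next_interval(interval, changed)
    print(interval)
# prints 5400.0, 8100.0, 12150.0, 6075.0 (one per line)
```

The clamp matters in both directions: the lower bound caps load on the monitored site, and the upper bound guarantees a stable page is still revisited at least daily.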

In six months, the company achieved the following:

A) A 38% reduction in infrastructure consumption.

B) Elimination of alerts for anomalous behavior on monitored sites.

C) Integration of Sales, IT, and Legal under a unified external data policy.

The value lay not only in the information obtained but also in the institutionalization of the process.

USA Case Study: Due Diligence for M&A with Documentary Support

In the United States, an investment firm needed to consolidate public data to evaluate acquisitions in the logistics sector. The challenge wasn’t technical; it was one of governance.

The platform had to withstand internal audits and compliance reviews. We implemented an architecture based on ethical web scraping guidelines, with comprehensive source documentation, verifiable historical records, and automated protocols for handling structural changes on monitored sites.
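Structural changes on a monitored site can be detected by fingerprinting the page's markup rather than its text, so routine price updates do not raise alerts but a redesign that renames classes does. This sketch uses only Python's standard `html.parser` module. `structure_fingerprint` and `layout_changed` are hypothetical names, and hashing tag names plus class attributes is one assumed heuristic among many.

```python
import hashlib
from html.parser import HTMLParser
from typing import Optional


class _StructureCollector(HTMLParser):
    """Records each start tag with its sorted class names; text content is ignored."""

    def __init__(self) -> None:
        super().__init__()
        self.signature: list[str] = []

    def handle_starttag(self, tag: str, attrs: list[tuple[str, Optional[str]]]) -> None:
        classes = " ".join(sorted((dict(attrs).get("class") or "").split()))
        self.signature.append(f"{tag}.{classes}")


def structure_fingerprint(html: str) -> str:
    """Hash the page's tag-and-class skeleton."""
    collector = _StructureCollector()
    collector.feed(html)
    collector.close()
    return hashlib.sha256("|".join(collector.signature).encode("utf-8")).hexdigest()


def layout_changed(baseline_fingerprint: str, html: str) -> bool:
    """True when the skeleton differs from the approved baseline, so selectors may break."""
    return structure_fingerprint(html) != baseline_fingerprint


v1 = '<div class="product"><span class="price">$10</span></div>'
v1_price_update = '<div class="product"><span class="price">$12</span></div>'
v2 = '<div class="item-card"><span class="amount">$12</span></div>'
baseline = structure_fingerprint(v1)
print(layout_changed(baseline, v1_price_update))  # False: only the text changed
print(layout_changed(baseline, v2))  # True: class names changed
```

In practice such a check would run before extraction, pausing the job and notifying an owner instead of silently storing empty or misparsed fields.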

The M&A transaction was executed with complete documentary support for the data collection process. Scraping ceased to be an informal input and became a validated strategic asset.

Transparency as a Strategic Differentiator

In mature markets, trust is a competitive advantage. Organizations that operate under responsible web scraping principles can demonstrate, not just declare, their commitment to digital ethics.

At Scraping Pros, we understand transparency as part of the service. Periodic reports, impact metrics, available technical documentation, and incident response protocols are part of our standard operating procedures.

We don’t promise ethics. We structure them.

This model doesn’t just respond to current standards. It anticipates stricter regulations and the growing demand for governance in the use of external data.

Conclusion

Web scraping is already a structural part of the digital economy. The strategic question is not whether to use it, but how to do so without compromising reputation, compliance, and sustainability.

Building a safer web is not an ideological position. It’s corporate leadership.

At Scraping Pros, we help global organizations scale external intelligence with ethical architecture, traceability, and a long-term vision. Because in the new digital standard, the competitive advantage lies not only in the data obtained, but in how it is obtained.