Is your data team spending more time fixing broken scrapers than analyzing data? You’re not alone. Companies operating at petabyte scale face the same challenge: traditional web scrapers that break at the slightest website change.

The solution isn’t more servers. It’s adaptive intelligence.

In a digital ecosystem that is growing exponentially, traditional web scraping is no longer sufficient. Global companies, from retailers to fintechs, need to extract, process, and analyze information at petabyte scale while maintaining accuracy, adaptability, and ROI.

At Scraping Pros, we understand that web scraper performance is no longer measured solely by speed or volume, but by adaptive intelligence, model efficiency, and predictive data extraction optimization.

What Is Web Scraper Performance Optimization?

Web scraper performance optimization is the process of improving the speed, accuracy, and stability of scrapers using machine learning techniques, network optimization, and automatic adaptation to site changes. According to Google’s guidelines on crawling and indexing, the quality and structure of your data pipelines directly affect how reliably your information can be used, which makes optimization a critical step for any data-driven business.

1. The Challenge: Why Traditional Web Scraper Systems Fail at Petabyte Scale

Until recently, data teams operated under simple logic: more servers, more scraping. But that equation collapses when facing millions of dynamic pages, asynchronous JavaScript, and increasingly sophisticated anti-bot defenses.

Today, the key question is: How do you maintain web scraper accuracy and consistency when the environment changes hourly?

This is where the concept of intelligent scraping performance emerges — automation combined with machine learning models that anticipate errors, retrain selectors, and optimize crawling paths without human intervention.

“The performance of a modern scraper depends not only on the code, but on its ability to learn from web behavior and optimize data extraction.” — Scraping Pros R&D Team

For a deeper technical foundation, the W3C’s Web Architecture documentation explains how modern web structure has evolved — a key reason static scraping approaches break so frequently today.

2. Machine Learning Web Scraper: What Petabyte-Scale Optimization Really Means

Working at petabyte scale means operating at a level where traditional scraping strategies are no longer viable. It’s not just about collecting information — it’s about processing, cleaning, and classifying billions of records from multiple sources in real time.

Scraping Pros has developed distributed extraction pipelines combining machine learning, intelligent deduplication, and parallel orchestration systems capable of handling 1 to 3 PB per month in global e-commerce, price intelligence, and trend monitoring projects.

Real-World Case Study: International Retailer

One project required continuous collection of prices, availability, and reviews for 120 million products across 45 countries. The system automatically adapted to HTML changes, local languages, and different time zones.

Using ML-based semantic pattern detection models and efficient scraping algorithms:

- Computational costs per page reduced by 37%

- Data delivered in near real time

- Scraper efficiency improved significantly across all markets

Real-World Case Study: Financial Intelligence

In the financial intelligence sector, heterogeneous sources (reports, open APIs, press releases, regulatory databases) were unified. Scraping Pros’ ML pipelines consolidated 900 TB of historical data plus 2 PB of new data in 60 days, with an error rate below 0.02%.

3. Strategy 1 — Smart Selectors: AI-Powered Data Extraction

While traditional systems rely on fixed rules or static XPaths, our approach uses AI selectors based on large language models. These interpret the semantic context of HTML, automatically adjust selectors, and reduce extraction errors by up to 40%.

This approach builds on standard CSS selectors and DOM APIs rather than replacing them: the model identifies elements by what they mean on the page, then targets them without relying on brittle position-based paths.
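To illustrate why meaning-based targeting survives layout changes, here is a minimal sketch using only the Python standard library. It is not the LLM-based selector described above: it swaps in a simple heuristic that scores elements by price-related attribute keywords and price-shaped text. The names `PRICE_HINTS`, `PriceFinder`, and `extract_price`, as well as the keyword list and price pattern, are assumptions made for this example.

```python
import re
from html.parser import HTMLParser

PRICE_HINTS = ("price", "amount", "cost")       # assumed attribute keywords
PRICE_TEXT = re.compile(r"[$€£]\s?\d[\d.,]*")   # assumed price format
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img",
             "input", "link", "meta", "source", "track", "wbr"}


class PriceFinder(HTMLParser):
    """Collects price-shaped text, scored by hints on enclosing elements."""

    def __init__(self):
        super().__init__()
        self._open_scores = []   # hint score of each currently open element
        self.candidates = []     # (score, price_text) pairs

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return  # void elements never get a closing tag
        blob = " ".join(value or "" for _, value in attrs).lower()
        self._open_scores.append(sum(hint in blob for hint in PRICE_HINTS))

    def handle_endtag(self, tag):
        if tag not in VOID_TAGS and self._open_scores:
            self._open_scores.pop()

    def handle_data(self, data):
        match = PRICE_TEXT.search(data)
        if match:
            # Hints on any ancestor count, so wrapper changes do not matter.
            self.candidates.append((sum(self._open_scores), match.group()))


def extract_price(html):
    """Return the best-scoring price string, or None if none is found."""
    finder = PriceFinder()
    finder.feed(html)
    if not finder.candidates:
        return None
    return max(finder.candidates, key=lambda c: c[0])[1]
```

Because the score depends on attribute hints rather than on tag names or nesting depth, the same function can handle a page before and after a redesign, as long as some price-related hint remains. The sketch assumes reasonably well-formed HTML; unclosed tags would skew the scores.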

4. Strategy 2 — Predictive Scraping for Automated Data Collection

Our predictive scraping models analyze historical blocking and performance patterns to anticipate problems. For example, if a site changes its layout every two weeks, the system proactively adapts — reducing maintenance times and avoiding critical interruptions in the data chain.
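The two-week layout example can be sketched with a very simple cadence estimate. This is an illustrative stand-in for a trained model, assuming the only input is a list of dates on which past layout changes were detected; `predict_next_change`, `should_recheck_selectors`, and the `lead_days` parameter are hypothetical names.

```python
from datetime import date, timedelta
from statistics import median


def predict_next_change(change_dates):
    """Estimate the next layout change from the median gap between past ones."""
    if len(change_dates) < 2:
        return None  # not enough history to estimate a cadence
    ordered = sorted(change_dates)
    gaps = [(later - earlier).days for earlier, later in zip(ordered, ordered[1:])]
    return ordered[-1] + timedelta(days=median(gaps))


def should_recheck_selectors(today, change_dates, lead_days=1):
    """True once today is within lead_days of the predicted change."""
    predicted = predict_next_change(change_dates)
    return predicted is not None and today >= predicted - timedelta(days=lead_days)
```

A scheduler could call `should_recheck_selectors` daily and trigger selector validation ahead of the expected change instead of waiting for extraction to fail.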

5. Strategy 3 — Adaptive Throttling Algorithms

Using adaptive throttling algorithms, our web scraper adjusts request frequency based on server load, reducing the risk of detection and cutting network costs by up to 22%.

The result: more stable, resilient, and economically sustainable pipelines.
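One common way to adjust request frequency to server load is to back off quickly when the server shows strain and recover slowly when it responds well. The sketch below assumes that HTTP 429/503 responses or slow replies indicate load; the class name, thresholds, and step sizes are illustrative choices, not the production algorithm.

```python
class AdaptiveThrottle:
    """Delay controller: double the delay under load, shrink it gradually otherwise."""

    def __init__(self, min_delay=0.5, max_delay=60.0, slow_threshold=2.0, step=0.25):
        self.min_delay = min_delay
        self.max_delay = max_delay
        self.slow_threshold = slow_threshold  # seconds; assumed sign of a busy server
        self.step = step                      # seconds removed after each healthy reply
        self.delay = min_delay

    def record(self, status_code, response_seconds):
        """Update the delay from the latest response and return the new value."""
        overloaded = status_code in (429, 503) or response_seconds > self.slow_threshold
        if overloaded:
            self.delay = min(self.max_delay, self.delay * 2)
        else:
            self.delay = max(self.min_delay, self.delay - self.step)
        return self.delay
```

A crawler would call `record` after every response and sleep for the returned number of seconds before the next request to the same host.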

6. Strategy 4 — The 4-Layer Intelligent Pipeline

Unlike monolithic architectures, Scraping Pros builds modular and self-adjusting infrastructures. Each project behaves like a living system, learning from traffic, content type, and business context.

| Layer | Function |
|---|---|
| Extraction Layer | Distributed agents with AI selectors and dynamic rendering |
| Validation Layer | Real-time anomaly detection and self-retraining |
| Storage Layer | Embedding-based deduplication and ML compression |
| Delivery Layer | Normalization via REST/GraphQL API with version control |
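The layered structure can be pictured as four small, independently replaceable stages. The sketch below uses deliberately simple stand-ins: rule-based validation instead of anomaly detection, and exact content hashing instead of embedding-based deduplication. All function names and the record fields `sku` and `price` are assumptions for illustration.

```python
import hashlib
import json


def extract(raw_pages):
    """Extraction stand-in: pages are assumed to be parsed into dicts already."""
    return [dict(page) for page in raw_pages]


def validate(records, required=("sku", "price")):
    """Validation stand-in: drop records with missing fields or non-positive prices."""
    return [
        r for r in records
        if all(r.get(field) is not None for field in required) and r["price"] > 0
    ]


def deduplicate(records):
    """Storage stand-in: exact-match deduplication on a content hash."""
    seen, unique = set(), []
    for r in records:
        digest = hashlib.sha256(json.dumps(r, sort_keys=True).encode()).hexdigest()
        if digest not in seen:
            seen.add(digest)
            unique.append(r)
    return unique


def deliver(records, schema_version="v1"):
    """Delivery stand-in: wrap records in a versioned payload."""
    return {"schema_version": schema_version, "count": len(records), "items": records}


def run_pipeline(raw_pages):
    return deliver(deduplicate(validate(extract(raw_pages))))
```

Keeping each layer behind a plain function boundary is what makes it possible to swap one stage, for example replacing the hash with a similarity search, without touching the others.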

7. Strategy 5 — Intelligent Anti-Bot Technology

While other providers rely on rotating proxies or manual solutions, our ML agents analyze behavioral signals (headers, timings, scroll patterns) in real time and automatically adapt their fingerprint.

This technology achieves a 94% anti-bot bypass rate — one of the highest in the industry. For context on why anti-bot systems have become so sophisticated, Cloudflare’s bot management research offers a comprehensive overview of how detection mechanisms work.

8. Strategy 6 — Accelerated JavaScript Rendering

With renderers distributed across specialized containers, we achieve average render times of 2.3 seconds per page, compared with 3.8–4.1 seconds for our main competitors. This translates directly into more pages per hour and faster time-to-insight.
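As a rough check on what those timings mean for throughput, the sketch below converts seconds per page into pages per hour. It assumes a single renderer handling pages one after another, which the article does not state; real deployments run many renderers in parallel, so only the ratio between the figures is meaningful.

```python
SECONDS_PER_HOUR = 3600


def pages_per_hour(seconds_per_page):
    """Sequential throughput of one renderer (an assumed simplification)."""
    return SECONDS_PER_HOUR / seconds_per_page


def speedup(baseline_seconds, improved_seconds):
    """How many times more pages the faster renderer handles in the same time."""
    return baseline_seconds / improved_seconds


ours = pages_per_hour(2.3)         # about 1,565 pages per hour
slowest = pages_per_hour(4.1)      # about 878 pages per hour
fastest_rival = pages_per_hour(3.8)  # about 947 pages per hour
```

Under that assumption, 2.3 seconds per page works out to roughly 1.65 to 1.78 times the throughput of a 3.8 to 4.1 second renderer.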

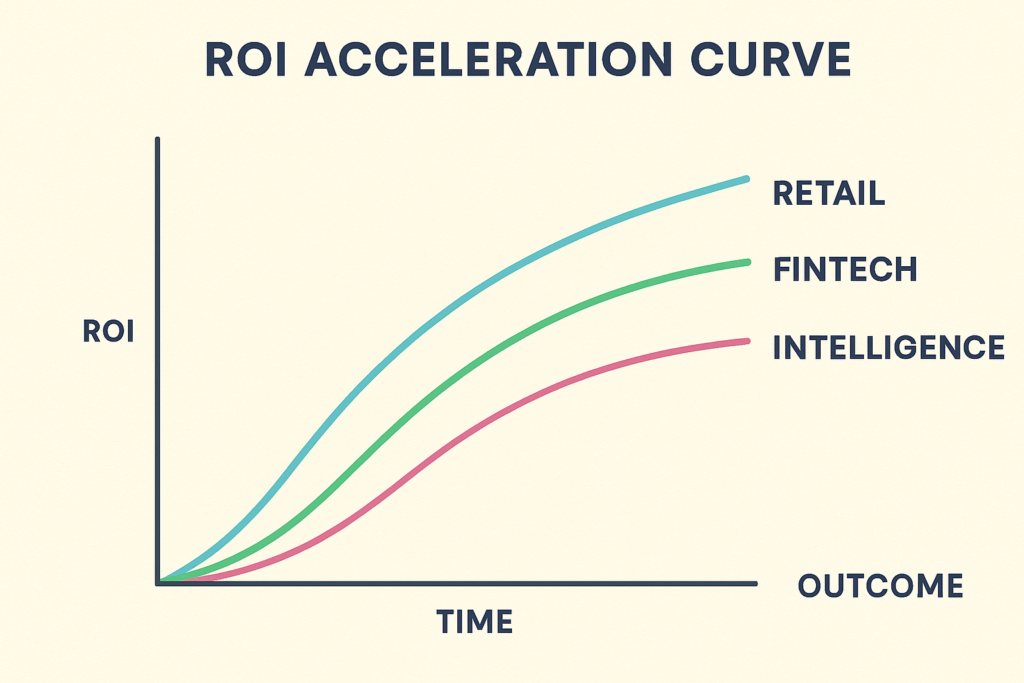

9. Strategy 7 — Time-to-Value Framework

Technical scraping performance is meaningless if it doesn’t translate into economic impact. At Scraping Pros, we design each project based on a measurable Time-to-Value model:

| Phase | Duration | Key Result | Success Metric |
|---|---|---|---|
| Discovery | 3–5 days | KPIs and quick-wins identified | >3 quick-wins |
| MVP | 10–15 days | First actionable dataset | First data-driven decision |
| Monetization | 20–30 days | Data-driven decisions | Visible ROI breakeven |
| Scaling | 45–60 days | Positive ROI documented | >120% ROI confirmed |

Case Study: Retail Pricing Intelligence (LATAM)

Client: Top 5 retailer in Mexico — 2,400 stores + e-commerce

Challenge: Monitor 12M SKUs daily across 23 competitors

Results:

- Discovery (4 days): 89% of price changes happened between 8–11 a.m. and 7–9 p.m. Quick-win: focusing on the 33% of SKUs that were volatile cut the monitoring scope by 67%

- MVP (12 days): 4M SKUs/day scraped, 94% extraction accuracy, 12,400 pricing changes detected daily

- Monetization (28 days): 28% reduction in non-competitive price incidents, additional $2.4M in Q4 2024

- Scaling (55 days): Expanded to Colombia and Chile. $180K investment vs. $4.2M value, a 23.3x return (about 2,233% net ROI)
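The scaling-phase arithmetic can be checked directly from the $180K and $4.2M figures above. The sketch distinguishes the gross return multiple (value divided by investment) from net ROI (gain after subtracting the investment); the function names are illustrative.

```python
def return_multiple(value, investment):
    """Gross value generated per dollar invested."""
    return value / investment


def net_roi_percent(value, investment):
    """Net ROI: gain after recovering the investment, as a percentage."""
    return (value - investment) / investment * 100


investment = 180_000
value = 4_200_000

multiple = return_multiple(value, investment)   # about 23.3x
net_roi = net_roi_percent(value, investment)    # about 2,233%
```

Expressed as a percentage of the investment, the gross multiple is about 2,333%, while net ROI under the standard definition is about 2,233%.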

A key insight: we built a fake-discount detection system that identified “previously $X, now $Y” where X was never the real price — generating an additional $840,000 beyond simple competitor price matching.
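The core check behind fake-discount detection can be sketched in a few lines: compare the advertised "previous" price with the prices actually observed for that product. This is a simplified assumption about how such a check might work, with a hypothetical function name and a 1% tolerance chosen for illustration.

```python
def is_fake_discount(claimed_previous, price_history, tolerance=0.01):
    """Flag a 'previously $X' claim when X never appears in the observed history.

    Returns None when there is no history to compare against.
    """
    if not price_history:
        return None
    return not any(
        abs(observed - claimed_previous) <= tolerance * claimed_previous
        for observed in price_history
    )
```

For example, a "previously $100, now $59.90" banner on a product whose scraped history only ever shows prices around $60 would be flagged, while the same banner on a product that did sell at $100 would not.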

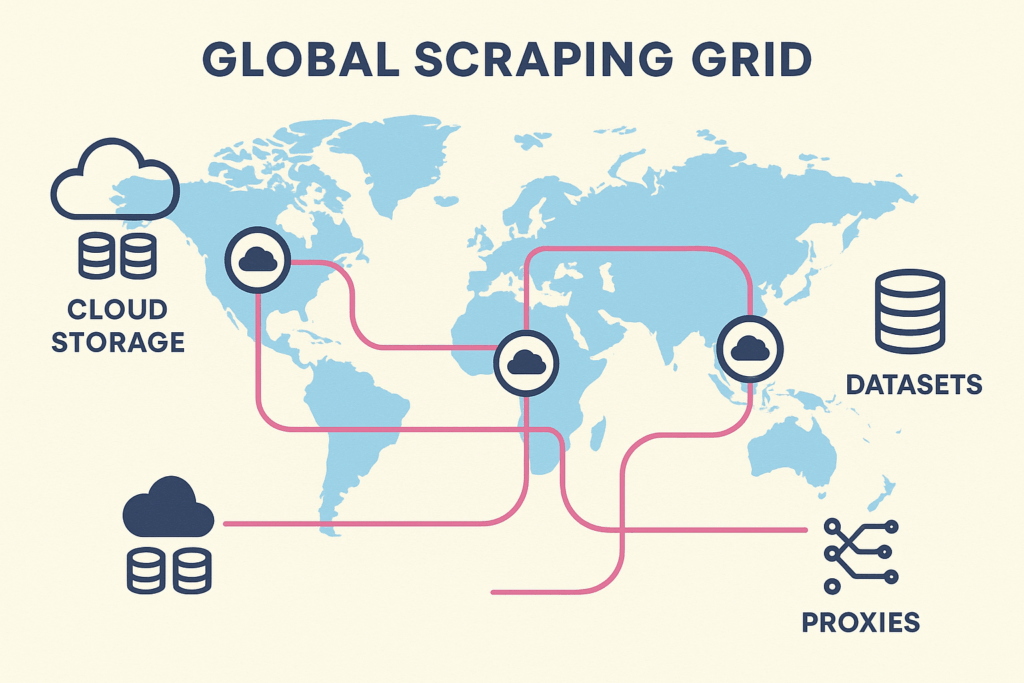

10. Global Scraping Performance: Intelligent Geodistribution

Scraping Pros employs distributed nodes across the Americas, Europe, and Asia with balancing algorithms that dynamically allocate resources based on load and geolocation.

This model reduces latency by 35% compared to centralized architectures and enables compliance with local data protection regulations. Our ML models also incorporate geofeatures — learning which regions offer greater stability or shorter response times.
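Learning which regions respond fastest can be approximated with an exponentially weighted moving average of observed latency per region. The sketch below is a minimal illustration under that assumption; `RegionSelector`, the region labels, and the smoothing factor `alpha` are hypothetical.

```python
class RegionSelector:
    """Tracks smoothed latency per region and picks the fastest one."""

    def __init__(self, regions, alpha=0.3):
        self.alpha = alpha  # weight given to the newest measurement
        self.latency = {region: None for region in regions}

    def observe(self, region, seconds):
        """Fold a new latency measurement into the region's moving average."""
        previous = self.latency[region]
        if previous is None:
            self.latency[region] = seconds
        else:
            self.latency[region] = self.alpha * seconds + (1 - self.alpha) * previous

    def best(self):
        """Return an unmeasured region first, otherwise the lowest-latency one."""
        for region, value in self.latency.items():
            if value is None:
                return region
        return min(self.latency, key=self.latency.get)
```

The moving average lets a region recover after a temporary slowdown while still reacting to sustained degradation. A production balancer would also weigh load and data-residency rules, which this sketch omits.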

In LATAM projects, the platform adapted to local dialects, detecting linguistic variations (“shirt” vs. “t-shirt”) and improving dataset quality with truly contextual intelligence.

Internal Resources

Looking to get started or learn more about our approach?

- 🔗 Explore our Web Scraping Plans and Pricing — Growth, Business, and Enterprise tiers

- 🔗 Try our Free API — Test our web scraper infrastructure before committing

- 🔗 Browse our Solutions — Industry-specific scraping pipelines

The Future of Web Scraping: Interoperability and Responsible AI

Next-generation web scraper systems will integrate three vectors:

A) Continuous Learning — Models that retrain themselves and adapt to environmental changes

B) Semantic Interoperability — Integration with private APIs, metaverses, and IoT ecosystems

C) Ethical Scraping — Compliance with terms of service and transparency in automated data collection

At Scraping Pros, we work to ensure that AI improves not only scraping performance but also data trust and traceability.

“The future of scraping isn’t about more speed. It’s about more intelligence, context, and ethics in every byte.” — Scraping Pros Global Team

Conclusion: Web Scraper Performance Redefined

Web scraping performance is no longer defined by how many pages are downloaded, but by how quickly and accurately data is converted into business value.

Scraping Pros leads this change with a unique combination of machine learning, intelligent automation, and strategic vision.

Ready to optimize your data extraction?

Extracting more than 100M pages per month? Schedule a free technical audit of your web scraper infrastructure and discover how ML-powered optimization can reduce costs and accelerate your time-to-insight.

FAQ: Web Scraper Performance & ML Optimization

1. What is web scraping performance optimization?

It is the process of improving the speed, accuracy, and stability of scrapers using machine learning techniques, network optimization, and automatic adaptability to site changes.

2. How does machine learning help improve scraping?

ML models detect patterns, predict failures, and automatically adjust selectors, reducing errors and manual maintenance in automated data collection.

3. What sets Scraping Pros apart from competitors?

Scraping Pros combines state-of-the-art AI, modular infrastructure, and transparent ROI metrics, delivering faster and more stable results at a lower cost.

4. How long does it take to show value?

On average, less than 15 days. Our Time-to-Value methodology prioritizes incremental results from the MVP phase.

5. Does Scraping Pros operate globally?

Yes — Latin America, Europe, and North America, with support across multiple time zones and regulatory contexts.

6. Which industries benefit most from intelligent scraping?

Retail, fintech, market intelligence, academic research, and data companies monitoring dynamic or sensitive information.

7. How does Scraping Pros ensure ethical and legal data extraction?

We implement compliance protocols (robots.txt, frequency limits, data anonymization) and internal transparency audits.
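As an illustration of the robots.txt part of such a protocol, Python's standard library includes `urllib.robotparser`. The sketch below parses an inline example file rather than fetching one; the rules, the `example-bot` user agent, and the `example.com` URLs are made up for the example.

```python
from urllib.robotparser import RobotFileParser

# Example rules, invented for illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /checkout/
Crawl-delay: 5
"""


def build_policy(robots_text):
    """Parse robots.txt content into a reusable policy object."""
    parser = RobotFileParser()
    parser.parse(robots_text.splitlines())
    return parser


policy = build_policy(ROBOTS_TXT)

# Check each URL before requesting it.
allowed = policy.can_fetch("example-bot", "https://example.com/products/1")
blocked = policy.can_fetch("example-bot", "https://example.com/checkout/cart")

# Honor the site's requested delay between requests, if it declares one.
delay_seconds = policy.crawl_delay("example-bot")
```

In a real crawler, the policy would be loaded from each site's own robots.txt and refreshed periodically, and the crawl delay would feed into the request scheduler.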